How CrePal became the AI video director

The AI video generation market is entering a breakout phase.

According to MarketsandMarkets (2025), the global market for AI-driven video tools reached $716.8 million in 2025 and is projected to climb to $2.56 billion by 2032, growing at a CAGR of 20.1%.
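These figures can be sanity-checked with the standard compound-growth formula, CAGR = (end / start)^(1 / years) − 1. The sketch below assumes $716.8 million is the 2025 starting value and $2.56 billion the 2032 end value, seven years apart.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted above, in millions of USD (assumed: 2025 start, 2032 end).
start_2025 = 716.8
end_2032 = 2560.0
years = 2032 - 2025

implied = cagr(start_2025, end_2032, years)
print(f"Implied CAGR: {implied:.1%}")

forward = project(start_2025, 0.201, years)
print(f"2032 value at 20.1% CAGR: ${forward:,.0f}M")
```

Run as-is, the implied rate comes out just under 20% and the forward projection lands near $2.58 billion, both close to the quoted figures.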

But what does this rapid growth really mean for independent creators like me?

From Week-Long Workflows to Minutes

Not long ago, producing a single minute of professional AI video was painfully slow and expensive.

I used to juggle five or more platforms—from prompt builders to motion editors—just to finish one clip.

Each minute of polished output could cost around ¥2,000 (≈ $280) and take a full week to deliver.

That barrier locked out most creators from participating in the AI-video revolution.

Then CrePal changed everything.

This platform transformed the process from fragmented and manual to orchestrated and intelligent.

By coordinating multiple specialized models, it cut my production cost to $2–3 per video and reduced turnaround from days to mere minutes.

The Vision Behind CrePal

CrePal was founded by Jiaming Liu, a former AI Product Manager at Tencent WeChat BU.

The company’s early experiments centered on an AI dating assistant, but in March 2024 it made a decisive pivot toward AI video agents: tools designed to automate the creative pipeline.

Its first flagship product, AI VLOG, quickly climbed to the top of Product Hunt’s daily and weekly charts, signaling strong community traction.

Following the public beta launch on July 16, 2025, CrePal attracted 5,000+ users within just two weeks.

By August 1, paid subscriptions had exceeded $500 in under 48 hours, a clear sign of unmet demand in the market.

Meeting the Market’s Demand for Efficiency

The appetite for faster content creation is real.

A 2025 HubSpot survey found that 62% of marketers using AI for video say text-to-video platforms helped them cut production time by over 50%.

Yet most existing tools still require creators to patch together multiple workflows across different browsers and applications.

CrePal takes a different approach.

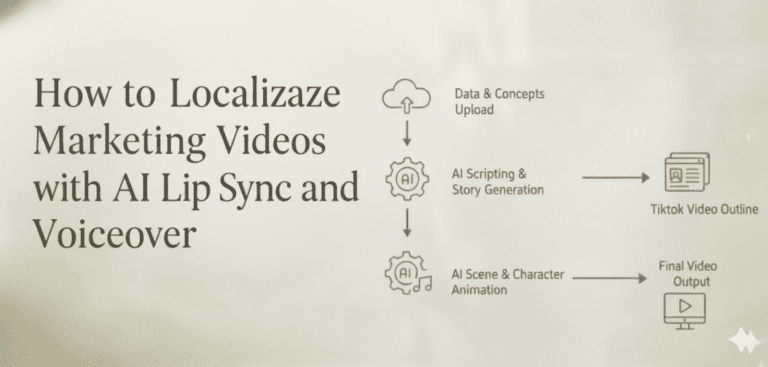

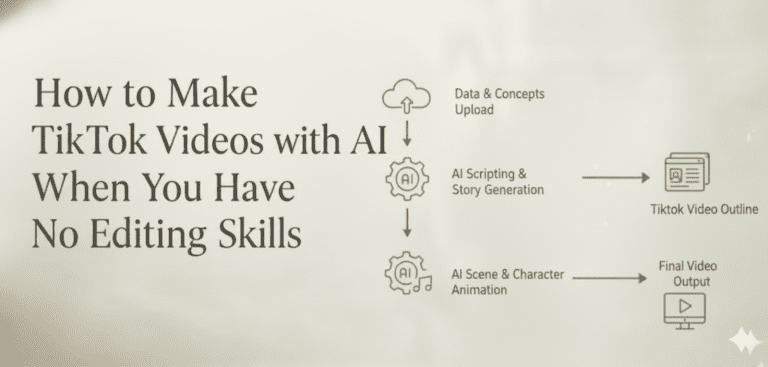

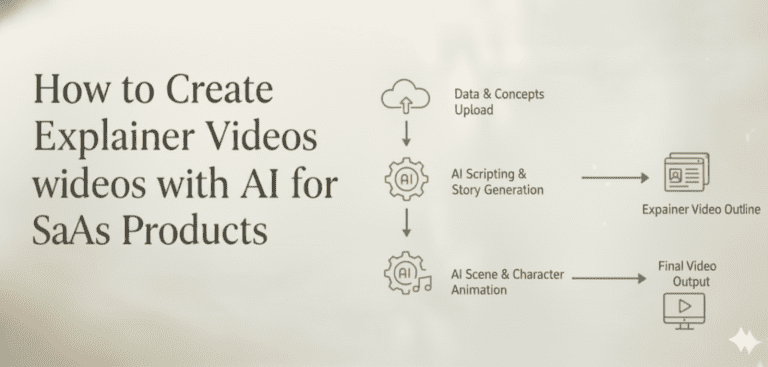

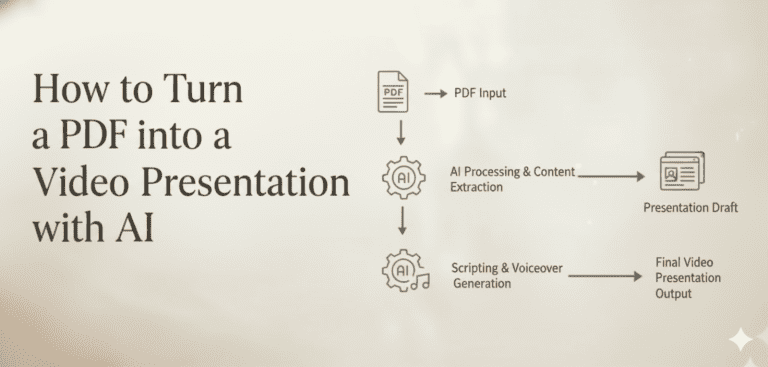

I simply describe my creative goal in natural language, and its orchestration engine delegates each task to the best-fit model: for instance, ChatGPT Plus for scripting, Runway Gen-3 for motion rendering, or ElevenLabs for lifelike voiceovers, all coordinated within a browser such as Chrome or Firefox.

From Tools to Direction

Rather than being “just another model,” CrePal acts as the director: the brain that coordinates every creative step.

It eliminates constant context-switching between tools like Photoshop, Notion, or Google Workspace, and instead delivers a cohesive, automated workflow from concept to export.

For me, that means I can focus entirely on storytelling and creative direction while CrePal handles the rest, turning what once took a week into a polished, platform-ready video before I finish my morning coffee.

Evolution from text-to-video to intelligent orchestration

The shift from single-model tools to orchestration platforms marks the real breakthrough in AI video creation.

People once had to juggle five or six different platforms just to make a short video with generative AI. I used several web browsers, managed countless prompts, and even edited rough cuts by hand in Final Cut.

Now, I simply set my goal in CrePal using plain language. The system understands my intent and carries out every step without needing manual tweaks.

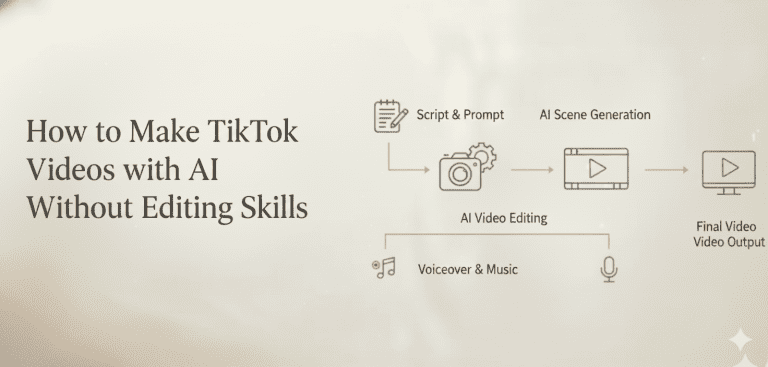

How the orchestration workflow operates

CrePal handles everything by breaking down my request into coordinated tasks. Each specialized model tackles the job it does best.

The system plans the script with DeepSeek or other LLMs, pulls images from Midjourney or GPT-Image, generates clips with Veo or Seedance, and then adds music from Suno.

Editing is handled by CrePal’s own Agent editor, since no public model gets transitions and rhythm right on its own yet. If I want control at any stage, whether by changing environment variables for Python rendering or by swapping models, I can take it.
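The decomposition described above can be pictured as an ordered list of stages, each assigned a default model, with a shared context so later stages can build on earlier outputs. This is a minimal sketch under stated assumptions: the `Stage` class, `run_pipeline`, and `fake_model` are hypothetical stand-ins rather than CrePal’s API, the models are simulated so the example runs offline, and the `overrides` argument mimics swapping a model at any stage.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

# Hypothetical model call: reads the accumulated context, returns this stage's output.
ModelFn = Callable[[Dict[str, str]], str]


def fake_model(name: str) -> ModelFn:
    """Placeholder model that records its name, the goal, and how many prior outputs it saw."""
    def call(context: Dict[str, str]) -> str:
        prior_outputs = len(context) - 1  # everything except the original goal
        return f"{name}({context['goal']}, inputs={prior_outputs})"
    return call


@dataclass
class Stage:
    task: str           # e.g. "script", "images"
    default_model: str  # model used unless overridden


# Stage order and default assignments follow the workflow described above.
PIPELINE: List[Stage] = [
    Stage("script", "DeepSeek"),
    Stage("images", "Midjourney"),
    Stage("clips", "Veo"),
    Stage("music", "Suno"),
    Stage("edit", "Agent editor"),
]


def run_pipeline(goal: str, overrides: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Run each stage in order, storing every output in the shared context."""
    overrides = overrides or {}
    context: Dict[str, str] = {"goal": goal}
    for stage in PIPELINE:
        model_name = overrides.get(stage.task, stage.default_model)
        context[stage.task] = fake_model(model_name)(context)
    return context


result = run_pipeline("rainy futuristic ruins", overrides={"images": "GPT-Image"})
for task in ("script", "images", "clips", "music", "edit"):
    print(task, "->", result[task])
```

Keeping the stage list as data rather than hard-coded calls is what makes per-stage model swaps a one-line override.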

While competitors like InVideo AI serve over 50 million users creating 7 million videos each month, CrePal differentiates itself through deeper model orchestration rather than relying on a single AI engine.

When finished, I export directly to TikTok or YouTube Shorts. The formats fit each platform perfectly without cross-platform stitching headaches.

This kind of model orchestration saves time and makes content creation about as easy as sharing a Google Drive link.

How multi-model synergy improves video quality

CrePal’s pipeline uses the best model for each job. This intelligent routing is what separates orchestration platforms from single-model tools.

For realism and face consistency, Midjourney produces AI-generated images with impressive results. When I need to blend pictures, I rely on GPT-Image because it fuses multiple references well.

Specialized model selection for different video tasks

For cinematic effects or smooth transitions, Veo leads: it creates polished clips that look like high-quality movie scenes. Independent reviewers testing it in 2025 found that Veo footage costs roughly $6 per 8 seconds.

Hailuo is ideal for motion tasks such as “small animals diving,” because its engine handles movement physics much better than the others.

The system organizes knowledge in databases and maps every generation step to the model best suited to it, while keeping things scalable and customizable in browsers such as Microsoft Edge and Safari.

| Model | Primary Strength | Best Use Case |
|---|---|---|
| Midjourney | Face consistency & realism | Character-focused scenes |
| Veo | Cinematic transitions | Polished video clips |
| Hailuo | Motion physics | Dynamic movement |
| Suno | Audio generation | Background music |
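The table amounts to a lookup from task type to model. A minimal sketch, assuming each shot is tagged with a single task type up front; the tag names, the `route_shot` function, and the choice of Veo as the fallback are hypothetical, and a real router would presumably weigh more signals than one tag.

```python
from typing import Dict

# Mirrors the table above: task type -> preferred model.
ROUTES: Dict[str, str] = {
    "character": "Midjourney",  # face consistency & realism
    "cinematic": "Veo",         # polished clips and transitions
    "motion": "Hailuo",         # movement physics
    "music": "Suno",            # background audio
}


def route_shot(task_type: str, fallback: str = "Veo") -> str:
    """Pick the model for a shot, falling back when the task type is unknown."""
    return ROUTES.get(task_type, fallback)


shots = [
    ("close-up of the girl at the window", "character"),
    ("slow push-in through the rain", "cinematic"),
    ("small animals diving", "motion"),
    ("ambient score", "music"),
    ("title card", "text"),  # not in the table, so it uses the fallback
]
for description, task_type in shots:
    print(f"{description!r} -> {route_shot(task_type)}")
```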

Maintaining consistency across assets

A carefully tuned prompt system keeps the style steady across all assets and reduces visual drift between scenes, whether the references come from Pinterest or are modeled on promo graphics for TikTok and Instagram campaigns.

Automated asset filtering checks outputs before drafts are shown, so oddities rarely get through. The “gacha” randomness of rerolling generations until one works drops sharply, which saves editing time in the Agent editor, and chat assistants powered by OpenAI or Gemini models can guide more complex edits alongside drag-and-drop tools.
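The filtering step can be pictured as a generate, check, and retry loop that only surfaces drafts passing a quality gate. A minimal sketch, assuming each candidate arrives with a numeric quality score; the scorer, the 0.8 threshold, and the five-attempt cap are hypothetical, and the candidates are simulated so the example runs offline.

```python
from typing import Callable, Iterator, List, Optional, Tuple

Candidate = Tuple[str, float]  # (asset id, quality score in [0, 1])


def first_passing(
    generate: Callable[[], Candidate],
    threshold: float = 0.8,
    max_attempts: int = 5,
) -> Tuple[Optional[str], List[Candidate]]:
    """Generate candidates until one clears `threshold` or attempts run out.

    Returns the accepted asset id (or None) plus every candidate tried,
    so rejected outputs can be logged instead of shown to the user.
    """
    tried: List[Candidate] = []
    for _ in range(max_attempts):
        asset_id, score = generate()
        tried.append((asset_id, score))
        if score >= threshold:
            return asset_id, tried
    return None, tried


def simulated(scores: List[float]) -> Callable[[], Candidate]:
    """Simulated generator that yields pre-scored drafts in order."""
    it: Iterator[Tuple[int, float]] = iter(enumerate(scores))

    def generate() -> Candidate:
        i, score = next(it)
        return f"draft-{i}", score

    return generate


accepted, tried = first_passing(simulated([0.42, 0.65, 0.91, 0.88]))
print("accepted:", accepted, "after", len(tried), "attempts")
```

Capping attempts keeps the loop from burning credits indefinitely; returning `None` lets the caller decide whether to relax the prompt or ask the user.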

Batch storyboard output, coming soon, should speed up my workflow even more. Track preview will allow real-time tweaks directly from the frontend, while text-to-speech will bring dialogue edits closer to the instant feedback of apps like Google Vids.

Keeping characters consistent is now easy because I set the persona at frame one, which stops the strange shifts common in single-model workflows. Best of all, this orchestration means each finished video costs me only $2 to $3, instead of breaking my monthly budget on platforms without this kind of synergy built in.
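Setting the persona at frame one can be approximated by defining the character and style once and prepending that block to every shot prompt, so no shot is generated from a bare description. A minimal sketch; the `Persona` fields, the prompt layout, and the sample character details are invented for illustration and are not CrePal’s prompt system.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)  # frozen: the persona cannot be mutated mid-run
class Persona:
    name: str
    appearance: str
    style: str  # shared visual treatment, e.g. palette and lens


def build_prompts(persona: Persona, shots: List[str]) -> List[str]:
    """Prefix every shot with the same persona and style block."""
    header = (
        f"Character: {persona.name}, {persona.appearance}. "
        f"Style: {persona.style}."
    )
    return [f"{header} Shot {i + 1}: {shot}" for i, shot in enumerate(shots)]


# Hypothetical character sheet for illustration only.
protagonist = Persona(
    name="protagonist",
    appearance="17-year-old girl, short black hair, yellow raincoat",
    style="muted teal palette, rain-streaked glass, 35mm film grain",
)
prompts = build_prompts(protagonist, ["looks up at the ruins", "wipes the window"])
for prompt in prompts:
    print(prompt)
```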

Case study: Veo + Midjourney workflow

Moving from theory to practice, I tested how pairing Veo with Midjourney creates a strong workflow for generative AI video direction, blending high realism with cinematic quality.

The step-by-step generation process

- For a test, I used CrePal to make a 3-second, 16:9 video of futuristic ruins, seen through a rain-splattered semi-basement window as if shot by a 17-year-old girl.

- Veo drove the main video generation, producing smooth shots with lifelike denoising, careful blur effects, and cartoony touches where needed.

- I grabbed key visuals from Midjourney since it excels at detailed portraits and delivers dream-like quality for people’s faces in scenes.

- GPT-Image helped keep scene consistency by merging several reference images, making sure the girl and her setting stayed aligned from frame to frame.

- Complex scenes needing real physics, like running or fighting, went through Hailuo for better motion accuracy.

- The system mostly replaced basic storyboard work. It fell short in only one spot, where model limits kept a flower from emerging the way I intended.

- Each eight seconds of cinematic footage cost about $6 on Veo, so budget is still something to watch during content marketing campaigns using tools like TikTok or Google’s platforms.

- Automatic shot understanding is now good enough for user-friendly workflows, but it still depends on how mature each model is at storytelling.
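The Veo figure above translates into a simple per-second rate. A minimal sketch of the arithmetic, assuming $6 per 8-second clip and ignoring the other models’ costs; it compares two assumed billing rules, strictly proportional to duration versus every started clip billed in full, since actual pricing may differ by plan.

```python
import math
from decimal import Decimal, ROUND_HALF_UP

CLIP_PRICE = Decimal("6.00")  # USD per 8-second clip (figure quoted above)
CLIP_SECONDS = 8              # seconds per clip


def linear_cost(seconds: float) -> Decimal:
    """Cost if billing were strictly proportional to duration."""
    rate = CLIP_PRICE / CLIP_SECONDS  # 0.75 USD per second
    cost = rate * Decimal(str(seconds))
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def per_clip_cost(seconds: float) -> Decimal:
    """Cost if every started 8-second clip is billed in full."""
    clips = math.ceil(seconds / CLIP_SECONDS)
    return CLIP_PRICE * clips


for duration in (3, 8, 30, 60):
    print(f"{duration:>3}s  linear ${linear_cost(duration)}  per-clip ${per_clip_cost(duration)}")
```

At the linear rate, the 3-second test clip works out to $2.25; if each started clip is billed in full, the same shot would cost $6.00. Using `Decimal` avoids floating-point rounding surprises in money amounts.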

Quality and cost trade-offs

The results impressed me. Visual coherence stayed strong across the entire sequence.

Compared to competitors like Runway (which charges $35/month for its Pro plan with 500 credits) or Synthesia (starting at $29/month for 10 video minutes), CrePal’s orchestration approach delivered better results in my tests at a fraction of the per-video cost.

The future of AI-directed video creation

Creativity shifts back to the storyteller.

AI directors like CrePal free me from being just a tool user. I now set goals, not parameters or endless shot lists.

Adobe’s new platforms and OpenAI’s text-to-speech models help streamline each step, turning TikTok clips, web links, or even an idea from an email into video content within minutes.

The shift to orchestration hubs

Soon, end-to-end orchestration hubs will replace single-use tools. The winning systems will collect process data and user memory instead of only chasing higher resolution.

Over 5,000 sign-ups and fast-growing subscriptions within days show real demand for orchestration that puts creative control first, even if live-action edits and melody-following singing still have limits today.

The AI video generator market is expanding at a compound annual growth rate of 20%, with Asia-Pacific accounting for 31.40% of global revenue in 2024, driven by widespread adoption across marketing, education, and entertainment industries.

What separates orchestration from generation

The distinction matters. Single-model generators like Runway’s Gen-3 Alpha or Kling 2.1 Master produce impressive individual clips, but I still need to stitch everything together manually.

Orchestration platforms coordinate the entire pipeline. Script planning flows into asset generation, which connects to editing, then exports in the right format for each platform.

The next wave will integrate more sophisticated memory systems. CrePal already remembers my style preferences across sessions. Future versions will understand my brand voice, automatically applying consistent visual treatments and pacing to every video I create.
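Remembering style preferences across sessions can be as simple as persisting a small profile and layering each request’s settings on top of it. A minimal sketch using a local JSON file; the file name, the keys, the defaults, and the merge rule (request settings win) are assumptions for illustration, not how CrePal stores memory.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict

# Hypothetical defaults used before any preference has been saved.
DEFAULTS: Dict[str, Any] = {"aspect_ratio": "9:16", "pacing": "medium", "palette": "neutral"}


def load_profile(path: Path) -> Dict[str, Any]:
    """Read saved preferences, falling back to defaults on first use."""
    if path.exists():
        return {**DEFAULTS, **json.loads(path.read_text(encoding="utf-8"))}
    return dict(DEFAULTS)


def save_profile(path: Path, profile: Dict[str, Any]) -> None:
    """Persist the profile so the next session starts from it."""
    path.write_text(json.dumps(profile, indent=2), encoding="utf-8")


def settings_for_request(profile: Dict[str, Any], request: Dict[str, Any]) -> Dict[str, Any]:
    """Per-request settings override remembered preferences."""
    return {**profile, **request}


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "style_profile.json"

    # Session 1: the user settles on a palette, and it is saved.
    profile = load_profile(path)
    profile["palette"] = "muted teal"
    save_profile(path, profile)

    # Session 2: the palette is remembered; pacing is overridden for one video only.
    profile = load_profile(path)
    print(settings_for_request(profile, {"pacing": "fast"}))
```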

Model performance keeps improving, too. As Veo, Midjourney, and other engines get better at their specialized tasks, the orchestration layer becomes even more valuable. I benefit from every upgrade without changing my workflow.

FAQs

1. What is an AI Director?

In my experience, an AI Director is software that automates video creation by managing storytelling, suggesting camera angles, and handling text-to-speech, letting me produce content without deep technical skill. Tools like PowerDirector and Detail’s “AI Director” feature use artificial intelligence to analyze clips and turn them into shareable videos.

2. How does text to speech work with AI Directors?

The technology uses neural networks to analyze a script and convert it into lifelike audio. I find that platforms like ElevenLabs or Typecast can even add emotional inflections, which makes the text-to-speech narration for my videos sound much more natural and engaging.

3. Can AI Directors create content for TikTok?

Yes, they are specifically designed for it, and I’ve seen reports that over 50% of short-form video content on TikTok already involves AI. Tools like InVideo AI and FlexClip offer templates and auto-captioning features that are optimized for creating viral TikTok videos quickly.

4. What kind of stories can AI Directors tell?

I’ve seen AI Directors handle a huge range of storytelling, from generating horror narratives reminiscent of Innsmouth to crafting marketing scripts. Because the AI can control plot points and character development, I can use it to create complex interactive stories or simple, engaging narratives for my audience.