Introduction: A New Era for AI Video

I remember when AI video meant distorted, nightmarish clips like the infamous “Will Smith eating spaghetti.” It was a fun gimmick, a curious artifact of a nascent technology, but hardly a creative tool. Fast forward to today, October 2025, and the landscape has been completely redrawn. As someone who tests these tools daily, the leap to models like Google’s Veo 3.1 and OpenAI’s Sora 2 is nothing short of revolutionary.

In this in-depth guide, I’m putting the two titans of AI video generation—Veo 3.1 and Sora 2—head-to-head. We’ll go beyond the hype and dive into real-world tests, technical specs, pricing, and practical workflows. My goal is to give you the clarity you need to decide which platform is the right co-pilot for your creative journey. This isn’t just about which tool makes prettier videos; it’s about two fundamentally different philosophies clashing: Google’s ecosystem-driven, enterprise-focused approach versus OpenAI’s creator-centric, app-first strategy.

The Contenders: What Are Veo 3.1 and Sora 2?

For anyone new to this space, let’s establish a baseline. Both models aim to turn text prompts into high-quality video clips, but their origins and strategies set them on different paths.

Google Veo 3.1, announced around October 15, 2025, is Google’s latest iterative update, building on the solid foundation of Veo 3, which launched in May 2025. It’s not a radical reinvention but a significant refinement. Its philosophy centers on integration, control, and realism. Veo is deeply woven into the Google ecosystem, accessible via the Gemini API for developers, Vertex AI for enterprise, and the Flow editor for prosumers. It’s a tool designed for predictable, high-quality output within a professional workflow.

OpenAI Sora 2, launched on September 30, 2025, feels more like a “quantum leap.” OpenAI frames it as the “GPT-3.5 moment for video.” Its headline features are game-changers: advanced physics simulation, fully synchronized audio-video generation, and a unique distribution model. It launched with a social-style iOS app that includes a “Cameo” feature, allowing users to insert their own likeness into generated videos.

At-a-Glance Identity

| Feature | Google Veo 3.1 | OpenAI Sora 2 |
|---|---|---|
| Core Strategy | Ecosystem Integration & Developer API | Creator-First App & Social Platform |
| Target Audience | Developers, Enterprises, Prosumers | Creators, Social Media Users, General Public |
| Key Differentiator | Granular control via API, integration with Flow editor | “Cameo” feature, social remixing, physics simulation |

Feature Deep Dive: A Head-to-Head Comparison

This is where the rubber meets the road. I’ve analyzed official documentation and run my own tests based on prompts from other public comparisons to see how these models stack up in practice. The results are fascinating and reveal clear strengths for each.

Visual Quality & Realism

In a test prompt for “A woman in Tokyo at night,” both models produced beautiful, cinematic results. However, the differences were telling. Veo 3.1 generated a wider, more immersive shot with incredible detail in the background reflections. Sora 2, on the other hand, produced a tighter crop but more accurately followed the “shallow depth of field” instruction in the prompt. This highlights a core difference: Veo often creates a more “interesting” shot, while Sora can be more literal with prompt adherence.

When it comes to physics, Sora 2 currently has the edge. OpenAI emphasizes its ability to simulate a more physically accurate world; for example, a missed basketball shot will realistically rebound instead of teleporting. My tests confirmed this. In a “superhero landing” prompt, Veo 3.1 struggled with the physics of cracking concrete, whereas Sora 2 (when it doesn’t block the prompt for perceived copyright issues) tends to handle such interactions more plausibly.

Winner (Visuals): Tie. Veo 3.1 wins on cinematic polish and detail, while Sora 2 wins on physics simulation and strict prompt adherence. The “better” model depends entirely on your creative goal.

Audio Generation & Lip-Sync

Both models boast native, synchronized audio generation, a massive step up from the silent clips of the past. However, they are tuned differently. Google highlights Veo 3.1’s “richer audio” and its ability to create naturalistic soundscapes for cinematic outcomes. In a test prompt of “two friends talking,” Veo 3.1’s dialogue was lively and realistic.

Sora 2, in the same test, produced dialogue that sounded somewhat flat, “as if the characters were sleep-talking.” However, Sora 2 excels at tightly synchronized sound effects and is optimized for the kind of clear, direct audio needed for social media content and viral remixes.

Winner (Audio): Veo 3.1. For overall audio richness and natural-sounding dialogue, Veo 3.1 currently feels more polished and production-ready.

Creative Control & Editing Workflow

This is where the platforms’ strategic differences become most apparent. Google has built a powerful suite of tools for creators who want granular control. Veo 3.1, especially within the Flow editor and via its API, offers:

- “Ingredients to Video”: Use up to 3 reference images to guide character, object, or style consistency.

- “Frames to Video”: Provide a start and end frame, and the model generates the transition between them.

- “Extend”: Create longer shots (up to 148 seconds) by seamlessly continuing a clip.
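To make the Extend ceiling concrete, here is a minimal sketch of the arithmetic. It starts from the 8-second single-generation limit listed in the spec table in this guide and assumes each Extend call adds a fixed 7 seconds. That step size and the function name are my assumptions for illustration, chosen because 8 + 20 × 7 lands exactly on the 148-second maximum.

```python
import math

BASE_CLIP_SECONDS = 8     # single-generation limit from the spec table
EXTEND_STEP_SECONDS = 7   # ASSUMPTION: seconds added by each Extend call
MAX_TOTAL_SECONDS = 148   # Extend ceiling quoted in this guide


def extensions_needed(target_seconds: float) -> int:
    """Return how many Extend calls are needed to reach target_seconds."""
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")
    if target_seconds > MAX_TOTAL_SECONDS:
        raise ValueError(f"target exceeds the {MAX_TOTAL_SECONDS}s ceiling")
    if target_seconds <= BASE_CLIP_SECONDS:
        return 0  # the base clip alone is long enough
    remaining = target_seconds - BASE_CLIP_SECONDS
    return math.ceil(remaining / EXTEND_STEP_SECONDS)


print(extensions_needed(30))   # 4
print(extensions_needed(148))  # 20
```

Under these assumptions, a 30-second shot needs four extensions on top of the base clip, which is worth knowing up front because every extension is another billed generation.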

OpenAI’s approach is more focused on novel, user-facing features within its app:

- “Cameos”: The standout feature. After a quick verification, you can insert your own likeness and voice into generated scenes. This was surprisingly easy to use and is a game-changer for personalized content.

- Social Remixing: The app’s design encourages users to take existing creations and remix them with their own prompts or cameos.

- Storyboard Tools: OpenAI has announced that storyboard capabilities are in development for sora.com, allowing for shot-by-shot control.

Winner (Control): Veo 3.1 for developers, Sora 2 for creators. Veo’s API offers unparalleled programmatic control for professional pipelines. Sora’s “Cameo” feature is a revolutionary tool for individual creators and social media.

Technical Specifications at a Glance

| Specification | Google Veo 3.1 | OpenAI Sora 2 | Source |
|---|---|---|---|
| Max Resolution | 1080p | Up to 1080p (Pro tier) | Google Cloud, Skywork.ai |
| Max Duration | 8s (single gen), up to 148s (with Extend) | ~20 seconds | VentureBeat, OpenAI Help |
| Aspect Ratios | 16:9, 9:16 | 16:9, 9:16, 1:1 | Google AI Studio, Skywork.ai |
| Frame Rate | 24 FPS | Not specified (likely 24/30 FPS) | VentureBeat |
| Native Audio | Yes (dialogue, SFX, ambient) | Yes (dialogue, SFX, ambient) | Google, OpenAI |

[Figure: Capability Radar Chart]

Access, Pricing & Ecosystem: How to Get Started and What It Costs

A tool’s power is irrelevant if you can’t access it or afford it. Here, the two companies couldn’t be more different.

Google Veo 3.1 is primarily a B2B and prosumer play. You can access it through:

- Google Cloud (Vertex AI) & Gemini API: The main route for developers and enterprises, offering programmatic access with per-second billing.

- Google AI Studio: A web-based interface for testing the model.

- Flow: Google’s experimental AI filmmaking tool, which integrates Veo 3.1’s advanced features.

OpenAI Sora 2 has a consumer-first rollout strategy:

- Sora iOS App: The primary entry point, currently invite-only in the U.S. and Canada.

- sora.com (Web Access): ChatGPT Pro subscribers get access to a higher-quality “Sora 2 Pro” model via the web.

- API Access: An API for developers is promised “in the coming weeks” and is available in preview through partners like Microsoft Azure.

Detailed Pricing Comparison

| Platform | Model/Tier | Cost | Billing Unit | Target User |
|---|---|---|---|---|
| Google Cloud | Veo 3.1 (Video + Audio) | $0.40 | Per Second | Developer/Enterprise |
| Google Cloud | Veo 3.1 Fast (Video + Audio) | $0.15 | Per Second | Developer/Enterprise |
| OpenAI | Sora 2 (Standard) | Free (with limits) | N/A (Invite-only) | Casual User/Creator |
| OpenAI | Sora 2 Pro | Included in ChatGPT Pro ($200/mo) | Monthly Subscription | Prosumer/Advanced Creator |

Pricing data as of October 2025. Sources: Google Cloud Pricing, Skywork.ai.
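Per-second billing is easier to reason about with a quick calculation. The sketch below uses only the two Veo rates from the table above. The dictionary keys and the `estimate_cost` helper are hypothetical names I made up for this example, not official model identifiers or part of any Google SDK.

```python
# Per-second rates in USD, copied from the pricing table above.
# The keys are illustrative labels, not official model IDs.
RATES_PER_SECOND = {
    "veo-3.1": 0.40,
    "veo-3.1-fast": 0.15,
}


def estimate_cost(tier: str, seconds: float, clips: int = 1) -> float:
    """Estimate the cost in USD of generating `clips` videos of `seconds` each."""
    if tier not in RATES_PER_SECOND:
        raise KeyError(f"unknown tier: {tier}")
    if seconds < 0 or clips < 0:
        raise ValueError("seconds and clips must be non-negative")
    return round(RATES_PER_SECOND[tier] * seconds * clips, 2)


# One 8-second clip on each tier.
print(estimate_cost("veo-3.1", 8))       # 3.2
print(estimate_cost("veo-3.1-fast", 8))  # 1.2
```

At these rates a single 8-second clip costs a few dollars at most, but iteration adds up quickly: ten drafts on the standard tier come to about $32, which is a good argument for drafting on the Fast tier first.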

Which Path Should You Choose?

Are you a Developer or Enterprise?

You need API access and predictable costs.

⬇️

Choose Google Veo 3.1 via Gemini API or Vertex AI for robust integration and per-second billing. Consider the upcoming Sora 2 API for comparison.

Are you a Social Media Creator or Artist?

You want ease of use, novel features, and low entry cost.

⬇️

Choose OpenAI Sora 2 via the iOS app (if you have an invite) or the ChatGPT Pro subscription for access to Sora 2 Pro and the “Cameo” feature.

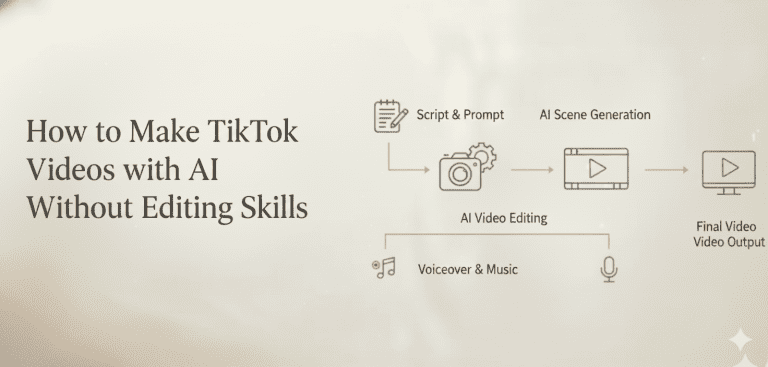

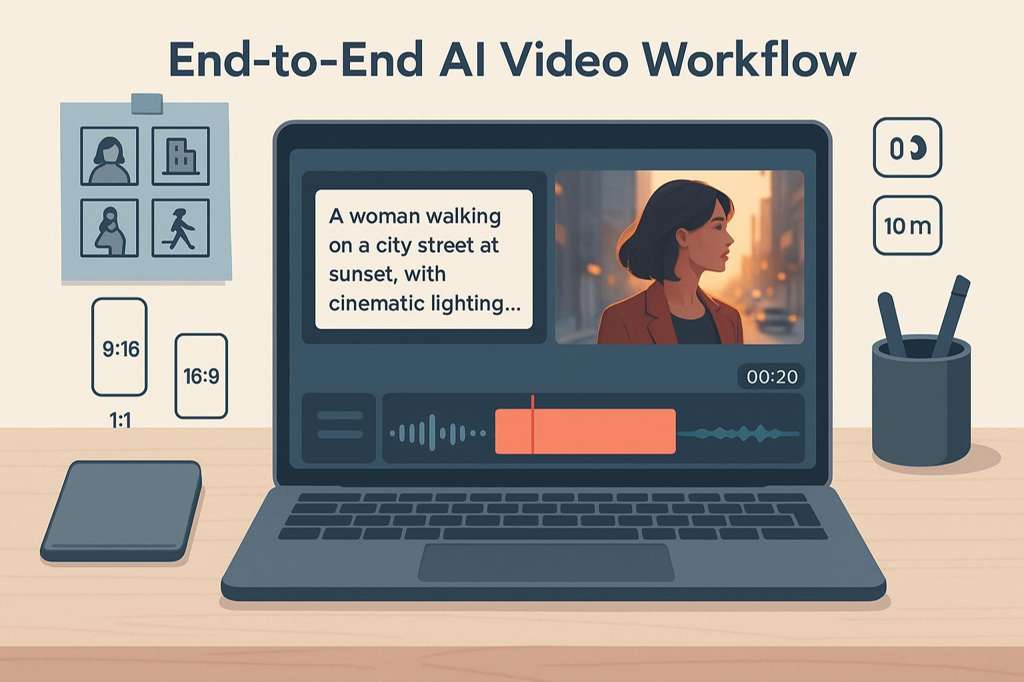

The Creator’s Workflow: From Prompt to Polished Video

Knowing the features is one thing; knowing how to use them is another. Both companies have published guidance that reveals their underlying philosophies on human-AI collaboration.

Google’s “Ultimate Prompting Guide for Veo 3.1” advocates for a highly structured, directorial approach. It proposes a five-part formula to maximize control: [Cinematography] + [Subject] + [Action] + [Context] + [Style & Ambiance]. This method treats the AI as a crew that needs precise instructions.
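If you generate many shots, it can help to treat the five-part formula as a template rather than retyping it each time. The following is a minimal sketch of that idea. The `ShotPrompt` class and the sample values are my own invention for illustration and do not come from Google’s guide or any SDK.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """One shot described with the five-part formula."""
    cinematography: str
    subject: str
    action: str
    context: str
    style: str

    def render(self) -> str:
        """Join the five parts, in formula order, into one prompt string."""
        parts = [self.cinematography, self.subject, self.action,
                 self.context, self.style]
        # Trim whitespace and trailing punctuation so the parts join cleanly.
        cleaned = [p.strip().rstrip(",.") for p in parts]
        if any(not p for p in cleaned):
            raise ValueError("all five parts of the formula must be filled in")
        return ", ".join(cleaned) + "."


# Sample values are invented for illustration only.
shot = ShotPrompt(
    cinematography="Medium shot, slow dolly in",
    subject="a tired office worker",
    action="rubbing their temples",
    context="in a cluttered 1980s office at night",
    style="warm tungsten lighting, subtle film grain",
)
print(shot.render())
```

Keeping the parts separate makes it easy to hold the subject and style constant while varying only the cinematography between shots, which is the kind of consistency this approach is aiming for.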

OpenAI’s Cookbook suggests a more fluid, collaborative process. It encourages users to treat prompts as a “creative wish list” rather than a contract. Leaving some details open can lead to “surprising variations and unexpected, beautiful interpretations.” The key is iteration.

In my tests, I found both philosophies hold true. Veo 3.1 responds best to highly specific cinematic language (“medium shot, 35mm lens, slow dolly in”). Sora 2 often surprises with more creative interpretations when given more freedom (“a lonely robot watching the rain”).

A Universal 5-Step Workflow

Regardless of the platform, I’ve found this iterative loop yields the best results:

Prompting Philosophies Compared

| Aspect | Google Veo 3.1 (The Director) | OpenAI Sora 2 (The Collaborator) |
|---|---|---|
| Core Idea | Provide a structured, five-part formula for precise control. | Treat the prompt as a “creative wish list” and embrace iteration. |
| Best For | Achieving a specific cinematic vision, consistency across shots. | Creative exploration, discovering unexpected results. |
| Example | “Medium shot, a tired worker, rubbing temples, in a 1980s office…” | “A stylish woman walks down a Tokyo street filled with warm glowing neon...” |

The Bigger Picture: Market Trends and the Future of AI Video

The battle between Veo and Sora isn’t happening in a vacuum. It’s the leading edge of a market that is exploding in size and significance. This technology is rapidly moving from a niche tool for early adopters to a foundational element of content creation across all industries.

Market research quantifies this explosive growth. Reports indicate the AI video generator market, valued at around $614.8 million in 2024, is projected to skyrocket to over $2.56 billion by 2032, implying a compound annual growth rate (CAGR) of over 19% (Fortune Business Insights). This isn’t just hype; it’s a fundamental shift in how visual media is produced.
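As a quick sanity check on that growth rate, the standard CAGR formula can be applied to the two figures quoted above. This is a minimal sketch. It uses $2.56 billion as the end value, so the result is a lower bound on what the report’s “over $2.56 billion” would give.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market size in millions of USD, 2024 -> 2032 (8 years).
rate = cagr(614.8, 2560.0, 2032 - 2024)
print(f"{rate:.1%}")  # 19.5%
```

The result is consistent with the “over 19%” figure, which means the market would roughly quadruple over eight years.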

[Figure: AI Video Market Growth (2024-2032)]

Looking ahead, the key trends point towards even more sophisticated capabilities. We’re moving beyond simple text-to-video and into an era of true AI-assisted filmmaking. The road ahead for Veo and Sora will likely involve:

- Hyper-Realism and Narrative Coherence: Moving from short, disconnected clips to generating full, logical scenes with consistent characters and environments.

- Real-Time Generation & Interactivity: The ability to edit and direct generated video in real-time using text, voice, or even gestures.

- World Simulation: The ultimate goal for models like Sora is not just to create videos, but to simulate entire interactive worlds. Imagine generating a video and then being able to explore the scene from any angle.

[Figure: Evolution of AI Video Capabilities]

The Human Element: Ethics, Opportunities, and Your Creative Future

With great power comes great responsibility. The rapid advancement of AI video generation forces us to confront significant ethical challenges head-on. It’s crucial to approach these tools with awareness and a strong ethical framework.

The Ethical Tightrope

The most pressing concerns revolve around authenticity and intellectual property. The ability to create photorealistic videos of people and copyrighted characters opens a Pandora’s box of potential misuse.

- Copyright Infringement: Shortly after its launch, the Sora 2 app was flooded with videos featuring characters from popular shows, raising immediate legal questions. OpenAI is already facing lawsuits from entities like The New York Times over the data used to train its models.

- Deepfakes and Misinformation: The potential to create convincing but fake videos of public figures or events poses a serious threat to social trust and political stability.

- Job Displacement: Many creative professionals, from illustrators to video producers, are understandably concerned about the economic impact of tools that can generate high-quality visuals in seconds.

Both companies are implementing safeguards. Google uses robust safety filters and marks all generated content with its SynthID digital watermark. OpenAI uses C2PA metadata, visible watermarks, and a verification system for its “Cameo” feature to ensure consent.

Safety & Responsibility Features

| Safety Feature | Google Veo 3.1 | OpenAI Sora 2 |
|---|---|---|
| Content Moderation | Safety filters for harmful categories (violence, sexual, etc.). | Multimodal classifiers for input and output content. |
| Provenance | SynthID digital watermarking. | C2PA metadata and visible watermarks. |
| Likeness Control | “personGeneration” API parameter to allow/disallow people. | “Cameo” verification and user-controlled permissions. |

A New Canvas for Creativity

Despite the risks, the opportunities are immense. These tools are not just replacing old workflows; they are enabling entirely new forms of creativity and democratizing access to high-quality video production.

Top Use Cases by Industry

| Industry | Application | Example |
|---|---|---|
| Marketing | Hyper-Personalized Ads | Generate thousands of ad variations tailored to different audience segments and channels. |
| Education | Dynamic Learning Content | Create personalized tutorial videos or historical simulations that adapt to a student’s learning pace. |
| Filmmaking | Pre-visualization & VFX | Rapidly prototype scenes, create storyboards, or generate complex visual effects at a fraction of the cost. |
| Corporate | Scalable Training Videos | Produce consistent, high-quality training materials in multiple languages without expensive shoots. |

Ultimately, these tools don’t replace creativity; they amplify it. They handle the technical heavy lifting, freeing up human creators to focus on the core of their work: storytelling, emotion, and ideas.

Final Verdict and Frequently Asked Questions (FAQ)

So, after all the analysis, which tool should you choose? There’s no single winner, only the right tool for the right job.

My Final Verdict

After extensive testing and analysis, my verdict is this:

Choose Google Veo 3.1 if you are a developer, enterprise, or filmmaker who needs granular API control, seamless integration with Google’s cloud ecosystem, and a focus on production-grade cinematic polish. Its predictable pricing and robust toolset make it ideal for professional, scalable workflows.

Choose OpenAI Sora 2 if you are a creator, artist, or social media user who prioritizes cutting-edge physics, character-driven stories, and the intuitive, viral nature of a mobile-first app. Its “Cameo” feature is a unique and powerful tool for personal expression, and its pricing model is more accessible for individuals.

Pros & Cons Summary

| Model | Pros | Cons |
|---|---|---|
| Google Veo 3.1 | Excellent cinematic quality and lighting; powerful API and editing controls (Extend, Ingredients); predictable, usage-based pricing; deep integration with Google’s ecosystem | Physics can be less convincing than Sora 2; no free tier for experimentation; less focused on novel consumer features |
| OpenAI Sora 2 | Superior physics simulation and realism; revolutionary “Cameo” feature for personalization; accessible via free app and ChatGPT Pro; strong for creative, viral social content | Audio quality can be flat in some cases; strict copyright enforcement can block prompts; access is limited (invite-only app); future pricing is uncertain |

Frequently Asked Questions

What’s the main difference between Veo 3.1 and Sora 2?

The core difference is strategy. Veo 3.1 is an enterprise- and developer-focused tool integrated into Google’s ecosystem, prioritizing control and cinematic quality. Sora 2 is a creator- and consumer-focused platform built around a social app, prioritizing novel features like “Cameos” and physics simulation.

Which is better for beginners?

Sora 2’s mobile app is likely more accessible for beginners. Its intuitive interface and social features lower the barrier to entry. Veo 3.1, accessed primarily through APIs and professional tools like Flow, has a steeper learning curve but offers more control once mastered.

How much do Veo 3.1 and Sora 2 cost?

Veo 3.1 uses a pay-per-second model: $0.15 per second for the “Fast” model with audio and $0.40 per second for the standard model with audio. Sora 2 is currently available for free (with limits) via an invite-only app, and a higher-quality “Pro” version is included in the $200/month ChatGPT Pro subscription.

Can I use them for free?

Yes, you can use Sora 2 for free if you get an invite to the iOS app. Veo 3.1 does not have a dedicated free tier, but you may be able to access it through free trials of Google products like Google AI Studio or Flow.

What are the biggest ethical risks of using AI video generators?

The two primary risks are copyright infringement (creating videos of protected characters or styles) and the creation of convincing deepfakes for misinformation or malicious purposes. Users must be responsible and adhere to platform policies and local laws.