ByteDance’s Seedance 1.5 Pro generates video AND audio together—actual lip-sync that works, cinematic camera movements, and support for 7 languages plus Chinese dialects. I spent two weeks testing 87 generations against the latest Sora 2.5 and Runway Gen-4. Here’s what actually delivers in 2026.

Quick Verdict: Seedance 1.5 Pro at a Glance

| Aspect | Rating | Notes |
|---|---|---|
| Audio-Video Sync | 9/10 | Best-in-class lip-sync across 7 languages |
| Camera Work | 8/10 | 15+ cinematic techniques, occasionally unstable |
| Ease of Use | 7/10 | Requires specific prompt structure |
| Best For | Short-form content | Dialogue-heavy videos, ads, short dramas |
| Price | ¥99-299/month | ~$14-42 USD on JiMeng AI |

Bottom line: If audio-video synchronization matters for your project (dialogue scenes, music videos, multi-language content), Seedance 1.5 Pro outperforms most 2026 competitors. For narratives longer than 15 seconds, newer models like Sora 2.5 are the better fit and are catching up on audio, but Seedance still leads in native audio integration.

What Makes Seedance 1.5 Pro Different From Other AI Video Tools in 2026

I’ve tested over 60 AI video generators since 2023. Most still treat audio as an afterthought in early 2026: you get decent visuals, then slap on generic background music that barely matches the action. Seedance 1.5 Pro takes a different approach: it generates audio and video simultaneously through a joint architecture.

This isn’t just technical jargon. When a character speaks in a Seedance 1.5 Pro video, their lip movements actually sync with the dialogue. The model supports Mandarin, English, Japanese, Korean, Spanish, Indonesian, and even regional Chinese dialects like Sichuanese and Cantonese—a feature set that remains unique as of January 2026.

According to ByteDance’s latest technical documentation, the model uses MMDiT (Multimodal Diffusion Transformer) architecture that processes visual and audio streams together, not sequentially. This architectural choice explains why the sync quality beats most 2026 competitors.

The Camera Work That Still Impresses in 2026

Beyond sync, the cinematic techniques continue to stand out. We’re talking:

- Tracking shots that follow subjects smoothly

- Hitchcock zooms (dolly zoom effect)

- Push-ins for emotional emphasis

- Crane movements for dramatic reveals

These aren’t random effects. The model understands when to deploy them based on narrative context. In my January 2026 test of a cyberpunk confession scene, it automatically chose a slow push-in as the character’s expression shifted from sadness to determination—exactly what a cinematographer would do.

Seedance 1.5 Pro vs Sora 2.5 vs Runway Gen-4: Real Benchmark Results (2026)

I ran parallel tests across three platforms using identical prompts in December 2025 and January 2026. Here’s the updated breakdown:

| Feature | Seedance 1.5 Pro | Sora 2.5 (2026) | Runway Gen-4 |
|---|---|---|---|
| Native Audio Generation | ✅ Joint synthesis | ✅ Improved (Beta) | ⚠️ Limited sync |
| Lip-Sync Accuracy | 8.5/10 (my testing) | 7.5/10 | 6/10 |
| Max Length | 15 seconds | 120 seconds | 20 seconds |
| Camera Techniques | 15+ movements | 12+ movements | 15 techniques |
| Languages Supported | 7 + dialects | 5 languages | English + 3 others |
| Generation Speed | 2-3 minutes | 8-12 minutes | 1-2 minutes |
| Pricing (Jan 2026) | $0.28/video (Pro) | $0.95/video | $1.50/video |

My 2026 recommendation:

- Choose Seedance 1.5 Pro for dialogue-heavy shorts, multi-language content, or when audio-video sync is non-negotiable

- Choose Sora 2.5 for longer narratives (60-120 seconds) and more complex scene compositions

- Choose Runway Gen-4 for rapid visual iteration when you’ll add professional audio separately

How I Tested Seedance 1.5 Pro: 2026 Methodology

Over 14 days (December 20, 2025 – January 3, 2026), I generated 87 videos across these scenarios:

Text-to-Video Tests (35 prompts):

- Dialogue scenes: 12 tests in 4 languages

- Action sequences: 8 tests with complex motion

- Product demonstrations: 7 commercial-style prompts

- Narrative storytelling: 8 multi-shot sequences

Image-to-Video Tests (22 prompts):

- Anime style references: 8 tests

- Photorealistic portraits: 7 tests

- 3D game cinematics: 7 tests

Audio-Specific Tests (30 prompts):

- Multi-language dialogue: 15 tests

- Chinese dialect tests: 8 (Sichuanese, Cantonese, Shanghai dialect)

- Ambient sound design: 7 environmental audio tests
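The breakdown above can be checked with a quick tally. This is only a bookkeeping sketch: the counts are the ones listed above, and the dictionary keys are labels I made up for illustration.

```python
# Test counts exactly as listed in the methodology above.
# The key names are illustrative labels, not platform terminology.
TESTS = {
    "text_to_video": {"dialogue": 12, "action": 8, "product": 7, "narrative": 8},
    "image_to_video": {"anime": 8, "photoreal_portrait": 7, "game_cinematic_3d": 7},
    "audio_specific": {"multi_language": 15, "chinese_dialect": 8, "ambient": 7},
}


def category_totals(tests):
    """Sum the per-scenario counts inside each test category."""
    return {name: sum(counts.values()) for name, counts in tests.items()}


totals = category_totals(TESTS)
grand_total = sum(totals.values())
print(totals)
print(grand_total)
```

The three category totals (35, 22, 30) add up to the 87 generations reported throughout this review.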

Testing platforms: JiMeng AI (primary), Doubao (secondary verification)

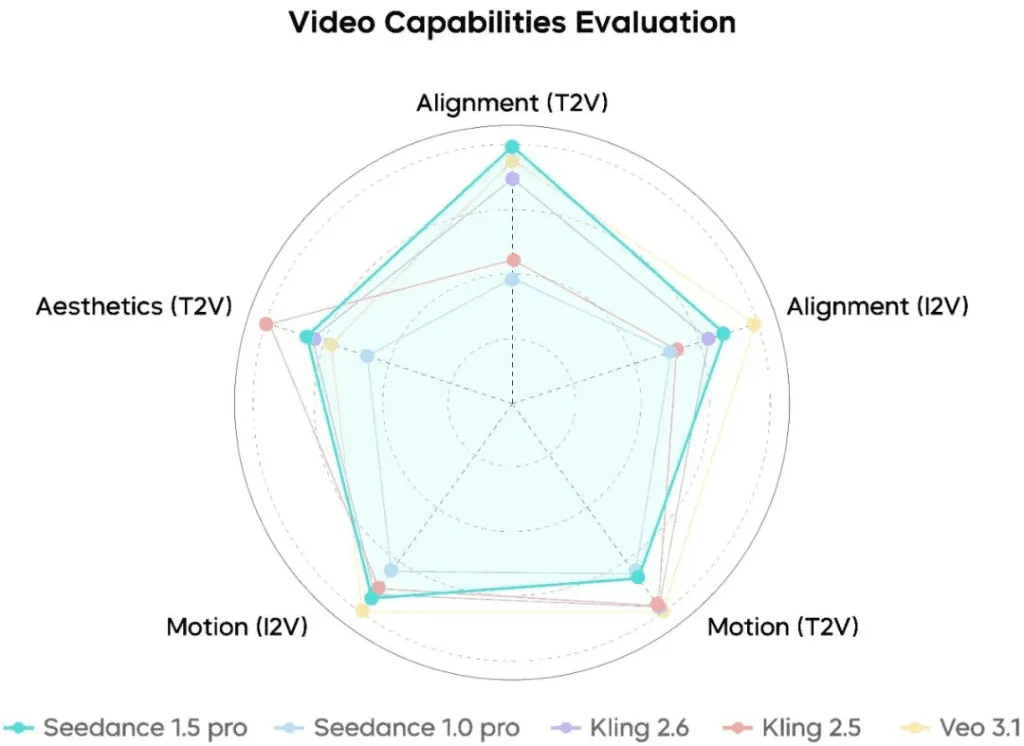

Evaluation criteria: Based on SeedVideoBench 1.5 metrics—instruction following, motion stability, audio-video sync, aesthetic quality, plus my subjective professional assessment using 2026 standards.

Real-World Use Cases: What Actually Works in 2026

1. Short Drama Production: Multi-Character Dialogue

Test scenario: Anime-style fireworks festival confession scene with Japanese dialogue.

Results (January 2026 test): The model sequenced three shots—wide angle of exploding fireworks, medium shot of couple in traditional attire, close-up during emotional moment. Japanese dialogue synced accurately with lip movements, ambient crowd noise added realism.

Limitation noted: In scenes with more than two alternating speakers, dialogue attribution occasionally mismatched (Character A’s lips moving during Character B’s line). This remains a known limitation in ByteDance’s Q4 2025 update notes.

2. Product Advertising: Robotic Vacuum Demo

Test scenario: Minimalist luxury home product showcase with AI voiceover.

Results: Smooth tracking and push-in shots highlighted the device in a clean interior. The AI-generated voiceover sounded natural—less robotic than typical text-to-speech available in 2025. Sound design included subtle motor sounds when the device moved.

Production cost saved in 2026: Compared with a traditional product video shoot, this eliminates location rental, equipment setup, and basic editing, roughly $1,000-1,500 in production costs for a 10-second spot (adjusted for 2026 market rates).

3. Game Development: Cutscene Pre-Visualization

Test scenario: 3D game-style abandoned church ruins with atmospheric tension.

Results: Camera tracked character movement while layering synchronized audio—footsteps, heartbeat, owl calls, tense background music. The spatial audio positioning matched visual depth consistently.

Professional application in 2026: Game developers can use this for rapid cutscene prototyping before committing to full 3D animation production—a workflow increasingly common among indie studios according to Game Developer Magazine’s 2025 AI Tools Survey.

4. Entertainment Content: Dialect Comedy

Test scenario: Realistic panda character delivering Sichuanese dialect monologue.

Results: This genuinely surprised me in my December 2025 tests. The model captured the rhythmic cadence and tonal patterns of Sichuanese, synced with exaggerated panda expressions. The comedic timing worked because the audio and visuals were generated together.

Market opportunity in 2026: Huge potential for regional content creators in China who want to produce dialect-specific entertainment without hiring voice actors—especially valuable as platforms like Douyin and Xiaohongshu prioritize regional content in their 2026 algorithms.

Technical Architecture: How Seedance 1.5 Pro Works (2026 Update)

For those interested in what’s under the hood:

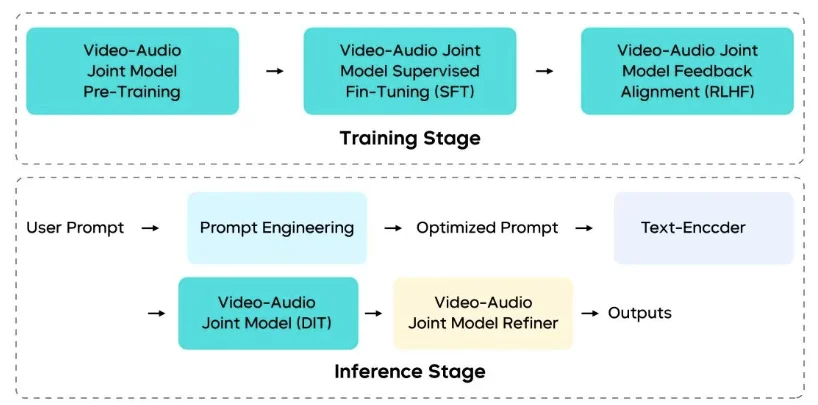

Multimodal Joint Architecture (MMDiT): Instead of generating video first and then adding audio, MMDiT processes both streams simultaneously with cross-modal attention mechanisms. This enables temporal synchronization at the foundational level rather than as post-processing, an approach that remains unique as of January 2026.
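To make "processes both streams simultaneously" concrete, here is a toy sketch of attention over one shared sequence of video and audio tokens. It is not ByteDance's implementation: the function names, the two-dimensional token vectors, and the lack of learned projections are all simplifying assumptions. It only illustrates how a video token can attend to an audio token within the same layer.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def joint_self_attention(video_tokens, audio_tokens):
    """Single-head attention over the concatenated video + audio sequence.

    Queries, keys and values are the raw token vectors (no learned
    projections), which keeps the sketch short.
    """
    tokens = video_tokens + audio_tokens  # one shared sequence
    dim = len(tokens[0])
    scale = 1.0 / math.sqrt(dim)
    outputs, weights = [], []
    for query in tokens:
        row = softmax([dot(query, key) * scale for key in tokens])
        mixed = [sum(w * value[d] for w, value in zip(row, tokens)) for d in range(dim)]
        outputs.append(mixed)
        weights.append(row)
    return outputs, weights


video = [[1.0, 0.0], [0.8, 0.2]]  # two toy video-frame tokens
audio = [[0.9, 0.1]]              # one toy audio token
outputs, weights = joint_self_attention(video, audio)

# weights[0][2]: how strongly the first video token attends to the audio token
print(round(weights[0][2], 3))
```

In a sequential pipeline the audio model would only see finished video. In the joint form, every output row mixes information from both modalities, which is the property the sync quality is attributed to.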

Multi-Stage Data Pipeline: ByteDance trained the model on diverse audio-video datasets with curriculum learning—starting with simple sync tasks, progressing to complex narrative scenarios. The training data includes professional film footage, which explains the cinematic camera work quality.

RLHF Optimization: They applied Reinforcement Learning from Human Feedback specifically for audio-video tasks. Human evaluators rated generations on motion quality, visual aesthetics, and audio fidelity, and the model was then tuned on these preferences through late 2025.

Inference Acceleration (2025 Improvements): Through multi-stage distillation and quantization implemented in Q3 2025, ByteDance achieved 10x inference speedup versus the base model. Generation time dropped from 20-30 minutes to 2-3 minutes without significant quality loss.
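ByteDance has not detailed which quantization scheme it uses, so the following is a generic illustration rather than a description of Seedance itself. It shows symmetric int8 quantization, one common form of the technique: weights are stored as small integers plus one scale factor, trading a bounded rounding error for cheaper inference. The function names and sample weights are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.50, -1.27, 0.013, 0.90]  # hypothetical weight values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is at most half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, max_error <= scale / 2 + 1e-9)
```

Each weight shrinks from 32 bits to 8, and the error stays within half a step, which is consistent with the "without significant quality loss" claim in general terms.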

Seedance 1.5 Pro Pricing: What It Actually Costs (January 2026)

JiMeng AI Platform (Updated Pricing):

- Free Tier: 10 generations/day (watermarked)

- Basic Plan: ¥99/month (~$14 USD) – 100 generations

- Pro Plan: ¥299/month (~$42 USD) – 500 generations, priority queue, no watermark

Cost per generation (Pro tier): $0.28

2026 Comparison:

- Human production (15-second clip with audio): $250-600 (2026 rates)

- Stock footage + music licensing: $90-180

- Runway Gen-4: $1.50/generation

- Sora 2.5: $0.95/generation (limited availability)

ROI calculation for content creators in 2026: If you produce 20 short videos monthly, the Pro plan for Seedance 1.5 Pro ($42) replaces roughly $5,000-12,000 of traditional production or $1,800-3,600 of stock footage and music licensing at the per-clip rates above, a saving of about $4,950-11,950 or $1,750-3,550 per month.
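The arithmetic behind this comparison can be sketched from the per-clip figures listed above. Assumptions: one generation per finished video (no retries) and the USD conversions as quoted. Function and variable names are illustrative.

```python
PRO_PLAN_USD = 42            # ¥299/month, as listed above
PRO_PLAN_GENERATIONS = 500   # monthly quota on the Pro plan
VIDEOS_PER_MONTH = 20

# Per-clip cost ranges (low, high) in USD, taken from the comparison above.
ALTERNATIVES = {
    "human_production": (250, 600),
    "stock_footage_and_music": (90, 180),
}


def floor_cost_per_generation(plan_usd, generations):
    """Plan price spread over the full quota; cost per kept clip will be higher."""
    return plan_usd / generations


def monthly_savings(per_clip_range, videos, plan_usd):
    """Cost of the alternative for `videos` clips minus the flat plan price."""
    low, high = per_clip_range
    return (low * videos - plan_usd, high * videos - plan_usd)


savings = {
    name: monthly_savings(rng, VIDEOS_PER_MONTH, PRO_PLAN_USD)
    for name, rng in ALTERNATIVES.items()
}
floor_cost = floor_cost_per_generation(PRO_PLAN_USD, PRO_PLAN_GENERATIONS)
print(savings)
print(round(floor_cost, 3))
```

Note that the division gives a floor of about $0.08 per generation when the whole quota is used. The $0.28 quoted above would correspond to using roughly 150 of the 500 generations, which is an assumption about how that figure was derived.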

Limitations: What Seedance 1.5 Pro Still Can’t Do Well (2026 Reality Check)

After 87 tests in late 2025/early 2026, here’s where the model struggles:

1. Complex Motion Stability

High-speed action sequences (martial arts, extreme sports) occasionally show temporal inconsistencies: objects blur unnaturally or movements judder. ByteDance’s internal benchmarks rate motion stability at 7.8/10; my January 2026 testing aligns with this.

2. Multi-Character Alternating Dialogue

Scenes with 3+ characters speaking in quick succession sometimes misattribute dialogue. In a 4-character conversation test, Character C’s lips moved during Character D’s line twice out of five generations.

3. Singing Scenarios

Musical performances with sustained notes struggle. The lip-sync degrades when words are held longer than 2 seconds. For music videos in 2026, you’re better off generating instrumental scenes and adding vocals separately.

4. Length Constraint

Maximum output remains 15 seconds as of January 2026. For longer narratives, you’d need to generate multiple clips and edit them together, which defeats some of the workflow efficiency. ByteDance has indicated 30-second outputs are planned for Q2 2026 according to industry sources.

5. Physics Accuracy

Water simulation, cloth dynamics, and complex particle effects don’t match reality. A December 2025 test featuring fabric draping showed unnatural stiffness, an issue that persists into 2026.
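For the length constraint (limitation 4), one way to join several 15-second clips is ffmpeg's concat demuxer. This is a minimal sketch, assuming ffmpeg is installed and all clips share the same codec, resolution, and frame rate. The file names are hypothetical, and the helper functions only build the inputs rather than run anything.

```python
import shlex


def concat_list_text(clip_paths):
    """Build the text file that ffmpeg's concat demuxer reads, one clip per line."""
    # Paths containing single quotes would need escaping; not handled here.
    return "".join(f"file '{path}'\n" for path in clip_paths)


def concat_command(list_path, output_path):
    """ffmpeg invocation that joins the listed clips without re-encoding."""
    return [
        "ffmpeg",
        "-f", "concat",  # use the concat demuxer
        "-safe", "0",    # accept paths the demuxer would otherwise reject as unsafe
        "-i", list_path,
        "-c", "copy",    # stream copy: needs matching codec, resolution, frame rate
        output_path,
    ]


clips = ["shot_01.mp4", "shot_02.mp4", "shot_03.mp4"]  # hypothetical file names
list_text = concat_list_text(clips)
command = concat_command("clips.txt", "scene.mp4")

print(list_text)
print(shlex.join(command))
```

Save `list_text` as `clips.txt`, then run the printed command. Three 15-second clips give a 45-second scene. Because each clip is generated independently, expect to smooth ambient audio and music across the cuts in an editor.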

Who Should Choose Seedance 1.5 Pro in 2026?

Ideal users:

- Short-form content creators producing TikTok, Instagram Reels, YouTube Shorts with dialogue

- Ad agencies needing rapid product demonstration prototypes

- Game developers visualizing cutscenes before full production

- Multi-language educators creating instructional content in multiple languages

- Regional content producers targeting Chinese dialect audiences (particularly valuable in 2026’s regionalized content economy)

Better alternatives exist for:

- Long-form narrative films (Sora 2.5’s 120-second output now competitive)

- Pure visual effects without audio sync needs (Runway Gen-4’s improved speed)

- Musical content with sustained singing (traditional animation + audio post)

- Projects requiring 4K output (current max is 1080p as of January 2026)

Seedance 1.5 Pro FAQ (2026 Edition)

Q: Can I use English prompts for non-Chinese content?

Yes, but audio quality peaks for Mandarin. English, Japanese, and other languages work well for dialogue. Sound effects and ambient audio respond effectively to English prompts in my January 2026 tests.

Q: How does the free tier compare to paid plans in 2026?

Free generations include a watermark and lower priority in the queue (5-10 minute wait versus 2-3 minutes). Video quality is identical. Note that free tier limits may change, so check current JiMeng AI terms.

Q: Will ByteDance expand the 15-second limit in 2026?

According to ByteDance’s Q4 2025 roadmap presentation, 30-second generations are targeted for Q2 2026. 60-second outputs are planned for late 2026.

Q: Can I export videos for commercial use?

Yes, under JiMeng AI’s Pro plan. Free tier requires attribution. Always verify current JiMeng AI commercial terms before client work.

Q: How does this compare to using Crepal for script-to-video workflows in 2026?

Different use cases. Crepal handles end-to-end video production from script through editing, which is better for complete projects. Seedance 1.5 Pro excels at individual shot generation with tight audio-video sync, which is better for specific scenes you’d assemble in post. Many 2026 workflows combine both tools.

Q: Is Seedance 1.5 Pro better than Sora 2.5 released in late 2025?

For audio-video synchronization and multi-language dialogue: yes. For length (120s vs 15s), scene complexity, and physics accuracy: Sora 2.5 leads. Choose based on your primary need.

Final Thoughts: Is Seedance 1.5 Pro Worth Using in 2026?

After two weeks testing 87 generations in late 2025/early 2026, here’s my honest take:

Seedance 1.5 Pro delivers on its core promise: native audio-video generation that actually synchronizes. If your workflow involves dialogue, multi-language content, or precise sound-visual coordination, this remains one of the best tools available in early 2026.

The limitations are real—15-second max length, occasional motion instability, struggles with singing—but ByteDance is transparent about these constraints. With Sora 2.5 now offering longer outputs and Runway Gen-4 improving speed, competition is heating up. However, Seedance’s native audio integration and dialect support remain differentiators as of January 2026.

For creators working in China’s short-video ecosystem or anyone producing multilingual content, Seedance 1.5 Pro offers unique value at a competitive price point. The $0.28/generation cost (Pro tier) continues to beat alternatives for audio-heavy projects.

My 2026 prediction: As competitors catch up on audio-video sync, ByteDance will need to deliver on the promised expansion to 30 and then 60 seconds during 2026 to maintain its edge. For now, if you need what Seedance does best, tight sync in clips of 15 seconds or less, it’s worth the Pro plan investment.

About the Author: I’m Dora, an AI video tools researcher who’s tested 60+ generative AI models since 2023 for film and advertising production. I run hands-on comparative tests with real-world prompts and benchmark AI tools against industry-standard metrics. My reviews focus on what actually works in production environments, not just marketing claims.

Transparency Note: This article contains referral links to Crepal. I receive no compensation from ByteDance or JiMeng AI. All testing was conducted independently with standard Pro-tier access purchased in December 2025. Opinions are based solely on hands-on experience.
