How to Translate One Video into Multiple Languages with AI

Meta Description: Need multilingual video content fast? Learn how to translate one video into multiple languages with AI, while preserving clarity, timing, and audience fit.

You spent weeks scripting, shooting, and editing a product launch video. It performs brilliantly with your English-speaking audience. Now your team wants the same video in Spanish, French, Japanese, Portuguese, and Arabic — by next Friday.

A few years ago, that request would have meant hiring five translation agencies, five voiceover artists, and one very patient video editor. Today, AI handles the heavy lifting in a fraction of the time and cost.

But “translating a video” is more nuanced than running text through Google Translate. Language changes sentence length. Sentence length changes timing. Timing changes lip movement. Lip movement changes believability. Get any of these wrong, and your multilingual video feels off — even if every word is technically correct.

This guide walks you through exactly how to translate one video into multiple languages with AI — the right way. You’ll learn the difference between translation and localization, how to choose between subtitles, voiceover, and lip sync, how to manage timing shifts across languages, and how to quality-check the final output before it reaches a global audience.

Translation vs. Localization: Why the Distinction Matters

Most people use “translation” and “localization” interchangeably. In video production, confusing the two is where quality breaks down.

Video Translation

Video translation converts spoken or written words from one language to another. The goal is linguistic accuracy. You take the English script, translate it into German, and overlay the German text or audio onto the original video.

The result is technically correct but often feels mechanical. Idioms land flat. Humor disappears. Cultural references confuse rather than connect.

Video Localization

Video localization adapts the entire viewing experience for a specific audience. This includes:

- Linguistic adaptation — not just translating words, but rewriting phrases so they feel native. “Hit the ground running” doesn’t translate literally into most languages. A localized version uses an equivalent idiom that carries the same energy.

- Visual adjustments — on-screen text, graphics, currency symbols, date formats, even color associations that carry different meanings across cultures.

- Tonal calibration — a casual, first-name tone works in American English marketing. In Japanese or Korean business contexts, the same casualness can feel disrespectful.

- Pacing and rhythm — German sentences tend to run longer than English. Japanese often expresses the same information in fewer written characters, though its spoken length depends on delivery. These differences directly affect how audio aligns with visuals.

The bottom line: if you’re translating a video to check a compliance box, basic translation may suffice. If you’re translating a video to actually convert, engage, or educate an audience in another market, you need localization.

AI tools have become remarkably capable at both — but only when you understand what you’re asking for.

Subtitles, Voiceover, or Lip Sync: Choosing the Right Format

Not every multilingual video needs full lip-synced dubbing. The right format depends on your content type, budget, timeline, and audience expectations. Here’s how each option stacks up.

Option 1: AI-Generated Subtitles

What it does: Translates the spoken content and displays it as timed text overlays on the original video. The original audio stays intact.

Best for:

- Tutorial and how-to videos where viewers expect to read along

- Social media content on platforms where many users watch with the sound off (Instagram, LinkedIn, Facebook)

- Internal training videos with modest budgets

- Content where the original speaker’s voice and delivery are part of the brand

Advantages:

- Fastest to produce

- Lowest cost

- Preserves original audio authenticity

- Easy to update when scripts change

Limitations:

- Viewers must read while watching — splits attention

- Doesn’t work well for fast-paced content with dense visuals

- Some audiences (particularly in markets accustomed to dubbing) perceive subtitles as lower effort

Quality tip: AI subtitle generators can auto-translate and auto-time captions in seconds. But always review line breaks. A subtitle that splits a noun from its adjective across two lines forces the viewer to mentally reassemble the sentence, which kills comprehension speed.
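To make the line-break advice concrete, here is a minimal Python sketch of one common heuristic. It splits a long cue into two lines near the middle and penalizes any break that leaves a short function word hanging at the end of the top line. The 42-character limit, the English-only `NO_BREAK_AFTER` list, and the `split_cue` name are assumptions for illustration. Reliably catching a noun split from its adjective would need a part-of-speech tagger, and your platform's style guide may set different limits.

```python
# Hypothetical helper: split one subtitle cue into at most two lines.
# Assumptions: 42 characters per line, and a tiny English list of words
# that should not end a line (articles, prepositions, conjunctions).
MAX_CHARS = 42
NO_BREAK_AFTER = {"a", "an", "the", "of", "to", "in", "on", "for", "and", "or", "with"}


def split_cue(text: str, max_chars: int = MAX_CHARS) -> list[str]:
    if len(text) <= max_chars:
        return [text]  # short enough for a single line
    words = text.split()
    best = None
    for i in range(1, len(words)):
        top = " ".join(words[:i])
        bottom = " ".join(words[i:])
        if len(top) > max_chars or len(bottom) > max_chars:
            continue  # this break point would overflow a line
        # Prefer balanced lines; heavily penalize ending a line on a function word.
        cost = abs(len(top) - len(bottom))
        if words[i - 1].lower() in NO_BREAK_AFTER:
            cost += 100
        if best is None or cost < best[0]:
            best = (cost, [top, bottom])
    if best is None:
        raise ValueError("Cue does not fit on two lines; split it into two cues")
    return best[1]


print(split_cue("Our platform allows you to easily create professional-quality videos"))
```

With this input the sketch picks a 33-character top line and a 34-character bottom line, and it avoids the break after "to" even though that line would also fit.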

Option 2: AI Voiceover (Dubbing)

What it does: Replaces the original audio with an AI-generated voice speaking the translated script. The visuals remain unchanged.

Best for:

- Marketing and promotional videos where audio immersion matters

- Explainer videos and product demos

- E-learning content where listening is the primary mode of consumption

- Podcast-style or narration-driven videos where the speaker isn’t visually prominent

Advantages:

- More immersive than subtitles

- Audiences can watch without reading

- Modern AI voices sound natural and support dozens of languages

- Faster and more affordable than hiring voiceover artists for every language

Limitations:

- Audio-visual mismatch when the speaker’s lip movements are visible

- AI voice may not perfectly capture the emotional range of the original delivery

- Translated script length can cause audio to run longer or shorter than the original scene

Quality tip: When generating AI voiceover, pay attention to speech rate. A direct Spanish translation of an English script typically runs 15-25% longer. If you force the AI to speak faster to fit the original timing, it sounds rushed and unnatural. Better to slightly trim the translated script — keep the meaning, reduce the word count.
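The trim-versus-speed-up decision can be written as a small rule. The Python sketch below assumes that a speed-up of up to about 10% still sounds natural. That threshold and the `fit_decision` name are illustrative assumptions to tune by ear, not a standard.

```python
# Hypothetical helper: decide whether a translated voiceover can be sped up
# slightly to fit the original slot, or whether the script should be trimmed.
# Assumption: a speed-up above ~10% starts to sound rushed (tune by ear).
MAX_SPEEDUP = 1.10


def fit_decision(original_seconds: float, translated_seconds: float) -> str:
    ratio = translated_seconds / original_seconds  # > 1 means the translation runs long
    if ratio <= 1.0:
        return "fits"
    if ratio <= MAX_SPEEDUP:
        return f"speed up {ratio:.2f}x"
    # Share of the natural-pace read to cut so it fits without speeding up.
    trim_pct = (1 - original_seconds / translated_seconds) * 100
    return f"trim about {trim_pct:.0f}% of the script"


print(fit_decision(10.0, 12.0))  # a 20% longer Spanish read → trim about 17% of the script
```

Trimming 17% of the translated script reverses a 20% expansion because the cut is measured against the longer, translated read.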

Option 3: AI Lip Sync

What it does: Not only replaces the audio but also adjusts the speaker’s lip movements to match the new language. The result looks like the speaker is actually speaking the translated language.

Best for:

- CEO or founder messages where face-to-camera delivery builds trust

- Sales videos and personalized outreach where authenticity drives conversion

- Global ad campaigns where the same spokesperson addresses every market

- Any content where the speaker’s face is prominently visible and lip mismatch would be distracting

Advantages:

- Highest level of immersion and authenticity

- Viewers perceive the content as natively produced for their language

- Maintains the emotional connection of face-to-camera delivery

- Dramatically reduces the cost of shooting separate versions per market

Limitations:

- Most computationally intensive option

- Quality varies significantly between AI tools — poor lip sync looks worse than no lip sync

- Requires clean, well-lit source footage for best results

Quality tip: AI lip sync works best when the source video has a clear, front-facing shot of the speaker with good lighting and minimal obstruction. Side angles, hands near the face, or heavy facial hair can reduce accuracy. If you’re planning multilingual distribution from the start, shoot your original video with lip sync in mind.

Quick Comparison Table

| Factor | Subtitles | Voiceover | Lip Sync |
|---|---|---|---|
| Production speed | ★★★★★ | ★★★★ | ★★★ |
| Cost | Lowest | Medium | Highest |
| Audience immersion | Moderate | High | Highest |
| Best when speaker is visible | ✓ (acceptable) | △ (slight mismatch) | ✓✓ (seamless) |
| Best when speaker is off-screen | ✓ | ✓✓ (ideal) | N/A |
| Ease of updates | Very easy | Easy | Moderate |

Recommendation for most teams: Start with voiceover for narration-driven content. Use lip sync for any face-to-camera content. Add subtitles as a supplementary layer across all formats — many viewers watch with sound off regardless of language.

How to Control Pacing and Timing Across Languages

This is where most AI-translated videos fall apart — not because the translation is wrong, but because the timing is off.

Every language has a different information density. The same sentence takes different amounts of time to speak depending on the target language.

Typical Expansion and Compression Rates

| Target Language | Approximate Length Change vs. English |
|---|---|
| Spanish | +15% to +25% longer |
| French | +15% to +20% longer |
| German | +10% to +30% longer |
| Portuguese | +15% to +25% longer |
| Japanese | -10% to -20% shorter (spoken) |
| Chinese (Mandarin) | -10% to -20% shorter (spoken) |
| Arabic | +10% to +25% longer |
| Korean | -5% to -10% shorter |

These percentages matter because your video has fixed visual anchors: scene transitions, on-screen animations, product demos, and music cues. When the translated audio runs 25% longer than the original, something has to give.
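To see how much "has to give," you can turn the table's ranges into expected durations before recording anything. The Python sketch below reuses the approximate percentages from the table as rules of thumb. The dictionary and the `estimate_range` name are illustrative, so measure your actual voiceover before you lock the edit. For example, 60 seconds of English narration would land at roughly 69 to 75 seconds in Spanish.

```python
# Planning sketch: turn the approximate ranges in the table above into a
# duration range for a given English narration length. These are rules of
# thumb; measure your actual voiceover before locking the edit.
LENGTH_CHANGE = {  # (low %, high %) length change versus English
    "Spanish": (15, 25),
    "French": (15, 20),
    "German": (10, 30),
    "Portuguese": (15, 25),
    "Japanese": (-20, -10),
    "Chinese (Mandarin)": (-20, -10),
    "Arabic": (10, 25),
    "Korean": (-10, -5),
}


def estimate_range(english_seconds: float, language: str) -> tuple[float, float]:
    low, high = LENGTH_CHANGE[language]
    return english_seconds * (1 + low / 100), english_seconds * (1 + high / 100)


for lang in ("Spanish", "German", "Japanese"):
    lo, hi = estimate_range(60.0, lang)
    print(f"{lang}: {lo:.0f}-{hi:.0f} s")
```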

Three Strategies to Manage Timing Shifts

Strategy 1: Adaptive Script Editing

Instead of translating word-for-word and then compressing the audio, edit the translated script before generating the voiceover. Identify which sentences can be tightened without losing meaning.

Example:

- English: “Our platform allows you to easily create professional-quality videos in just a few minutes.”

- Word-for-word Spanish: “Nuestra plataforma te permite crear fácilmente videos de calidad profesional en solo unos minutos.” (longer)

- Adapted Spanish: “Crea videos profesionales en minutos con nuestra plataforma.” (tighter, same meaning, fits the original timing)
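To compare drafts like these before generating audio, a rough syllable estimate is often more telling than a character count. The Python sketch below counts runs of consecutive vowels as a crude stand-in for syllables. This is an assumption that works only for Latin-script languages and miscounts some words, such as "crear." It is meant for comparing drafts of the same line, not for precise timing.

```python
import re

# Crude syllable estimate: count runs of consecutive vowels in Latin script.
# It miscounts hiatus ("crear" counts as one), silent letters, and does not
# work for Japanese, Chinese, Arabic, or Korean scripts.
VOWEL_RUN = re.compile(r"[aeiouyáéíóúü]+")


def estimate_syllables(text: str) -> int:
    return len(VOWEL_RUN.findall(text.lower()))


def length_ratio(source: str, draft: str) -> float:
    """Estimated spoken length of a draft relative to the source line."""
    return estimate_syllables(draft) / estimate_syllables(source)


english = "Our platform allows you to easily create professional-quality videos in just a few minutes."
literal = "Nuestra plataforma te permite crear fácilmente videos de calidad profesional en solo unos minutos."
adapted = "Crea videos profesionales en minutos con nuestra plataforma."
print(f"literal: {length_ratio(english, literal):.2f}")  # → literal: 1.18
print(f"adapted: {length_ratio(english, adapted):.2f}")  # → adapted: 0.68
```

Even this rough measure puts the word-for-word version about 18% over the English line, while the adapted version leaves room to spare.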

Strategy 2: Dynamic Pause Adjustment

AI voiceover tools can adjust the pacing between sentences and phrases. Instead of uniformly speeding up the entire narration (which sounds unnatural), selectively reduce pauses between sentences while keeping the speaking rate natural.
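The idea of shrinking pauses while leaving speech untouched can be sketched in a few lines of Python. This sketch assumes you already know the length of each spoken sentence and each pause. The 0.15-second minimum pause and the `fit_pauses` name are illustrative assumptions. Time is taken from each pause in proportion to how much it can give.

```python
# Minimal sketch: shrink the pauses between sentences, never the speech itself,
# until the narration fits a target duration.
# Assumption: each pause can shrink to a floor (0.15 s here) and still sound natural.
MIN_PAUSE = 0.15


def fit_pauses(speech: list[float], pauses: list[float], target: float,
               min_pause: float = MIN_PAUSE) -> list[float]:
    """Return new pause lengths (seconds) so sum(speech) + sum(pauses) == target."""
    overrun = sum(speech) + sum(pauses) - target
    if overrun <= 0:
        return pauses[:]  # already fits; keep the original pacing
    # Only the portion of each pause above the floor can be removed.
    slack = [max(p - min_pause, 0.0) for p in pauses]
    total_slack = sum(slack)
    if total_slack < overrun:
        raise ValueError("Pauses alone cannot absorb the overrun; trim the script")
    # Remove time from each pause in proportion to its available slack.
    return [p - s * overrun / total_slack for p, s in zip(pauses, slack)]


new = fit_pauses([3.0, 4.0, 2.5], [0.8, 0.6], target=10.5)
print([round(p, 2) for p in new])  # → [0.56, 0.44]
```

If the pauses cannot absorb the overrun, the sketch raises an error. In that case, trimming the script is the better next step, as Strategy 1 suggests.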

Strategy 3: Scene-Aware Segmentation

Break the script into segments that align with scene transitions. Translate and time each segment independently rather than treating the entire script as one continuous block. This prevents a timing mismatch in one segment from cascading through the rest of the video.

In tools like CrePal.ai, the AI Director Agent handles this automatically. When you request a multilingual version of your video, the system analyzes scene boundaries, adjusts translated script length per segment, and ensures audio-visual alignment throughout — no manual timing work required.
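If you time segments yourself instead, the per-scene check can be sketched in Python. This sketch assumes each scene's translated audio starts at that scene's first frame. Under that assumption, an overrun is reported for its own scene rather than pushing every later scene out of sync. The `Segment` type, the 0.2-second overrun tolerance, and the 1-second silence limit are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    scene_start: float    # seconds: where this scene begins
    scene_end: float      # seconds: the next scene transition
    audio_seconds: float  # length of the translated voiceover for this scene


def check_segments(segments: list[Segment], overrun_tol: float = 0.2,
                   max_gap: float = 1.0) -> list[tuple[int, str, float]]:
    """Check each scene on its own; audio is assumed to start at scene_start,
    so an overrun is reported for that scene instead of shifting later ones."""
    issues = []
    for i, seg in enumerate(segments):
        slot = seg.scene_end - seg.scene_start
        diff = seg.audio_seconds - slot
        if diff > overrun_tol:
            issues.append((i, "overrun", round(diff, 2)))
        elif -diff > max_gap:
            issues.append((i, "silent gap", round(-diff, 2)))
    return issues


scenes = [Segment(0, 5, 5.1), Segment(5, 12, 8.0), Segment(12, 20, 6.0)]
print(check_segments(scenes))  # → [(1, 'overrun', 1.0), (2, 'silent gap', 2.0)]
```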

Quality Review: How to Catch Issues Before Publishing

AI-generated translations are good. They are not infallible. A systematic review process catches the 5-10% of issues that separate a professional multilingual video from an embarrassing one.

The Four-Layer Quality Check

Layer 1: Linguistic Accuracy

Have a native speaker (an in-house colleague or a professional reviewer) check the translated script for:

- Mistranslations, especially industry-specific terminology

- Unnatural phrasing that sounds like a translation rather than native speech

- Gender and formality errors (critical in languages like French, German, Japanese, Korean)

- Number, date, and currency format accuracy

If you don’t have native speakers on your team, use AI-assisted back-translation as a first filter: translate the output back into English and compare it against the original. Major meaning shifts will surface immediately.
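The comparison step of back-translation can be partly automated so that reviewers read only the suspicious lines. The Python sketch below assumes you have already produced the back-translations with whatever machine translation tool you use, and that they are aligned line by line with the original script. It scores word overlap with the standard library's `difflib.SequenceMatcher`. This is a surface-level signal, not a judgment of meaning, and the 0.6 threshold is an illustrative assumption.

```python
from difflib import SequenceMatcher

# Assumptions: back_translations came from any MT tool you already use and are
# aligned line by line with the original English script. Word-overlap
# similarity is a surface check only: it flags lines for a human to read,
# it does not judge meaning.
THRESHOLD = 0.6  # illustrative cutoff; calibrate it on lines you have reviewed


def flag_meaning_shifts(originals: list[str], back_translations: list[str],
                        threshold: float = THRESHOLD) -> list[tuple[int, float]]:
    flagged = []
    for i, (orig, back) in enumerate(zip(originals, back_translations)):
        # Compare word sequences, ignoring case.
        score = SequenceMatcher(None, orig.lower().split(), back.lower().split()).ratio()
        if score < threshold:
            flagged.append((i, round(score, 2)))
    return flagged


originals = ["Start your free trial today", "Cancel anytime"]
backs = ["Start your free trial today", "Cancel the contract at any moment for a fee"]
print(flag_meaning_shifts(originals, backs))  # → [(1, 0.18)]
```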

Layer 2: Audio-Visual Sync

Watch the full video with the translated audio. Check for:

- Audio that extends beyond scene transitions

- Gaps of silence where the audio finishes too early

- Lip sync accuracy (if using lip sync): does the mouth movement look natural at normal playback speed?

- Subtitle timing: do captions appear and disappear in rhythm with the speech?
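Part of the subtitle-timing check can run before anyone watches the video. The Python sketch below reads SRT cues and flags two problems: cues that demand too many characters per second, and cues that start before the previous one ends. It assumes well-formed SRT with blank lines between cues and a 17 characters-per-second reading limit. Style guides differ on that limit, so treat it as a placeholder.

```python
import re

# Assumptions: well-formed SRT with a blank line between cues, and a
# 17 characters-per-second reading-speed ceiling (style guides differ).
STAMP = re.compile(r"(\d+):(\d{2}):(\d{2}),(\d{3})")


def to_seconds(stamp: str) -> float:
    h, m, s, ms = map(int, STAMP.fullmatch(stamp.strip()).groups())
    return h * 3600 + m * 60 + s + ms / 1000


def check_cues(srt_text: str, max_cps: float = 17.0) -> list[tuple[str, str]]:
    issues = []
    prev_end = 0.0
    for block in srt_text.strip().split("\n\n"):
        lines = block.strip().splitlines()
        cue_id = lines[0]
        start, end = (to_seconds(t) for t in lines[1].split("-->"))
        text = " ".join(lines[2:])
        cps = len(text) / (end - start)  # characters per second on screen
        if cps > max_cps:
            issues.append((cue_id, f"too fast: {cps:.1f} chars/sec"))
        if start < prev_end:
            issues.append((cue_id, "overlaps previous cue"))
        prev_end = end
    return issues


srt = (
    "1\n00:00:01,000 --> 00:00:04,000\nCrea videos profesionales en minutos.\n\n"
    "2\n00:00:03,500 --> 00:00:05,000\nCon nuestra plataforma, en cualquier idioma."
)
print(check_cues(srt))
```

Here the second cue is flagged twice: it must be read at about 29 characters per second, and it starts half a second before the first cue ends.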

Layer 3: Cultural Appropriateness

This layer requires cultural knowledge, not just language skills. Look for:

- Idioms or humor that don’t translate culturally

- Visual elements that might carry unintended meaning in the target culture (colors, gestures, symbols)

- Tone mismatches: is the level of formality appropriate for the target market?

Layer 4: Technical Quality

Final technical pass:

- Audio levels consistent between languages

- No audio artifacts, clicks, or unnatural breathing patterns from the AI voice

- Subtitle font readable against all background scenes

- Video export resolution and format correct for the target platform
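A first pass on consistent audio levels can compare each language track against a reference track. The Python sketch below uses plain RMS level in dBFS on decoded float samples. That is a simplification: delivery specs usually measure integrated loudness in LUFS (ITU-R BS.1770), which adds frequency weighting and gating. Decoding audio files into samples is left out, the sample tracks are synthetic, and the 1 dB tolerance is an assumption.

```python
import math


# Simplification: plain RMS level in dBFS, not LUFS loudness (ITU-R BS.1770).
def rms_dbfs(samples: list[float]) -> float:
    """RMS level of float samples in [-1.0, 1.0], in dB relative to full scale."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def level_mismatches(tracks: dict[str, list[float]], reference: str,
                     tolerance_db: float = 1.0) -> dict[str, float]:
    """Return languages whose level differs from the reference by more than tolerance_db."""
    ref = rms_dbfs(tracks[reference])
    result = {}
    for lang, samples in tracks.items():
        delta = rms_dbfs(samples) - ref
        if abs(delta) > tolerance_db:
            result[lang] = round(delta, 1)
    return result


# Synthetic square-wave "tracks" stand in for decoded audio.
tracks = {"en": [0.5, -0.5] * 100, "es": [0.5, -0.5] * 100, "fr": [0.25, -0.25] * 100}
print(level_mismatches(tracks, "en"))  # → {'fr': -6.0}
```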

Streamlined Review Workflow

For teams publishing in five or more languages simultaneously, reviewing every frame of every version isn’t always feasible. Prioritize like this:

- Full review for your top 2-3 revenue markets

- Script review + spot-check for secondary markets

- Automated back-translation check for all remaining languages

- Native speaker feedback loop — publish, collect feedback, iterate
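If you track revenue by market, the tiering above can be generated rather than decided ad hoc. The Python sketch below is a hypothetical helper. It assumes you can rank languages by revenue and that you pick how many markets count as "secondary," since the workflow above does not fix that number. The feedback loop applies to every tier, so it is not modeled as a separate tier.

```python
# Hypothetical helper mirroring the review tiers above. Assumptions: languages
# can be ranked by revenue, and `secondary` (how many markets count as
# "secondary") is a number you choose.
def review_plan(revenue_by_language: dict[str, float], full: int = 3,
                secondary: int = 3) -> dict[str, str]:
    ranked = sorted(revenue_by_language, key=revenue_by_language.get, reverse=True)
    plan = {}
    for rank, lang in enumerate(ranked):
        if rank < full:
            plan[lang] = "full review"
        elif rank < full + secondary:
            plan[lang] = "script review + spot-check"
        else:
            plan[lang] = "automated back-translation check"
    return plan


revenue = {"es": 500, "fr": 300, "de": 400, "ja": 200, "pt": 100, "ar": 50, "ko": 20}
for lang, tier in review_plan(revenue, full=2, secondary=2).items():
    print(lang, "->", tier)
```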

Best Use Cases: Where Multilingual AI Video Delivers the Highest ROI

Not every video justifies the effort of multilingual translation. Focus your resources on content types where localization directly drives measurable business outcomes.

Global Campaign Videos

When you’re launching a product, feature, or brand campaign across multiple markets simultaneously, multilingual video isn’t optional — it’s the entire point. AI translation lets you produce localized versions of your campaign video in days instead of weeks, ensuring every market launches on schedule.

CrePal approach: Use the AI Director Agent to generate your campaign video in your primary language first. Then create localized versions with AI voiceover or lip sync, adjusting not just language but pacing, tone, and even music style to match regional preferences.

Tutorial and How-To Content

Educational content has the longest shelf life and the highest compounding ROI when localized. A product tutorial translated into ten languages continues generating support deflection, activation, and retention value for months or years.

Pro tip: For tutorials, voiceover plus subtitles is the ideal combination. Viewers can listen in their language while reading along during complex technical steps.

Sales and Outreach Videos

Personalized sales videos where a real person addresses the prospect by name and speaks to their specific use case tend to convert better than generic content. AI lip sync takes this further: your sales team records one version, and AI generates localized versions that look and sound native.

The impact: A sales rep who speaks only English can now deliver a personalized video message in the prospect’s native language, with natural lip movements and culturally appropriate tone. That’s not just efficiency — it’s a competitive advantage most teams don’t yet have.

E-Learning and Training

Organizations with global workforces need training content in multiple languages. AI voiceover dramatically reduces the cost and turnaround time of producing multilingual training modules, making it feasible to localize even frequently updated compliance or onboarding content.

Social Media and Short-Form Content

Short-form video for TikTok, Instagram Reels, and YouTube Shorts thrives on speed. AI subtitle generation lets you localize a trending piece of content into multiple languages within hours — fast enough to ride the trend before it fades.

How to Get Started with CrePal.ai

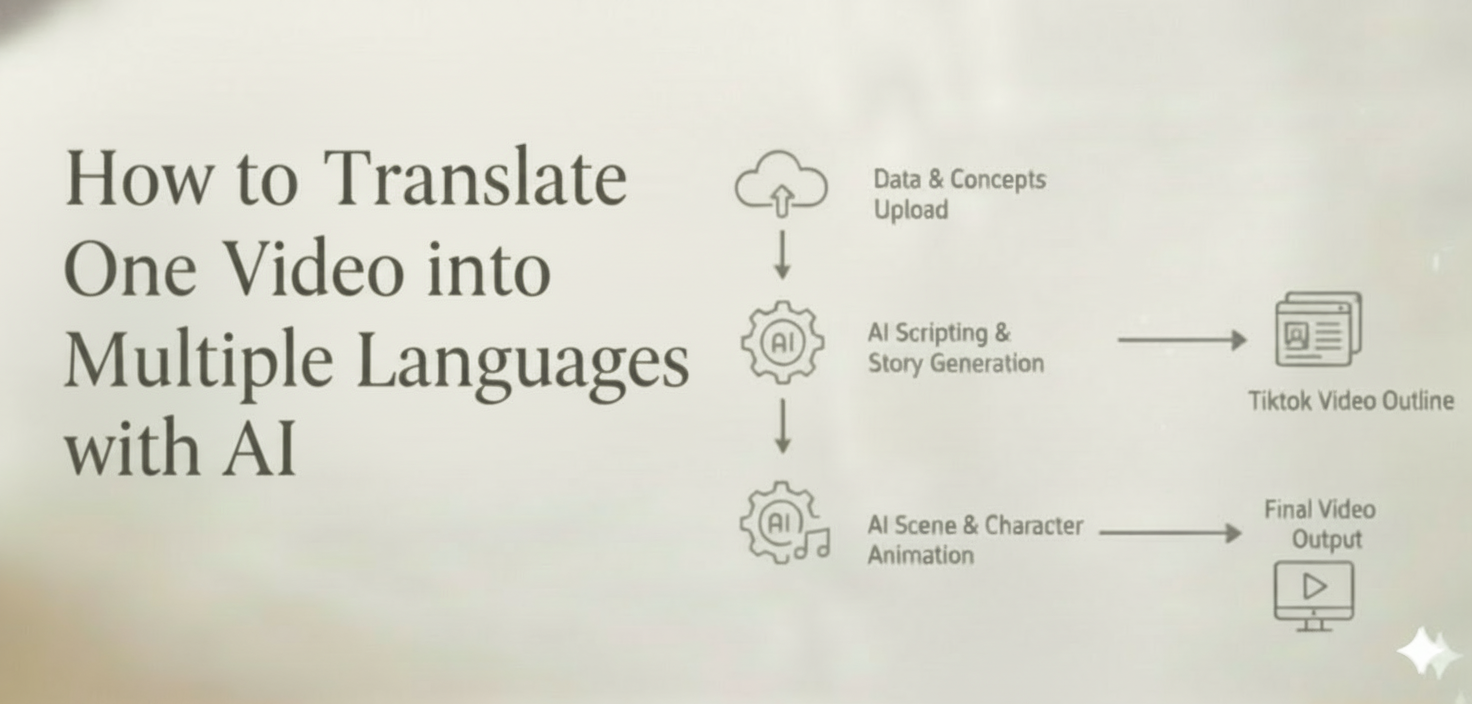

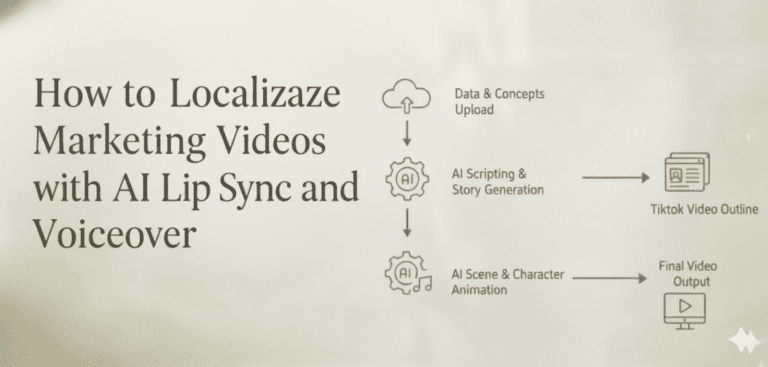

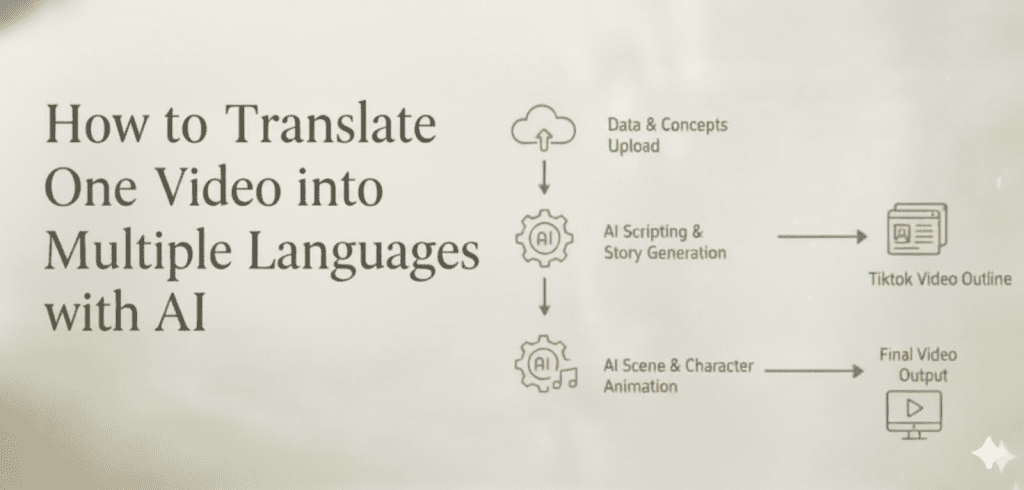

CrePal.ai’s AI Director Agent simplifies the entire multilingual video workflow:

- Create or upload your original video — use CrePal’s AI Story, Explainer Video, or Ads Video mini apps to generate your source content, or upload an existing video.

- Select your target languages — choose from dozens of supported languages.

- Choose your format — subtitles, AI voiceover, or full lip sync depending on your content type and audience.

- Review and refine — use conversational editing to adjust phrasing, pacing, or tone in any language version. Just tell the AI Director what to change in plain English.

- Export and distribute — download all language versions in platform-ready formats.

The entire process — from a single source video to multiple localized versions — takes minutes instead of weeks. No translation agency. No voiceover casting. No manual timeline editing.

Frequently Asked Questions

How many languages can AI translate a video into at once?

Modern AI video platforms support dozens of languages simultaneously. With CrePal.ai, you can generate voiceover and lip sync versions in all major global languages from a single source video. The practical limit is usually your review bandwidth, not technology.

Will AI-translated voiceovers sound robotic?

Usually not with current-generation AI voices. Today’s text-to-speech models produce natural-sounding narration with appropriate intonation, pacing, and emotional range, and quality has improved dramatically. Many listeners find premium AI voices hard to tell apart from human narration.

Is AI lip sync convincing enough for professional use?

For clean, well-lit, front-facing footage, yes. AI lip sync technology has reached a quality threshold where it’s being used in commercial advertising, corporate communications, and sales outreach. The key is starting with good source footage — clear face visibility, good lighting, and minimal obstruction around the mouth.

How do I ensure translation accuracy for technical or industry-specific content?

AI translation handles general business and marketing language well. For highly technical, legal, or medical content, add a native-speaker review layer focused specifically on terminology accuracy. Using a glossary or term list as a reference during the review process ensures consistency across all language versions.
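A term list can also be checked mechanically before human review. The Python sketch below flags glossary terms that appear in the source but whose approved translation is missing from the translated script. The glossary entries are hypothetical examples. Plain substring matching ignores inflection, plurals, and word order, so treat each hit as a prompt for a reviewer, not a verdict.

```python
def missing_terms(source: str, translated: str, glossary: dict[str, str]) -> list[str]:
    """List glossary terms used in the source whose approved translation is absent
    from the translated script. Plain substring matching: it ignores inflection,
    plurals, and word order, so treat hits as prompts for review."""
    src, tgt = source.lower(), translated.lower()
    return [term for term, approved in glossary.items()
            if term.lower() in src and approved.lower() not in tgt]


# Hypothetical glossary: source term -> approved Spanish rendering.
glossary = {
    "AI Director Agent": "AI Director Agent",  # brand name stays untranslated
    "lip sync": "sincronización labial",
    "voiceover": "locución",
}
source = "Use lip sync and voiceover with the AI Director Agent."
spanish = "Usa la sincronización de labios y la locución con el AI Director Agent."
print(missing_terms(source, spanish, glossary))  # → ['lip sync']
```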

What’s the cost difference between AI video translation and traditional methods?

Traditional multilingual video production — involving human translators, voiceover artists, studio recording, and manual video editing — typically costs thousands of dollars per language. AI-powered translation reduces this to a fraction of the cost, often making it economically viable to localize into ten or more languages where previously only two or three were feasible.

Turn One Video into a Global Content Library

The gap between companies that localize video content and those that don’t is widening. Audiences engage more, trust more, and convert more when content speaks their language — literally.

AI has eliminated the traditional barriers of cost, time, and complexity that made multilingual video production impractical for most teams. The technology used to translate one video into multiple languages with AI isn’t just available — it’s accessible, affordable, and increasingly indistinguishable from human-produced localization.

CrePal.ai brings this entire workflow under one roof. As an AI Director Agent, it doesn’t just translate words — it orchestrates the full localization process: script adaptation, voice generation, lip sync, timing adjustment, and conversational editing across every language version.

Your video already has the message. Now give it every audience it deserves.

Start creating multilingual videos with CrePal.ai — free to try

- Create or upload your original video — use CrePal’s AI Story, Explainer Video, or Ads Video mini apps to generate your source content, or upload an existing video.

- Select your target languages — choose from dozens of supported languages.

- Choose your format — subtitles, AI voiceover, or full lip sync depending on your content type and audience.

- Review and refine — use conversational editing to adjust phrasing, pacing, or tone in any language version. Just tell the AI Director what to change in plain English.

- Export and distribute — download all language versions in platform-ready formats.

Generating multiple localized versions from a single source video takes minutes instead of weeks, so your team's time goes into review rather than production. No translation agency. No voiceover casting. No manual timeline editing.

Frequently Asked Questions

How many languages can AI translate a video into at once?

Modern AI video platforms support dozens of languages simultaneously. With CrePal.ai, you can generate voiceover and lip sync versions in all major global languages from a single source video. The practical limit is usually your review bandwidth, not the technology.

Will AI-translated voiceovers sound robotic?

Not with current-generation AI voices. Today’s text-to-speech models produce natural-sounding narration with appropriate intonation, pacing, and emotional range. The quality has improved dramatically, and many viewers find premium AI voices difficult to distinguish from human narration.

Is AI lip sync convincing enough for professional use?

For clean, well-lit, front-facing footage, yes. AI lip sync technology has reached a quality threshold where it’s being used in commercial advertising, corporate communications, and sales outreach. The key is starting with good source footage — clear face visibility, good lighting, and minimal obstruction around the mouth.

How do I ensure translation accuracy for technical or industry-specific content?

AI translation handles general business and marketing language well. For highly technical, legal, or medical content, add a native-speaker review layer focused specifically on terminology accuracy. Using a glossary or term list as a reference during review helps keep terminology consistent across all language versions.
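Part of the glossary check can run before a human reviewer sees the script. The sketch below is a minimal illustration. It assumes a per-language glossary that maps each source term to its approved translation, and the `glossary_violations` helper is hypothetical. Matching is a case-insensitive substring test, so it will miss inflected forms and can misfire on partial words. Treat its output as a list of places to look, not a verdict.

```python
def glossary_violations(source_text, translated_text, glossary):
    """List (term, approved_translation) pairs where the source script uses
    a glossary term but the translation lacks the approved rendering.

    glossary: {source_term: approved_target_term} for one target language.
    Uses naive case-insensitive substring matching (no inflection handling).
    """
    src = source_text.lower()
    tgt = translated_text.lower()
    missing = []
    for term, approved in glossary.items():
        if term.lower() in src and approved.lower() not in tgt:
            missing.append((term, approved))
    return missing


glossary_es = {
    "dashboard": "panel de control",
    "workspace": "espacio de trabajo",
    "invoice": "factura",
}
source = "Open the dashboard and select your workspace."
translation = "Abre el tablero y selecciona tu espacio de trabajo."
print(glossary_violations(source, translation, glossary_es))
```

In this example, "dashboard" was rendered as "tablero" instead of the approved "panel de control", so it is the only entry flagged. "invoice" is skipped because it does not appear in the source.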

What’s the cost difference between AI video translation and traditional methods?

Traditional multilingual video production — involving human translators, voiceover artists, studio recording, and manual video editing — typically costs thousands of dollars per language. AI-powered translation reduces this to a fraction of the cost, often making it economically viable to localize into ten or more languages where previously only two or three were feasible.

Turn One Video into a Global Content Library

The gap between companies that localize video content and those that don’t is widening. Audiences engage more, trust more, and convert more when content speaks their language — literally.

AI has eliminated the traditional barriers of cost, time, and complexity that made multilingual video production impractical for most teams. The technology used to translate one video into multiple languages with AI isn’t just available — it’s accessible, affordable, and increasingly indistinguishable from human-produced localization.

CrePal.ai brings this entire workflow under one roof. As an AI Director Agent, it doesn’t just translate words — it orchestrates the full localization process: script adaptation, voice generation, lip sync, timing adjustment, and conversational editing across every language version.

Your video already has the message. Now give it every audience it deserves.

Start creating multilingual videos with CrePal.ai — free to try