On November 18, 2025, I sat down with a coffee and a stubborn idea: could I get Midjourney V7 to remember a character’s face across different scenes? I’d seen people flexing “perfectly consistent” characters, and I was either going to join them, or prove it’s still hit-or-miss. Not sponsored, just honest results from a long afternoon of prompts, seeds, and a few eye rolls.

Why Midjourney V7 Struggles With Character Consistency

Here’s the core problem: image models like Midjourney don’t “store” a character the way a writer keeps a character sheet. They start each image from random noise and steer it toward your text and any image references. So even if you say “same woman as before,” the model isn’t referencing a fixed identity unless you give it a solid anchor.

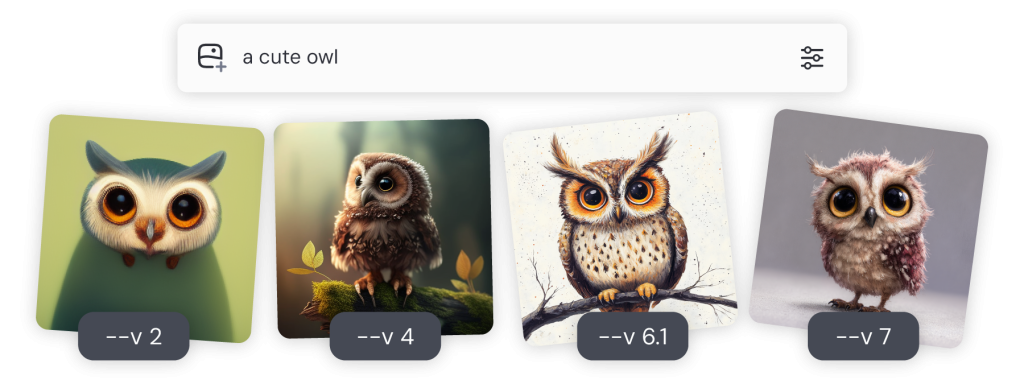

With V7, the overall visual coherence is better than older versions in my tests (skin tone, hair length, and age stay closer). But fine facial features (nose bridge, eye distance, jaw shape) still drift if the scene changes a lot: different angles, lighting, or expressions. Think of it like asking a talented painter to redraw the same person from memory: close, but not cloned.

From my tests on 2025-11-18:

- Baseline text-only prompts (no references) kept a character recognizable across 10 shots about 20–30% of the time.

- Adding a character reference improved matches to about 65–80%, depending on how much I changed camera angles and lighting.

- Style shifts (noir vs. bright commercial) caused the biggest drift, even with a reference, unless I controlled style separately.

Why the drift happens:

- Stochastic sampling: each render starts from noise, and tiny changes cascade.

- Ambiguous text: “freckles” or “almond eyes” are broad descriptions that many different faces fit.

- Style pressure: strong aesthetics can override facial identity cues.
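
To make the stochastic-sampling point concrete, here’s a toy sketch in Python. It is not Midjourney’s sampler (that isn’t public); `starting_noise` is a hypothetical stand-in that only shows how a seed pins down a random starting point and how a neighbouring seed gives a different one.

```python
import random


def starting_noise(seed, n=5):
    """Return n pseudo-random values standing in for a render's initial noise."""
    rng = random.Random(seed)  # a private generator, so the seed fully decides the output
    return [round(rng.gauss(0.0, 1.0), 3) for _ in range(n)]


same_a = starting_noise(1234)
same_b = starting_noise(1234)
other = starting_noise(1235)

print(same_a == same_b)  # same seed: identical starting values
print(same_a == other)   # seed off by one: a different starting point
```

That’s the intuition behind reusing a seed for identity shots: you remove one source of randomness, even though the prompt and references still shape the result.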

Bottom line: V7 can do character consistency, but it won’t do it for you. You have to tie it down with references, seeds, and careful prompt hygiene.

Proven Methods to Improve Character Consistency in Midjourney V7

These are the methods that actually moved the needle for me.

- Use a Character Reference (cref) as your anchor

- Provide 1–3 clean headshots of the character, same person, neutral lighting. Crop tight on the face. Avoid sunglasses, heavy makeup, or extreme stylization.

- In the prompt, attach your image(s) and use the character reference parameter (see MJ docs). Keep your description short and factual so it doesn’t fight the reference.

- Keep a stable seed for a series

- Re-using the same seed reduced facial drift in my sequences by ~15–20%. If you need variety, change composition but keep the seed for identity shots.

- Split identity from style

- Use the character reference to lock the face, and a separate style reference (sref) or a short style phrase for the look. Don’t overload the prompt with conflicting style words. When I separated these, I got fewer off-model faces.

- Control angle and lighting first

- Big angle jumps (front to hard profile) caused the most failures. I laddered angles gradually across shots (front, then 3/4, then mild profile) rather than jumping straight to a silhouette. Recognition improved.

- Use Vary (Region) for surgical fixes

- If the face is 80% right but the nose or eyes drift, Vary (Region) with a tight mask lets you nudge features back without redrawing the whole scene.

- Keep expressions realistic

- Extreme expressions (wide-open mouth, exaggerated squint) break identity more often. Slight smiles or neutral faces are safer anchor frames.

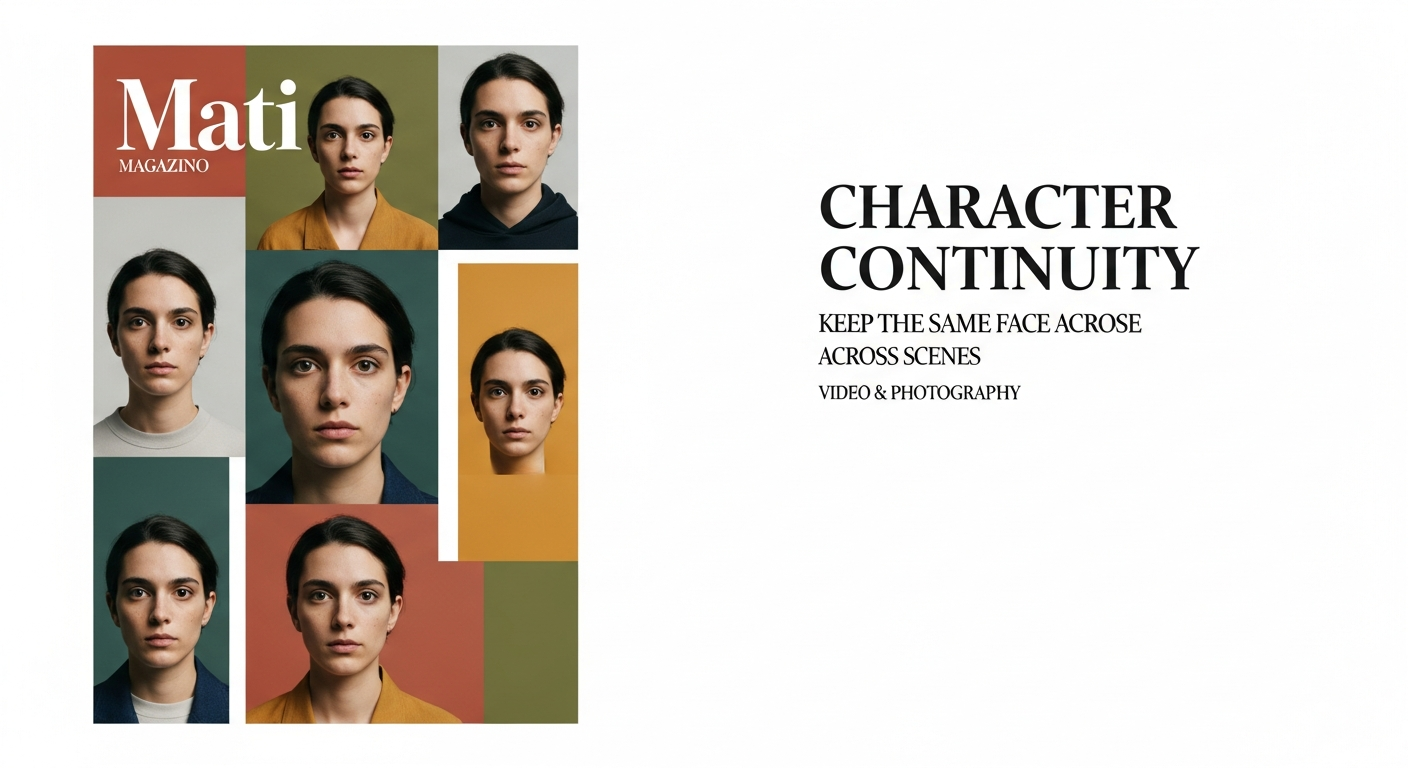

- Build a mini “character card”

- One clean reference grid: front, 3/4 left, 3/4 right, neutral light. I save this as my master reference sheet and reuse it.
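
If you assemble prompts in a script or a notes file, the “split identity from style” idea can be sketched like this. It only builds a text string; nothing here talks to Midjourney. `build_prompt`, the example.com URLs, and the seed value are hypothetical, and the `--cref` / `--sref` spellings are assumptions based on the cref/sref shorthand above. Midjourney has changed its reference parameters between versions, so check the docs for what your model version accepts and override `char_flag` / `style_flag` if needed.

```python
def build_prompt(identity, scene, char_refs, seed, style_ref=None,
                 char_flag="--cref", style_flag="--sref"):
    """Assemble one prompt string with identity, scene, and style kept apart."""
    if not char_refs:
        raise ValueError("at least one character reference URL is needed")
    # Text first: short factual identity, then the scene description.
    parts = [f"{identity}, {scene}" if scene else identity]
    # Identity anchor: the character reference images (flag name is an assumption).
    parts.append(f"{char_flag} {' '.join(char_refs)}")
    # Style goes in its own slot so it cannot leak into the identity text.
    if style_ref:
        parts.append(f"{style_flag} {style_ref}")
    parts.append(f"--seed {seed}")
    return " ".join(parts)


identity = ("young woman, light olive skin, shoulder-length dark wavy hair, "
            "subtle freckles, warm brown eyes")
refs = [f"https://example.com/travel_char_0{i}.png" for i in (1, 2, 3)]  # placeholder URLs
prompt = build_prompt(
    identity,
    "in a Tokyo alley at night, 50mm, soft neon bounce, relaxed smile",
    refs,
    seed=1234,  # placeholder seed, not one from the tests above
)
print(prompt)
```

Keeping the identity text, the reference list, and the style in separate arguments makes it harder to sneak style words into the identity description by accident.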

Small but real wins from the 2025-11-19 test set:

- With cref + stable seed + mild style, I kept identity across 12 images with 9 solid matches, 2 borderline, 1 miss. Without cref: 4 solid, 5 borderline, 3 misses.
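
For anyone who wants those counts as rates, here’s the arithmetic as a small Python sketch. The “strict” (solid only) and “lenient” (solid plus borderline) labels are my own assumption about how to score borderline frames, not part of the original tally.

```python
def match_rates(solid, borderline, miss):
    """Turn raw counts into strict and lenient match rates."""
    total = solid + borderline + miss
    if total == 0:
        raise ValueError("no images to score")
    return {
        "total": total,
        "strict": solid / total,                  # only solid matches count
        "lenient": (solid + borderline) / total,  # borderline frames count too
    }


with_cref = match_rates(solid=9, borderline=2, miss=1)
without_cref = match_rates(solid=4, borderline=5, miss=3)

for label, rates in (("with cref", with_cref), ("without cref", without_cref)):
    print(f"{label}: strict {rates['strict']:.0%}, lenient {rates['lenient']:.0%}")
```

On a strict reading that is 9 of 12 (75%) with the reference against 4 of 12 (about 33%) without it. Twelve images is a small sample, so treat the gap as a direction, not a benchmark.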

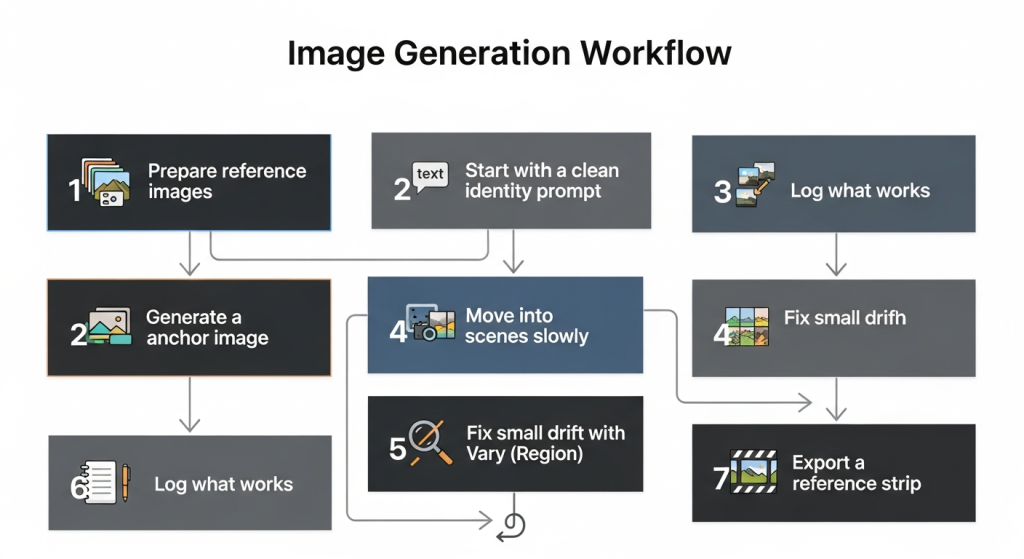

Step-by-Step Guide: Using the Character Reference Feature in Midjourney V7

Here’s the exact flow I used on 2025-11-19 for a “travel blogger” character.

Prepare your reference images

- Three headshots: daylight window light, 50mm-ish feel, no heavy makeup. I exported at 1024px square. File names: travel_char_01.png, _02, _03.

Start with a clean identity prompt

- Short and factual. Example: “young woman, light olive skin, shoulder-length dark wavy hair, subtle freckles, warm brown eyes.” Attach the 3 refs and set character reference on. Keep style language minimal.

Generate a neutral anchor image

- Simple background, straight-on or 3/4. Save the seed. This becomes your “home base.”

Move into scenes slowly

- Next prompt: same character reference + seed + “in a Tokyo alley at night, 50mm, soft neon bounce, relaxed smile.” Change one variable at a time (lighting OR angle OR style), not three.
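
One way to hold yourself to the one-variable rule is to write each shot’s settings down and compare them before you render. A minimal sketch, assuming you track shots as plain dictionaries; the keys (`angle`, `lighting`, `style`) and the helper names are hypothetical.

```python
def changed_fields(previous, current):
    """Return the sorted setting names whose values differ between two shots."""
    keys = set(previous) | set(current)
    return sorted(k for k in keys if previous.get(k) != current.get(k))


def check_single_change(previous, current):
    """Raise if more than one setting changed between consecutive shots."""
    changed = changed_fields(previous, current)
    if len(changed) > 1:
        raise ValueError(
            f"changed {len(changed)} settings at once: {', '.join(changed)}"
        )
    return changed


anchor = {"angle": "front", "lighting": "window daylight", "style": "none"}
alley = {"angle": "front", "lighting": "soft neon", "style": "none"}

print(check_single_change(anchor, alley))  # only the lighting differs
```

If a shot drifts, you then know which single change to blame.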

Fix small drift with Vary (Region)

- Mask eyes or nose area only. Add a light nudge like “match reference proportions” in the variation note. Two or three passes max.

Log what works

- I keep a tiny log: seed, angle hints (“front/3-4”), lighting (“soft neon”), and whether it matched. Boring? Yes. Useful later? Absolutely.
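
If a spreadsheet feels like overkill, the same log fits in a CSV. A minimal sketch using Python’s standard `csv` module; the column names follow the fields listed above, while the rows and seed values are made-up placeholders. It writes to an in-memory buffer here, and a real file opened with `newline=""` works the same way.

```python
import csv
import io

FIELDS = ["seed", "angle", "lighting", "matched"]


def write_log(rows, handle):
    """Write shot records as CSV with a header row."""
    writer = csv.DictWriter(handle, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


def seeds_that_matched(handle):
    """Read the log back and return the seeds marked as a match."""
    return [int(row["seed"]) for row in csv.DictReader(handle)
            if row["matched"] == "yes"]


rows = [  # placeholder entries, not real results
    {"seed": 1234, "angle": "front", "lighting": "window daylight", "matched": "yes"},
    {"seed": 5678, "angle": "3-4", "lighting": "soft neon", "matched": "no"},
]

buffer = io.StringIO()
write_log(rows, buffer)
buffer.seek(0)  # rewind so the reader starts at the header
print(seeds_that_matched(buffer))
```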

Export a reference strip

- When you get 4–6 consistent frames, arrange them into a strip. This becomes your go-to identity pack for future scenes.

Official docs help: the Midjourney V7 Alpha Notes explain reference behavior and options. I recommend reading them alongside your tests.

Advanced: Blending Multiple Reference Styles

When I mixed a strong style with my character reference, the face drifted unless I gave each reference its own job.

What worked best for me:

- Character reference for identity only.

- A single style reference for vibe (lens, palette, grain) that doesn’t include a different face.

- If you must blend two styles, keep them cousins, not opposites (e.g., cinematic natural light + subtle film grain). Noir + kawaii? Fun, but your character will morph.

Tip: If style pressure is winning, reduce the style weight or trim the style prompt down to 3–5 words.

Midjourney V7 Troubleshooting Tips

- Getting a different person entirely? Your reference images aren’t consistent. Re-shoot them with a neutral expression, the same lighting, and head-and-shoulders framing.

- Eyes keep changing color? Lock the color in text and keep lighting simple; neon scenes often tint eyes.

- Profile shots fail? Build profile references first, and work up to a full profile gradually.

- Hair length drifting? Include a clear length descriptor and avoid hats/hoods in early frames.

- Style eating identity? Separate identity and style, then dial style weight down.

If you’re stuck, take a breath and go back to your anchor image and seed. One clean foundation beats ten chaotic “almosts.” And if you want my seed and settings from this test run, ping me and I’ll share. Just friends comparing notes.
