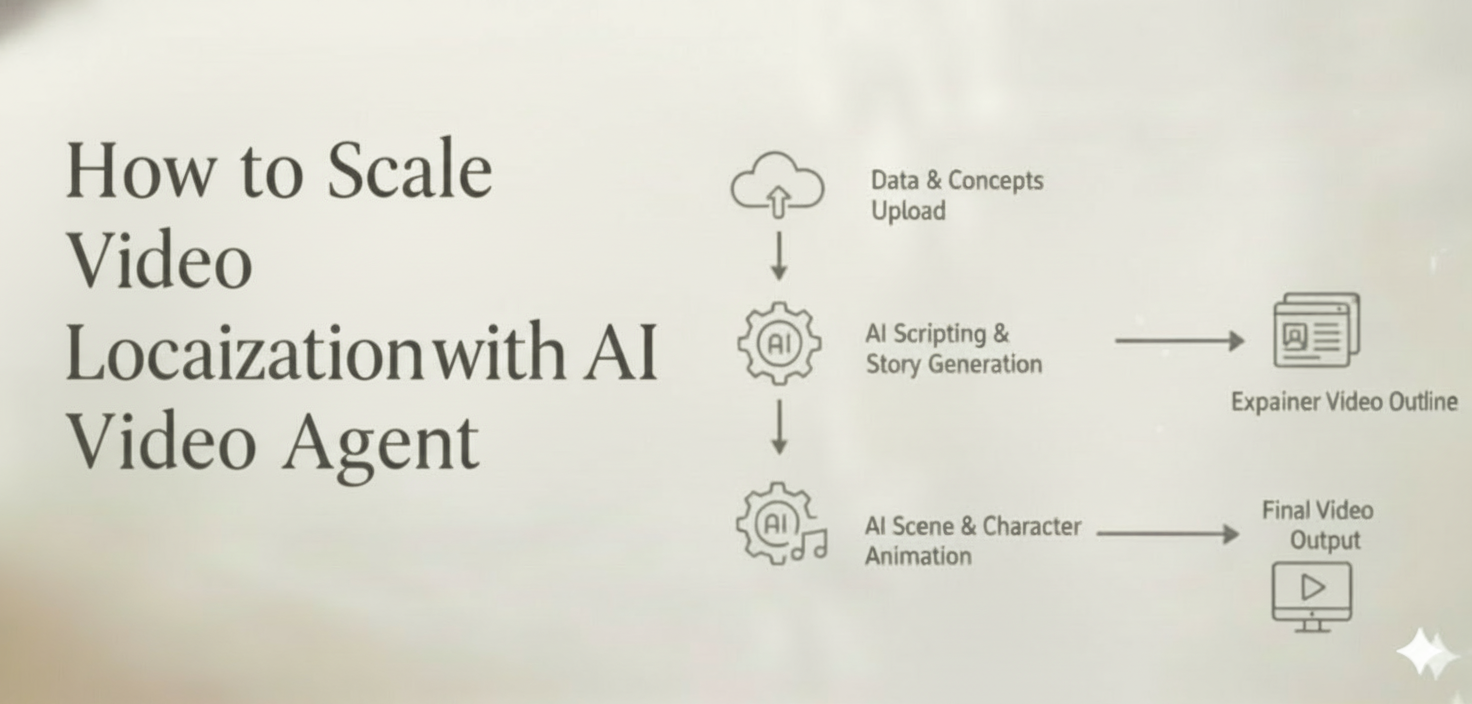

Meta: See how teams can scale video localization with an AI video agent. Plan versions, manage edits, and export campaign-ready assets faster across markets and languages.

Your campaign is performing well in one market. Leadership wants it in twelve more by end of quarter. Different languages, different aspect ratios, different cultural nuances, and the same three-person creative team that’s already stretched thin.

This is the moment most video localization efforts stall. Not because the strategy is wrong, but because the production model cannot keep up. Translating a single hero video into region-ready variants has traditionally meant re-editing timelines, re-recording voiceovers, re-designing text overlays, and coordinating across vendors, freelancers, and internal reviewers for every single version.

In this guide, we break down exactly why multi-market video localization breaks teams, and how an AI video agent like CrePal.ai fundamentally changes the math on output, consistency, and turnaround time.

Why Teams Struggle to Localize Video at Scale

Video localization is not a creative problem. It is an operations problem disguised as a creative one.

Most brand teams and agencies have the strategic clarity to know what they need: the same narrative adapted for different regions, languages, and platforms. The breakdown happens in execution. Here is why:

Volume Multiplies Faster Than Headcount

A single campaign video destined for five markets, three platforms each, with two language variants per market produces 30 distinct deliverables. Double the campaign cadence and you are looking at 60 assets per cycle. No team scales headcount at that rate.
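The arithmetic above can be checked with a short sketch. The market codes and platform names are placeholders chosen for illustration, not taken from any real campaign; only the counts (five markets, three platforms, two language variants) come from the text.

```python
from itertools import product

# Hypothetical placeholders: only the counts (5, 3, 2) come from the article.
markets = ["US", "DE", "JP", "BR", "AE"]
platforms = ["youtube", "tiktok", "instagram"]
language_variants_per_market = 2

# Every (market, platform, language variant) combination is one deliverable.
deliverables = list(
    product(markets, platforms, range(1, language_variants_per_market + 1))
)

per_campaign = len(deliverables)       # 5 * 3 * 2
per_cycle_doubled = per_campaign * 2   # two campaigns per cycle instead of one

print(per_campaign)
print(per_cycle_doubled)
```

Adding a sixth market or a fourth platform multiplies the total rather than adding to it, which is why the asset count outruns headcount so quickly.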

Every Version Requires a Full Production Pass

Traditional localization treats each variant almost like a new project. Swap the voiceover? The timing shifts, so the edit changes. Change the text overlays? Now the motion graphics need re-rendering. Adjust to a vertical format? The entire composition must be reconsidered. Each “small change” triggers a cascade of manual adjustments.

Coordination Overhead Eats the Timeline

Between translators, voiceover artists, motion designers, editors, and regional reviewers, a single localized version can involve five to eight handoffs. Multiply that across markets and the project management burden alone becomes a full-time role.

The result: campaigns launch late, creative quality drifts between versions, and teams burn out producing volume instead of doing strategic work.

Where Traditional Localization Workflows Break

To understand the fix, it helps to map exactly where the traditional workflow fractures.

Breakpoint 1: Script and Voiceover Turnaround

Translating scripts, booking voiceover talent, recording, reviewing, and re-recording for tone accuracy is one of the slowest links in the chain. A single language variant can take days.

Breakpoint 2: Manual Re-Editing Per Format

Adapting a 16:9 hero video into 9:16 for TikTok or Reels is not a crop—it is a re-edit. Key visual elements shift, text placement changes, pacing often needs adjustment. Most teams do this by hand in Premiere or After Effects, timeline by timeline.

Breakpoint 3: Style and Character Drift

When different editors, freelancers, or regional partners handle different versions, visual consistency erodes. Colors shift. Typography varies. Character appearances change between scenes. The brand feels fragmented across markets.

Breakpoint 4: Review and Approval Bottlenecks

Stakeholders in each region need to review and approve. Without a centralized system, feedback lives in email threads, Slack messages, and spreadsheets. Revisions get lost. Versions get confused. Deadlines slip.

These are not edge cases. They are the default experience for any team trying to localize video beyond two or three markets.

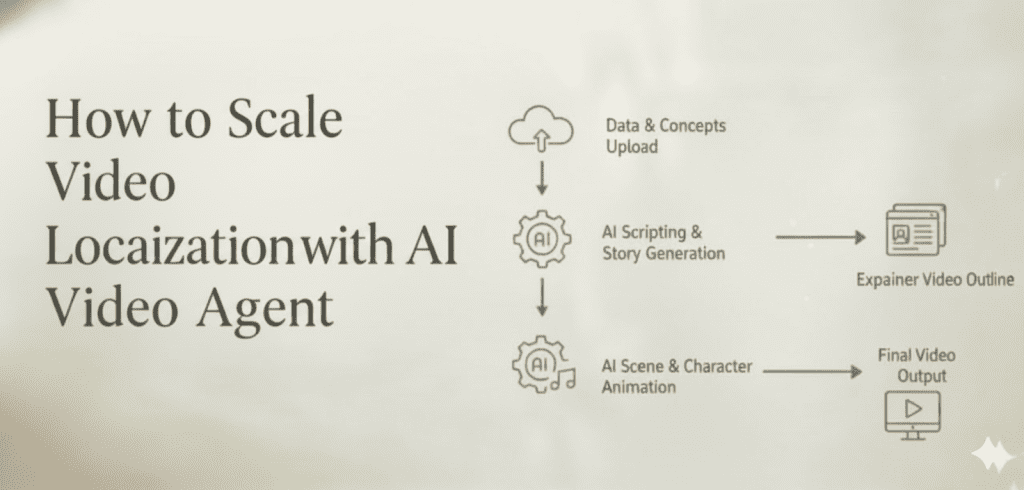

How an AI Video Agent Changes the Workflow

An AI video agent is not another editing tool added to the stack. It is a fundamentally different production model—one where a single intelligent system handles planning, generation, and iteration across all versions.

CrePal.ai operates as an AI Director Agent: you describe what you need in natural language, and the agent orchestrates scriptwriting, scene generation, model selection, voiceover, soundtrack, and assembly automatically. For localization, this architecture unlocks capabilities that manual workflows simply cannot match.

One Brief, Multiple Versions

Describe your campaign once. The AI Director Agent generates a base video, then produces region-specific variants—adjusting language, voiceover, text overlays, pacing, and cultural references—without requiring a separate production pass for each version. One prompt can spin into dozens of market-ready assets.

Conversational Editing Across All Variants

Need to adjust the tone of the German voiceover? Shorten the intro for the TikTok cut? Change the background music for the Middle East version? With CrePal’s conversational editing, you make changes by describing them in plain language. No timeline scrubbing, no re-rendering, no switching between software.

Multi-Model Intelligence for Optimal Output

CrePal integrates models like Google Veo, Pika Labs, Runway, Seedance, and Suno—automatically selecting the best model for each element of each version. The lip sync model handles talking-head variants. The music model generates region-appropriate soundtracks. The video generation model maintains visual quality across aspect ratios. You get the best available output for every component without manually managing multiple platforms.

Character and Style Consistency at Scale

This is where the agent model matters most for localization. CrePal maintains character consistency and style coherence across every version—same brand colors, same character appearances, same visual language—regardless of how many variants you produce. The brand stays unified even at 30+ deliverables per campaign.

How Agencies and Brand Teams Can Collaborate with an AI Agent

Adopting an AI video agent does not mean replacing your creative team. It means restructuring who does what—and dramatically increasing what is possible.

Strategic Roles Stay Human

Campaign strategy, brand positioning, audience insight, cultural nuance decisions—these remain firmly in human hands. The AI agent does not decide your message. It executes your vision at scale.

The Agent Handles Production Execution

Scriptwriting, scene generation, voiceover synthesis, format adaptation, music scoring, and assembly—these high-volume, repetitive production tasks shift to the agent. Your editors and designers move from production labor to creative direction and quality oversight.

Review Becomes Iterative, Not Sequential

Instead of waiting for a finished version to review, teams can interact with the agent throughout the process. Ask for a rough cut, give feedback in natural language, see revisions in minutes instead of days. The feedback loop compresses from weeks to hours.

A Practical Team Structure

| Role | Traditional Workflow | With AI Video Agent |
| --- | --- | --- |
| Creative Director | Briefs each version separately | Sets direction once, reviews variants |
| Editor / Designer | Manually edits every version | Directs agent, handles final QA |
| Project Manager | Coordinates 5–8 vendors per version | Manages one agent-driven pipeline |
| Translator / VO Artist | External vendor, days per language | AI-generated, refined through conversation |
| Regional Reviewer | Reviews after full production | Reviews and iterates in real time |

The team does not shrink; it refocuses. More time on strategy and creative quality. Less time on mechanical production.

How to Balance Output Volume and Brand Consistency

The core tension in localization is this: every additional version increases the risk of brand drift. More assets, more chances for inconsistency.

An AI video agent resolves this tension structurally, not through more oversight.

Centralized creative logic. The agent holds your brand parameters (visual style, color palette, character design, tone of voice) as persistent context. Every version it generates inherits these parameters by default.

Template-level control with version-level flexibility. You define the creative framework once. The agent adapts within that framework for each market, format, and language—without breaking the boundaries you set.

Instant consistency audits. Because all versions are generated through one system, you can compare variants side by side, catch drift immediately, and correct through a single conversational instruction that propagates across versions.

The result is something most teams have never experienced: high volume and high consistency simultaneously, without exponentially increasing review cycles or headcount.
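One way to picture shared brand parameters with per-version flexibility is a small data model. This is an illustrative sketch only: `BrandSpec`, `Variant`, `localize`, and `find_drift` are hypothetical names invented here, and nothing below reflects CrePal.ai's actual interface or internals.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class BrandSpec:
    # Hypothetical brand parameters that every variant should share.
    palette: tuple
    typeface: str
    tone: str

@dataclass(frozen=True)
class Variant:
    brand: BrandSpec
    market: str
    language: str
    aspect_ratio: str

def localize(base, market, language, aspect_ratio):
    # Change only the market-specific fields; the brand spec is inherited as-is.
    return replace(base, market=market, language=language, aspect_ratio=aspect_ratio)

def find_drift(base, variants):
    # A variant has drifted if its brand parameters differ from the base.
    return [v for v in variants if v.brand != base.brand]

base = Variant(
    brand=BrandSpec(("#0A84FF", "#FFFFFF"), "Inter", "warm, direct"),
    market="US", language="en", aspect_ratio="16:9",
)
variants = [
    localize(base, "DE", "de", "9:16"),
    localize(base, "JP", "ja", "1:1"),
]

# A hand-edited version that swapped the typeface, for comparison.
off_brand = replace(base, brand=replace(base.brand, typeface="Arial"), market="BR", language="pt")

print(find_drift(base, variants))
print(len(find_drift(base, variants + [off_brand])))
```

Because each variant is derived from the base rather than built from scratch, a consistency check reduces to comparing one field instead of reviewing every asset by eye.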

Start Scaling Video Localization Today

If your team is spending more time managing production logistics than shaping creative strategy, the workflow is the bottleneck—not your people.

CrePal.ai’s AI Director Agent is purpose-built for this exact challenge. One brief becomes a full multi-scene video. One video becomes dozens of localized, platform-ready variants. One conversation replaces weeks of back-and-forth across tools and vendors.

Get Started with CrePal.ai and see how your next campaign can go from one market to twelve without adding a single production headcount.

FAQ

Q: Can CrePal.ai generate localized video versions in multiple languages? A: Yes. CrePal’s AI Director Agent can produce video variants with different languages, voiceovers, text overlays, and cultural adaptations—all from a single creative brief. You describe the changes you need in natural language, and the agent generates each version automatically.

Q: How does CrePal maintain brand consistency across many video versions? A: CrePal holds your brand parameters—character design, color palette, visual style, tone—as persistent context. Every version generated inherits these settings by default, ensuring consistency across markets and formats without manual policing.

Q: Is CrePal suitable for agencies managing multiple client campaigns? A: Absolutely. Agencies can use CrePal to scale video production across clients and markets, reducing the coordination overhead of managing freelancers, editors, and voiceover vendors for each version. The conversational editing interface lets teams iterate rapidly without specialized software skills.

Q: What AI models does CrePal use for video localization? A: CrePal integrates multiple top-tier AI models including Google Veo, Pika Labs, Runway, Seedance, and Suno. The AI Director Agent intelligently selects the optimal model for each component—video generation, lip sync, music, voiceover—to deliver the best possible output for every variant.

Q: How fast can CrePal produce localized video variants compared to traditional workflows? A: Traditional localization workflows typically require days to weeks per variant due to manual editing, vendor coordination, and review cycles. With CrePal, teams can generate and iterate on multiple localized versions in minutes to hours, compressing production timelines dramatically.