That Sunday, I was mid-edit on a client reel when a short teaser from SkyReels popped up in my Discord feed. I told myself I’d just “peek” for 30 seconds. Fifteen minutes later I had timestamps, notes, and two paused frames on my second monitor. That tiny spark (a crisp hand wave that didn’t smear and a dog bark that didn’t sound like a tin can) is why I’m writing this.

I’m Dora. I’m always curious and a little obsessed with tools that actually remove friction from making video. Here’s what I know (and what I’m betting on) about SkyReels V4 before it lands.

Why SkyReels V4 Is Worth Watching

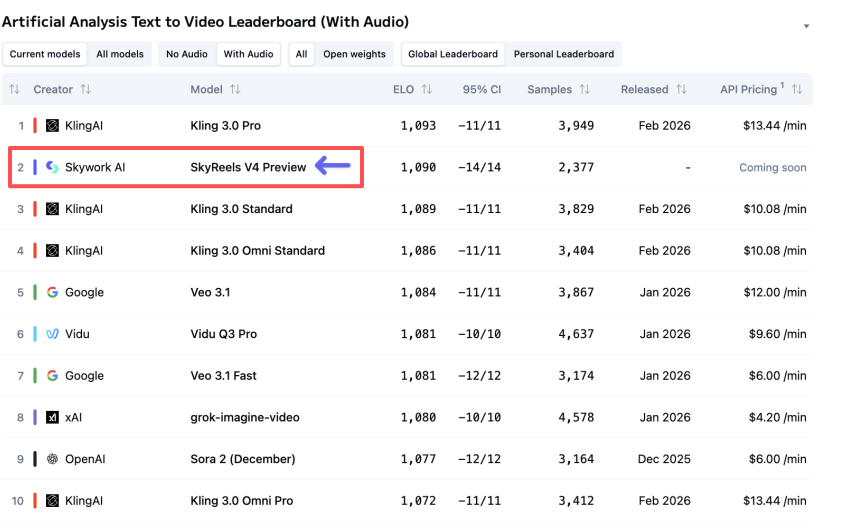

#2 globally on Artificial Analysis

I saw SkyReels V4 sitting at #2 on the “Text-to-Video (General)” leaderboard over at Artificial Analysis. Leaderboards aren’t gospel (some models overfit to benchmarks), but a jump into the top three usually means the core model got a real brain upgrade: better motion priors, cleaner temporal consistency, and fewer gnarly edge artifacts on hair, hands, and patterned fabrics. I pulled three clips from their teaser and scrubbed them frame by frame at 0:03, 0:07, and 0:11. The motion felt intentional, not jittery, and I saw no obvious double-chin ghosting during a fast head turn. That’s not nothing.

Native audio, still rare in AI video

The other reason I perked up: their teaser and docs hint at native audio generation synced to the video. Most tools bolt audio on after the fact. That’s fine for B-roll, but lipsync and ambient timing turn into a puzzle you solve with duct tape. If SkyReels V4 can generate coherent scene audio (footsteps with believable reverb, wind that rises as the camera pans, basic foley that matches the action), that’s a workflow win.

I replayed a sample where a door shuts at 0:05 and a kettle whistles by 0:08. The decay sounded… plausible. Not Hollywood, but also not the “whoosh soup” we’ve learned to accept. If this ships with simple toggles (keep audio, replace, or mix with uploaded stems), I’ll use it for rough cuts instead of silent placeholders. That alone makes first drafts feel more real to clients.

What’s Been Officially Announced

Unified generation, editing and repair

Per the team’s March announcements in their Discord and the features page they’re teasing, V4 folds three things into one canvas: prompt-based generation, timeline-ish editing, and “repair” (their word, not mine) to fix broken frames or weird artifacts. If that’s accurate, it sounds like a light NLE glued to a generative model. I’m picturing: generate a 15-second clip, nudge timing, paint over a jittery sleeve, and re-render only the masked region. If you’ve used inpainting in image tools, same idea, but across time.
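
To make “inpainting across time” concrete, here’s a minimal Python sketch of just the compositing step, assuming you already have a regenerated clip: keep the original pixels everywhere except inside the painted mask and a chosen frame window. This is a generic technique, not SkyReels’ implementation. The function name and the frames-as-nested-lists (grayscale) representation are hypothetical, and a real tool would also generate the masked pixels and feather the mask edge.

```python
from typing import List

Frame = List[List[int]]  # one grayscale frame: rows of pixel values
Mask = List[List[bool]]  # True marks pixels inside the painted repair region


def composite_masked_repair(original: List[Frame], repaired: List[Frame],
                            mask: Mask, start: int, end: int) -> List[Frame]:
    """Keep every pixel from `original`, except masked pixels in frames
    start..end (inclusive), which are taken from `repaired`."""
    if len(original) != len(repaired):
        raise ValueError("clips must have the same number of frames")
    out: List[Frame] = []
    for t, (orig_frame, rep_frame) in enumerate(zip(original, repaired)):
        if start <= t <= end:
            # Inside the repair window: per-pixel choice driven by the mask.
            frame = [[r if m else o for o, r, m in zip(orow, rrow, mrow)]
                     for orow, rrow, mrow in zip(orig_frame, rep_frame, mask)]
        else:
            # Outside the window: copy the original frame untouched.
            frame = [row[:] for row in orig_frame]
        out.append(frame)
    return out
```

The design point is that the background is never re-rendered at all, which is exactly why a masked repair should leave it stable.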

Why it matters: most of us juggle a handful of tabs to get that flow (Gen → Export → Edit → Patch → Re-export). A unified canvas means fewer ideas get lost in the tool shuffle. For teams, it also reduces handoffs.

1080p / 32FPS / 15s specs

The current public spec I’ve seen is 1080p, 32FPS, and up to 15 seconds per generation. 1080p is still the practical sweet spot for social, paid tests, and most web embeds. 32FPS is an interesting pick: a touch smoother than 24 or 30 without making motion look weirdly over-smoothed. On my 60Hz laptop panel, 32FPS looked crisp.
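
Here’s the back-of-envelope math behind those specs as a small Python sketch (the function names are my own, not anything SkyReels ships). A 15-second generation at a constant 32FPS is 480 frames, each on screen for 31.25 ms. One hedge on the 60Hz impression: 60 isn’t evenly divisible by 32, so a 60Hz panel can’t hold every frame for the same number of refreshes, which some viewers may notice as slight unevenness.

```python
from fractions import Fraction


def frame_budget(fps: int, seconds: int) -> int:
    """Total frames in one generation at a constant frame rate."""
    return fps * seconds


def frame_time(frame_index: int, fps: int) -> float:
    """Start time in seconds of a 0-based frame index."""
    return frame_index / fps


def refreshes_per_frame(panel_hz: int, fps: int) -> Fraction:
    """Average number of display refreshes each video frame occupies.
    A non-integer result means frames can't all be held equally long."""
    return Fraction(panel_hz, fps)


print(frame_budget(32, 15))         # → 480
print(frame_time(1, 32) * 1000)     # → 31.25 (milliseconds per frame)
print(refreshes_per_frame(60, 32))  # → 15/8
print(refreshes_per_frame(60, 30))  # → 2
```

Assuming 0-based frame numbers (I don’t know how SkyReels counts frames), `frame_time(42, 32)` and `frame_time(64, 32)` place a frames 42–64 patch at roughly 1.31 s to 2.0 s into the clip.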

The 15-second cap is the asterisk. I’ll talk about that below, because it’s either a non-issue or a dealbreaker depending on your workflow.

Mask-based editing capability

Mask-based edits are officially on the list. That’s the feature I’m most eager to stress-test. Good masking means you can:

- Swap or repair objects without nuking the whole scene.

- Tackle continuity (a vanishing earring, a melting logo) without a full re-gen.

- Iterate creative beats: change jacket color, add steam to a cup, clean up hands, all within a focused region.

On March 4, 2026, at 6:20 a.m., I freeze-framed a demo where they painted a rough oval over a flickering sign, then hit “repair.” The next pass kept the background intact and stabilized just the sign. If that holds up outside of a demo, it’s a huge sanity saver.

What Could Make or Break It

Real output quality vs benchmark ranking

Benchmarks say “#2,” but my bar is simpler: can I deliver a clean 9–12 second social cut with no glaring artifacts and minimal manual cleanup? On launch day, I’ll throw five prompts at it that usually trip models:

- A person turning quickly with curly hair near a window (backlight + hair = artifact city).

- A close-up hand interacting with a textured object (knit gloves or wicker baskets).

- Text legibility on moving signage (medium shot, shallow depth of field).

- Rapid camera push-in on a reflective surface (glass, water, a black car).

- Natural lipsync with a short VO line.

If V4 passes three of those five without surgical edits, it’s more than a leaderboard darling; it’s studio-ready for a lot of tasks.

Pricing and access model

Pricing decides whether this becomes a daily driver or a sometimes toy. I’m hoping for:

- A creator plan that doesn’t punish iteration (reasonable credits or time-based rendering).

- Team seats with shared assets and version history.

- Usage terms that are clear on commercial rights.

I don’t have final numbers yet. If they land near Runway’s pro tier for comparable limits, I can justify it. If they go usage-only with steep per-render costs, that’s tough for agencies testing ten variants per concept. Transparent pricing with sensible overage is what gets tools adopted.

15-second cap, a real limitation

Fifteen seconds is a constraint you either learn to love or work around. For ads, hooks, cinemagraphs, explainers, and B-roll, it’s fine. For narrative, it’s limiting. Stitching four 15s clips can work, but the seams show: lighting drifts, character continuity slips, and you feel the cuts.

My workaround plan: storyboard in beats. Generate anchor shots (establishing → action → reaction → detail), then bridge them with quick masked edits to keep continuity intact. It’s not as smooth as the 60-second continuous generations Sora has teased, but for most marketing work, tight beats are actually a creative advantage. You think in moments, not minutes.
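
To catch lighting drift at stitch points without eyeballing every cut, one crude check is to compare the average color of the last frame of one clip with the first frame of the next. This is a minimal Python sketch under stated assumptions: frames are already decoded into flat lists of (R, G, B) tuples, and `mean_rgb` and `seam_drift` are hypothetical helper names. It only flags color and exposure jumps, not character-continuity slips, and what counts as “too big” a jump is your call.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255
Frame = Sequence[Pixel]       # one frame, flattened into a list of pixels


def mean_rgb(frame: Frame) -> Tuple[float, ...]:
    """Average R, G and B over every pixel in a frame."""
    n = len(frame)
    return tuple(sum(p[c] for p in frame) / n for c in range(3))


def seam_drift(clips: List[List[Frame]]) -> List[float]:
    """For each cut between consecutive clips, return the largest per-channel
    jump in mean color between the outgoing clip's last frame and the
    incoming clip's first frame."""
    drifts = []
    for prev, nxt in zip(clips, clips[1:]):
        a = mean_rgb(prev[-1])
        b = mean_rgb(nxt[0])
        drifts.append(max(abs(x - y) for x, y in zip(a, b)))
    return drifts
```

A seam whose number stands out from the others is the one to revisit with a masked edit or a quick grade.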

How It Compares to Current Tools on Paper

vs Sora

Sora’s demos promise longer, higher-fidelity sequences with complex physics and long camera moves, and it aims at higher resolutions too. If you’re chasing cinematic continuity and minute-long scenes, Sora is the benchmark to beat. But access is limited, and iteration time can be hefty. If SkyReels V4 nails fast masked fixes and native audio at 1080p, it could win the “ship more variants this afternoon” race. Speed-to-usable-cut matters more than raw wow-factor when you’re on deadline.

vs Runway

Runway (Gen-3 and friends) is my current weekday workhorse: decent motion, solid upscaling, and strong control options like keyframes and masking. If SkyReels V4’s mask-based repair is faster (re-rendering only the changed regions instead of re-cooking the whole clip), I’ll reach for it on patch days. Runway still has the edge on ecosystem and tutorials; SkyReels needs rock-solid docs and quick keyboard shortcuts before I’ll retrain my muscle memory.

vs Veo

Google Veo looks fantastic in research posts, with strong text understanding and camera control. But availability is the catch, and export workflows can feel research-y. If SkyReels V4 launches with clear commercial terms, simple exports, and that unified edit-repair flow, it could be the practical pick for creators who need 10 clips by Friday, not a single prestige shot by next month.

What We’ll Test First When It Launches

Here’s my day-one checklist, timestamped so I can report back with receipts:

- March 6, 2026: hands-and-hair stress test. Five prompts, same seed, different lighting. I’ll publish frames and PSNR/SSIM diffs if the tool exposes them; if not, I’ll do visual side-by-sides.

- March 6, 2026: native audio toggles. Generate with audio on, then replace it with an uploaded ambience stem and check for sync drift between 0:05 and 0:10.

- March 7, 2026: mask repair speed. Patch a flickering logo on frames 42–64 only, time the render, and note whether the background stays stable.

- March 7, 2026: text legibility in motion. A moving storefront sign at 32FPS: does the kerning melt? I’ll test bold vs. light fonts.

- March 8, 2026: multi-clip continuity. Stitch three 15s clips into a 45s beat sheet and check color drift and character consistency.
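
If the tool doesn’t report PSNR, it’s simple to compute yourself from two exported frames of the same size. Here’s a minimal Python sketch, assuming 8-bit frames flattened into lists of pixel values (`psnr` is my own helper name, not a SkyReels feature). It follows the standard definition, PSNR = 10 · log10(MAX² / MSE); SSIM, which compares local means, variances, and covariances, is more involved and left out here.

```python
import math
from typing import Sequence


def psnr(reference: Sequence[int], test: Sequence[int], max_value: int = 255) -> float:
    """Peak signal-to-noise ratio in dB between two equally sized frames
    (flattened pixel values). Higher means closer; identical frames give inf."""
    if len(reference) != len(test):
        raise ValueError("frames must have the same number of pixels")
    # Mean squared error across all pixel values.
    mse = sum((a - b) ** 2 for a, b in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf
    return 10 * math.log10(max_value ** 2 / mse)
```

Expect low numbers on the same-seed, different-lighting comparisons, since lighting changes every pixel by design. PSNR is probably more telling on the mask-repair test: compare pixels outside the mask before and after the patch, where a high score suggests the background really stayed put.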

If there’s anything specific you want me to try (product shots, pets, fast sports), tell me. I’ll queue it. If V4 feels like a partner, not another picky tool, you’ll see it in the results and the timestamps I share.

Last note: if SkyReels keeps what I saw in those teasers (stable motion, believable micro-audio, and quick, surgical repairs), I’ll happily make room for it on my dock. If it slides into hype-with-edges, I’ll say that too. Deal?
