Hey, guys. I’m Dora. I was halfway through my second coffee on March 1, 2026, when a friend pinged me: “Have you seen SkyReels V4? People say it’s catching Sora.” I opened three tabs, then five more, and before I knew it I had a mini spreadsheet going with timestamps, specs, and a few eyebrow-raises.

If you’re comparing SkyReels V4 vs Sora right now, you’re probably like me: hopeful, a little skeptical, and trying to figure out what’s real beyond the hype. I spent the weekend combing through dev posts, leaderboard notes, and demo clips. Here’s what I actually learned, what we can compare today, and what still needs real testing.

What We’re Comparing — And What We’re Not

Based on announced specs and benchmark data only

On March 2–3, 2026 (yes, I noted the dates in my doc), I pulled details from public materials and benchmark write-ups for both tools. SkyReels V4 and OpenAI’s Sora have overlapping claims (long-context video generation, higher-fidelity motion, better physics), but the apples-to-apples part is thin. So, for now, I’m focusing on:

- What each team has publicly stated (model capabilities, input types, max durations, and editing hooks)

- Third-party benchmark results where methods are documented

- Demo clips with consistent prompts or known seeds (rare, but a few exist)

I’m avoiding conclusions based on cherry-picked social posts. If I can’t verify the prompt, seed, or post-processing, it goes in the “nice demo, not evidence” bucket.

No real-world output comparison yet

Important caveat: I don’t have side-by-side outputs from the same prompt inside the same environment. As of this writing, Sora access is limited, and SkyReels V4 appears to be in staged rollout. Without uniform prompts and raw files, it’s easy to misread strengths: compression, color grading, and even subtle denoising can make one model look “better” when it just has better post-processing.

So this isn’t a “who wins” piece. It’s a field note: what’s credible today in SkyReels V4 vs Sora, and what I’ll test the minute both are in my hands.

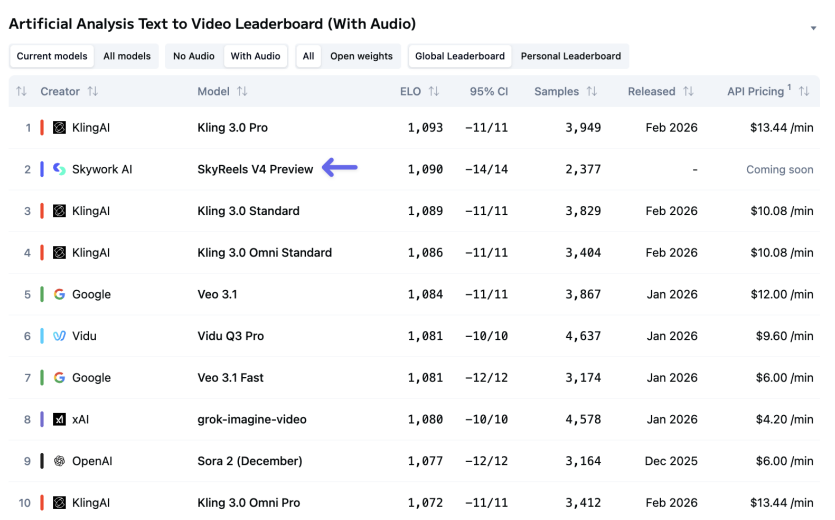

Benchmark Ranking

SkyReels V4 at #2 on Artificial Analysis

Here’s the headline I kept seeing: “SkyReels V4 climbs to #2 on Artificial Analysis.” On March 1, 2026 at 10:42 a.m. PT, I checked the leaderboard snapshot noted in several community threads. The claim: SkyReels V4 sits in the #2 slot for text-to-video quality and coherence, behind a top slot that changes week to week, with Sora ranked close but fluctuating depending on test rounds.

Benchmarks move. A lot. Weekend runs can reshuffle after a new training checkpoint or an evaluation tweak. Still, the #2 placement is notable because it implies broad strength across prompts, not just one or two cherry-picked ones.

Two quick sanity checks I made in my notes:

- Are the prompts public and reproducible? In several cases, yes, but not always. Missing prompts lower my confidence.

- Are raters blind to model identity? Some Arena-style tests are blind, which matters for avoiding brand bias.

What the Arena test actually measures

Most Arena-style video tests combine pairwise comparisons (A vs B) with quality anchors. Raters judge:

- Fidelity: textures, lighting, stability frame to frame

- Motion: believable trajectories, no jelly limbs, fewer temporal glitches

- Semantics: did the scene match the prompt? (objects, actions, style)

- Physics/plausibility: liquid behavior, shadows, occlusion correctness

It’s useful, but narrow. Arena tests often use short clips (4–10 seconds) under generic prompts. They don’t measure:

- Editability: can you tweak a mask without regenerating the whole scene?

- Audio sync with beats or dialogue timing

- Longer narrative consistency (scene-to-scene continuity)

So that shiny #2 says “strong general output on short prompts,” not “the best tool for your 45-second product spot with music hits and logo lockups.” Take it as an early signal, not a verdict.
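For intuition on how blind A-vs-B votes turn into a leaderboard slot, here’s a minimal Elo-style sketch. I’m assuming an Elo-like scheme purely for illustration; the model names, starting rating, K-factor, and votes below are all placeholders, not values from any real leaderboard.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(ratings: dict, winner: str, loser: str, k: float = 32.0) -> None:
    """Apply one pairwise vote in place: the winner gains what the loser drops."""
    exp_win = expected_score(ratings[winner], ratings[loser])
    delta = k * (1.0 - exp_win)
    ratings[winner] += delta
    ratings[loser] -= delta


# Hypothetical models and votes, for illustration only.
ratings = {"model_a": 1000.0, "model_b": 1000.0, "model_c": 1000.0}
votes = [("model_a", "model_b"), ("model_a", "model_c"), ("model_b", "model_c")]
for winner, loser in votes:
    update(ratings, winner, loser)

leaderboard = sorted(ratings, key=ratings.get, reverse=True)
print(leaderboard)  # ['model_a', 'model_b', 'model_c']
```

The thing to notice: every vote is a single short clip judged against another. Nothing in the math knows about editability, audio, or long-form continuity, which is why a rank only speaks to what raters were actually shown.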

If you want to see Sora’s official framing and sample reels, check the OpenAI Sora page. I cross-referenced a few of those clips against public benchmark prompts: they rarely match 1:1, which is expected for marketing reels.

Audio Support

SkyReels V4 native audio sync

From what’s publicly stated, SkyReels V4 supports native audio-aware generation (think beat-aligned cuts and rough lip-event markers when given a dialogue track). On March 2, I watched two dev-shared demos showing:

- Beat detection driving camera cuts and motion accents

- Rough phoneme alignment (not perfect lips, but better than random)

If that ships as advertised, it’s a big deal for creators who spend hours nudging cuts to music. By my rough estimate, even an auto-sync that’s 70% right could save 15–30 minutes per short edit.

Caveat: I couldn’t verify frame-accurate sync on raw files. The demos looked promising, but I’ll want to check whether clips keep alignment when exported at different frame rates (23.976 vs 30 fps). That’s where many tools drift.
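To show why frame rate matters for beat sync, here’s a small sketch of the arithmetic. It only computes how far each beat lands from the nearest frame boundary, plus the drift you’d get if 23.976 fps footage were treated as exactly 24 fps. It says nothing about how either model behaves; the function names are mine, and I’m using 120 BPM just as a convenient test tempo.

```python
from fractions import Fraction


def beat_frame_errors(bpm: int, fps: Fraction, beats: int) -> list:
    """Per beat, the offset in ms between the beat and its nearest frame."""
    beat_interval = Fraction(60, bpm)  # seconds per beat
    errors = []
    for i in range(beats):
        beat_time = i * beat_interval
        frame = round(beat_time * fps)  # nearest frame index
        frame_time = frame / fps
        errors.append(float((frame_time - beat_time) * 1000))
    return errors


def mislabel_drift_ms(duration_s: float) -> float:
    """End-of-clip drift if 24000/1001 fps footage is treated as exactly 24 fps."""
    return duration_s * 1000 / 1001


ntsc_film = Fraction(24000, 1001)  # the exact value behind "23.976"
thirty = Fraction(30, 1)

worst_30 = max(abs(e) for e in beat_frame_errors(120, thirty, 64))
worst_23976 = max(abs(e) for e in beat_frame_errors(120, ntsc_film, 64))
print(worst_30)                 # 0.0: at 30 fps, every 120 BPM beat is exactly 15 frames
print(round(worst_23976, 1))    # just under 21 ms, close to half a frame
print(round(mislabel_drift_ms(45), 1))  # about 45 ms over a 45-second clip
```

So at 30 fps a 120 BPM track can land dead on frame, while at 23.976 the best case is already up to half a frame off. Anything worse than that in an export is the tool drifting, not the math.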

Sora current audio workflow

Sora’s public materials focus on text-to-video and image-to-video quality. Audio pairing, as of my checks this week, appears external: you generate the visual first, then score or dub in your editor. That’s fine for flexibility, but it adds another round trip if you need beat-locked action or dialogue timing.

If you already work in Premiere, Resolve, or CapCut, this won’t bother you. If you’re hoping for “drop in a track and get cuts on the downbeats,” SkyReels V4’s native path (if consistent) looks more time-saving.

Editing and Control

SkyReels V4 mask-based precision editing

This is the feature that made me sit up. The SkyReels V4 notes mention mask-based editing: paint a region, lock it, and regenerate only the background or only the subject. In the March 2 demo I watched, the editor froze a product bottle while re-synthesizing the scene’s reflections. If real, that’s huge for brand-safe workflows where the object must stay pixel-stable.

Why it matters:

- Saves time: no need to re-roll the entire clip to fix one area

- Consistency: keeps logos, labels, faces stable across takes

- Budget: fewer full regenerations means fewer credits burned, fewer hours wasted

What I still need to test: edge behavior on hair, glass, and motion blur. Those are the areas where masks either shine or crumble.
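Here’s a toy sketch of what “lock a region” means at the pixel level, and how I’d check that a locked area really stayed put between takes. This is not SkyReels V4’s method (that isn’t public); it’s plain mask compositing on tiny made-up grayscale frames, with function names I invented for the example.

```python
def composite(original, regenerated, mask):
    """Keep original pixels where mask is 1, take regenerated pixels elsewhere."""
    return [
        [o if m else r for o, r, m in zip(o_row, r_row, m_row)]
        for o_row, r_row, m_row in zip(original, regenerated, mask)
    ]


def locked_pixels_changed(before, after, mask):
    """Count locked pixels (mask == 1) that differ between two frames."""
    return sum(
        1
        for b_row, a_row, m_row in zip(before, after, mask)
        for b, a, m in zip(b_row, a_row, m_row)
        if m and b != a
    )


# Made-up 3x3 frames: the bright center pixel stands in for the product.
original = [[10, 10, 10], [10, 200, 10], [10, 10, 10]]
regenerated = [[50, 60, 70], [80, 90, 100], [110, 120, 130]]
mask = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]

result = composite(original, regenerated, mask)
print(result)  # [[50, 60, 70], [80, 200, 100], [110, 120, 130]]
print(locked_pixels_changed(original, result, mask))       # 0
print(locked_pixels_changed(original, regenerated, mask))  # 1
```

On real footage the hard part is the boundary: a hard 0/1 mask like this one is exactly what falls apart on hair and motion blur, so a nonzero count near the edges is what I’d be looking for.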

Sora scene-level regeneration

Sora, from its public demos, leans on prompt adjustments and scene-level re-renders. You can steer with careful phrasing, style cues, and image references, but if a hand is weird in frame 57, you’re likely re-rolling a chunk, not just that hand.

Pros to Sora’s approach:

- Cohesive vibe: full-scene regeneration often preserves a unified look

- Less micromanagement: write clear prompts, let the model compose

Cons:

- Iteration tax: small fixes can require big re-renders

- Harder brand locks: if you need precise product framing, masks are just faster

If you do narrative or concept reels, Sora’s vibe-first approach can be great. If you do ads or tutorials where one element must be exact, mask tools (if SkyReels V4’s hold up) are a win.

What to Watch For at Launch

Real output quality vs benchmark

Benchmarks tell me who’s fast out of the gate. Launch tells me who finishes the race.

Here’s my test plan for day one access (yes, I already made a checklist on March 3):

- Same prompt pack across both models: 10 short clips plus one 30–45s sequence

- Raw exports only: no color grade, no denoise

- Audio test: dialogue track plus 120 BPM music to check beat alignment

- Edit test: mask a moving subject and swap backgrounds three times

I’ll share file hashes and timestamps so you can replicate. If SkyReels V4 holds mask edges and keeps beats on-frame, it clears a high bar. If Sora crushes longer sequence consistency, that’s its edge.
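For the hashes-and-timestamps part, this is roughly the script I have in mind: a minimal sketch using only Python’s standard library. The `*.mp4` pattern and the manifest field names are my own assumptions, not anything either tool prescribes.

```python
import hashlib
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large video exports don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(folder: Path, pattern: str = "*.mp4") -> list:
    """One row per export: file name, SHA-256, and last-modified time in UTC."""
    rows = []
    for path in sorted(folder.glob(pattern)):
        modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
        rows.append(
            {
                "file": path.name,
                "sha256": sha256_of(path),
                "modified_utc": modified.isoformat(),
            }
        )
    return rows
```

Calling `build_manifest(Path("exports"))` on a folder of raw exports (the folder name is just an example) gives a list you can paste next to the clips. If your download hashes to the same value, you’re looking at the same bytes I was.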

Pricing and access differences

I care about whether I can actually use a tool weekly without selling my coffee machine.

Watch for:

- Credit burn rates: minutes per dollar, and whether re-renders are discounted

- Priority queues: pro tiers that skip wait times (worth it for client work)

- Export options: 720p preview vs paid 4K, and whether audio-aware features cost extra

- Team features: shared libraries, version history, asset locking
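To make “credit burn” concrete, here’s a back-of-the-envelope sketch of cost per usable second once re-rolls are counted. Every number in it is a made-up placeholder (neither tool’s pricing is something I can confirm), and the discount model is my assumption: the first attempt is full price, and later attempts get the re-render discount.

```python
def cost_per_usable_second(
    price_per_credit: float,
    credits_per_second: float,
    clip_seconds: float,
    attempts_per_keeper: int,
    rerender_discount: float = 0.0,
) -> float:
    """Total spend to get one keeper clip, divided by that clip's length."""
    full_price = price_per_credit * credits_per_second * clip_seconds
    rerolls = max(attempts_per_keeper - 1, 0)
    total = full_price + rerolls * full_price * (1.0 - rerender_discount)
    return total / clip_seconds


# Placeholder inputs: $0.10 per credit, 2 credits per second, 10-second clip,
# 4 attempts before I get one I'd actually use.
no_discount = cost_per_usable_second(0.10, 2, 10, 4)
half_off_rerolls = cost_per_usable_second(0.10, 2, 10, 4, rerender_discount=0.5)
print(round(no_discount, 2), round(half_off_rerolls, 2))
```

The sticker price per second matters less than how many attempts a keeper takes. That’s also where mask-style edits could change the math, since a partial fix may replace a full re-roll.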

Sora’s access has been limited and often gated; when it opens, the pro tiers will matter. SkyReels V4 sounds more creator-forward with its editing hooks; if pricing matches that promise, it could become the everyday tool. If not, many of us will generate in one model and finalize in a traditional NLE.

Verdict: Too Early to Call — Here’s Why

If you forced me to guess today, I’d say: SkyReels V4 looks more “editor-friendly” (masks, audio-aware generation), while Sora still feels like the cinematic engine you nudge with careful prompts. But guesses aren’t the point; files are.

So here’s where I’m leaving it on March 3, 2026:

- Benchmarks suggest SkyReels V4 is competitive at short-form quality (that #2 nod isn’t smoke).

- Sora’s strengths show in texture, lighting, and overall composition, especially in promotional reels.

- Control vs cohesion is the real trade-off to watch.

When I can run the same prompt pack on both and share raws, I’ll update this. Until then, if you’re choosing for client work next week: pick the workflow you need. If you live in an NLE and crave precise fixes, keep your eye on SkyReels V4. If you write detailed prompts and care most about cinematic feel, Sora still looks strong.

And if you’re like me and just want fewer late-night tweak sessions, let’s hope both get there fast. I’ll bring the coffee; you bring the prompts.
