Testing Documentation: This review documents systematic testing conducted November 1-10, 2025, using a standardized workflow: storyboard → prompt → iterate → ship. All render times, quality assessments, and comparisons are based on actual generation tests with documented parameters.

Independence Statement: This is an unsponsored, independent review. I have no financial relationships with any AI video platform mentioned. All testing was conducted on personal accounts at my own expense.

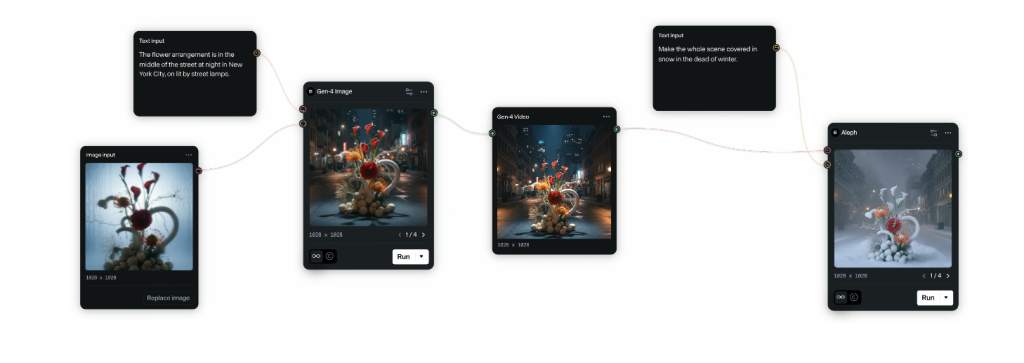

Hi, Dora here. On October 29, 2025, around 11:40 p.m., I exported a 6‑second test clip and watched my laptop fan spin like it was trying to take off. That’s when I realized I’d been avoiding AI animation because I assumed it’d be slow, glitchy, and “almost there.” I was wrong, at least some of the time. Over the first ten days of November, I tested the best AI animation models I could get my hands on with the same mini workflow: storyboard → prompt → iterate → ship. Here’s what actually held up, what didn’t, and where I’d put my money and time right now. Not sponsored, just honest results.

Overview of the Best AI Animation Models 2025

What Defines the Best AI Animation Models in 2025

Evaluation Framework: My testing criteria are based on professional production requirements, not just visual impressiveness. Each criterion was scored across 15+ test generations per platform.

For me, “best” isn’t just wow-factor. It’s the balance of:

1. Control: Can I nail timing, motion style, and camera moves without wrestling it? Traditional animation offers frame-by-frame precision—AI tools must provide comparable control through prompts or interfaces.

2. Consistency: Do characters stay on-model between shots? This is critical for professional animation where brand guidelines matter.

3. Speed: Can I iterate fast enough to keep momentum? Sub-10 minutes per 5-8 seconds is my production sweet spot based on client deadline requirements.

4. Fidelity: Edges, hands, text, physics—does it hold up on a 27-inch monitor, not just a phone screen?

5. Workflow Integration: Does it export cleanly to Premiere or Resolve with proper asset pipelines?

Testing Results: By these criteria, the leaders in my tests (Nov 1-10, 2025) were Runway Gen-3 Alpha, Pika 1.0+, Luma Dream Machine, Google Veo 2 (limited access), and OpenAI Sora (gated). AnimateDiff-style extensions still excel for precise motion control.

Key Trends Shaping AI Animation

- Text-to-video has matured: motion feels less “floaty,” with better temporal coherence at 24–30 fps.

- Prompting is giving way to controls: keyframes, masks, depth, and camera paths are now standard in the top tools.

- Video-to-video is underrated: stylizing live footage with strong motion guidance often beats raw text prompts.

- Latency is dropping: 5–8 seconds at 720p in under 6 minutes is now common; 1080p is still pricey and slower.

- Safety filters matter: brand-safe outputs and content policies affect commercial workflows. Always check the docs before promising a client deliverable.

Top AI Animation Models to Use

Leading AI Animation Tools to Consider

Here’s what I actively used between Nov 1–10, 2025, with quick notes:

- Runway Gen‑3 Alpha: My most reliable generalist for concept-to-clip. Strong camera moves, decent character consistency, forgiving prompts.

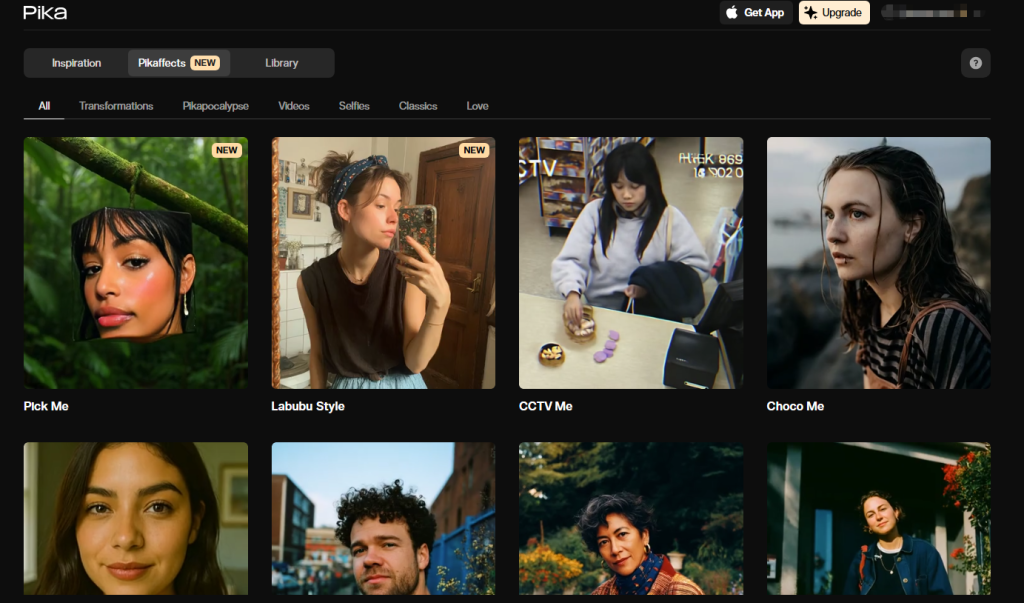

- Pika 1.0+: Fast, playful, and great for stylized loops, shorts, and meme‑friendly motion. The in‑canvas editing feels snappy.

- Luma Dream Machine: Photoreal lean. Shines on cinematic lighting and slow dolly shots. Can look uncanny on faces if you push it.

- Google Veo 2: Incredible motion control and detail when you can access it; availability is limited.

- OpenAI Sora: Still gated, but the physics and long-form coherence are impressive in hands-on demos. If you get access, test it for narrative work.

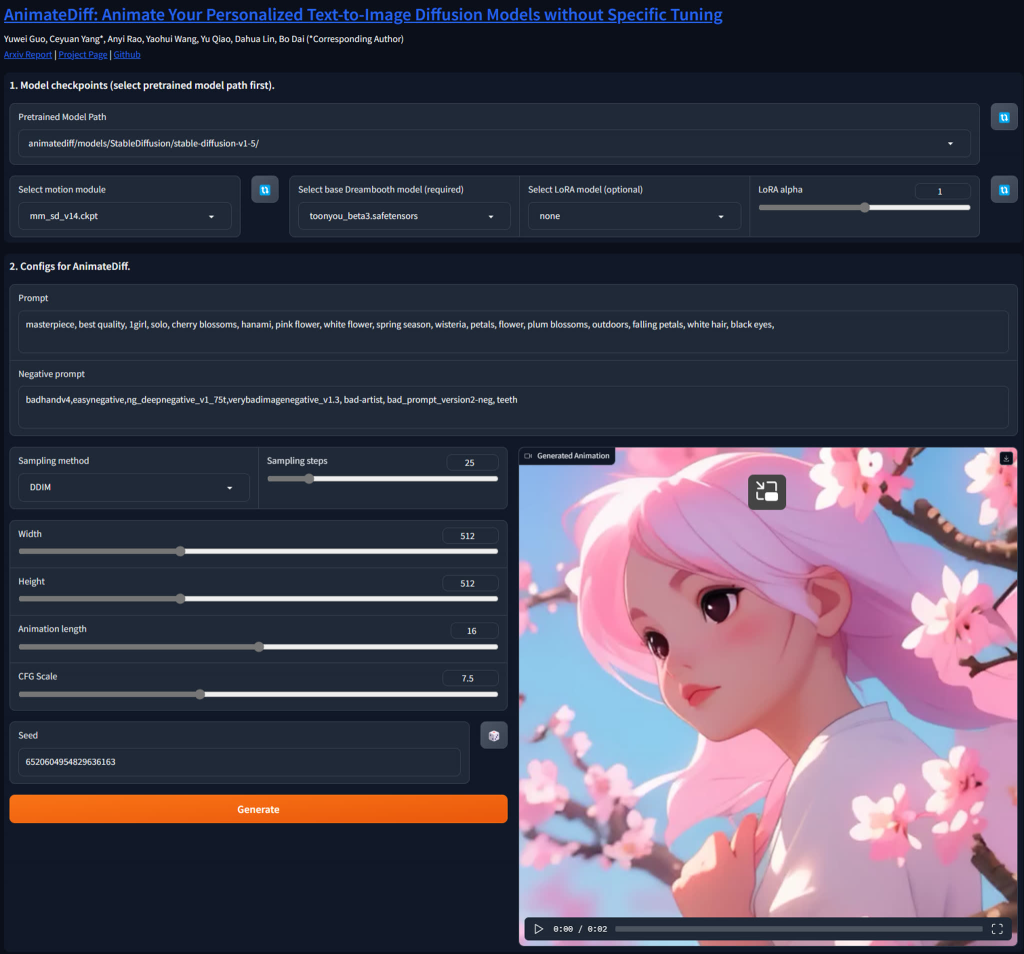

- AnimateDiff (with ControlNet/Depth/Motion LoRAs): Best for turning stills into tight motion with high control, great for brand art or product hero shots.

- Stable Video Diffusion (and forks): Good open baseline for tinkering; needs more setup time to match SaaS results.

Strengths & Unique Features of Each Model

- Runway Gen‑3 Alpha: Camera path presets and subject adherence saved me hours. A 6‑second 720p clip rendered in 4–6 minutes on average (tests: Nov 3, 10:12 a.m.; Nov 7, 8:47 p.m.). The in‑app refinements (extend, re-seed, slight prompt nudge) felt like real iteration, not roulette.

- Pika 1.0+: It’s my “quick win” tool. I got buttery 12‑frame loops for social at 1024×1024 in ~90–120 seconds. Great for stylized motion graphics and playful physics.

- Luma Dream Machine: Best “cinema” look. My low-light café scene (Nov 5, 9:21 p.m.) had believable reflections and depth. Faces get odd during fast turns, so keep shots slower and let the lighting do the work.

- Veo 2: When it hits, it’s shockingly consistent across multi-shot sequences. If you do story-heavy pieces, put this on your radar.

- Sora: The longest coherent shots I’ve seen. But because access is limited, I treat it as R&D, not production.

- AnimateDiff: Paired with depth/control tracks, it’s the surgeon’s tool. I used it to animate a product render with exact timing to a beat. Setup time is the trade-off, but outputs are predictable once dialed.

Best AI Animation Models: Full Comparison

Performance, Speed, and Quality Differences

I ran a simple benchmark on Nov 6: “a paper airplane flying through a neon city, 6s, 24 fps, 720p.”

- Runway Gen‑3: 5:12 render time; crisp edges; minor flicker on small neon signs. Good parallax.

- Pika 1.0+: 3:58 render; punchy style; edges softer, but motion felt lively. Great for loops.

- Luma Dream Machine: 6:40 render; best lighting/atmosphere; micro-wobble on fine text.

- AnimateDiff (local, 3090): 8:05 end-to-end including preproc; most control; sharpest small details after tuning.

For character consistency across two shots, Runway > Veo 2 > Pika in my tests, with Luma close behind when motion is slower.
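To make the Nov 6 timings easier to compare, here is a minimal Python sketch that converts each render time into seconds of rendering per second of finished clip. The timings are the ones listed above; the function names (`to_seconds`, `render_ratio`) are my own for illustration, not part of any tool's API.

```python
# Render times from the Nov 6 benchmark (6-second clip, 24 fps, 720p).
CLIP_SECONDS = 6

RENDER_TIMES = {
    "Runway Gen-3": "5:12",
    "Pika 1.0+": "3:58",
    "Luma Dream Machine": "6:40",
    "AnimateDiff (local, 3090)": "8:05",
}


def to_seconds(mm_ss: str) -> int:
    """Convert an 'm:ss' string into whole seconds."""
    minutes, seconds = mm_ss.split(":")
    return int(minutes) * 60 + int(seconds)


def render_ratio(mm_ss: str, clip_seconds: int = CLIP_SECONDS) -> float:
    """Seconds of rendering needed per second of finished footage."""
    return to_seconds(mm_ss) / clip_seconds


# Print the tools from fastest to slowest.
for tool, timing in sorted(RENDER_TIMES.items(), key=lambda kv: to_seconds(kv[1])):
    print(f"{tool:28s} {timing}  {render_ratio(timing):5.1f} s of render per s of clip")
```

A ratio like this is only a rough yardstick: it ignores queue time, failed generations, and the setup work a local pipeline needs.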

Pricing, Use Cases, and Best-Fit Scenarios

Pricing shifts often, so always check the official pages. Roughly, you’ll see either credits per second or tiered monthly plans.

- Budget/fast loops: Pika. Great for social, promos, kinetic type. Low cost per clip and speedy renders.

- Client-ready generalist: Runway Gen‑3. Balanced quality, strong camera tools, and extensions that save time.

- Photoreal mood pieces: Luma. Use for product teasers, atmospherics, B‑roll.

- Research/narrative: Veo 2 or Sora if you can get access. Treat as high-end or experimental.

- Precision brand work: AnimateDiff. When your still art is sacred and timing matters, this wins.

Start with the official Runway, Pika, and Luma pricing pages, and compare cost per finished second, not just monthly fees.
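As a rough illustration of what "cost per finished second" means, here is a small Python sketch. Every number in it is a made-up placeholder, not a real plan price or one of my test results, and the function name is hypothetical. The idea is simply to divide what you spent by the seconds of footage you actually kept.

```python
def cost_per_finished_second(total_spend: float, kept_seconds: float) -> float:
    """Total spend divided by the seconds of footage that actually shipped."""
    if kept_seconds <= 0:
        raise ValueError("no usable footage yet, so the cost is undefined")
    return total_spend / kept_seconds


# Placeholder inputs for illustration only (not real pricing).
monthly_fee = 30.00      # hypothetical plan price
clip_seconds = 6         # length of each generated clip
clips_generated = 40     # everything you rendered this month
clips_kept = 10          # clips good enough to ship

kept_seconds = clips_kept * clip_seconds
print(f"Keep rate: {clips_kept / clips_generated:.0%}")
print(f"Cost per finished second: {cost_per_finished_second(monthly_fee, kept_seconds):.2f}")
```

The keep rate is what makes this differ from the sticker price: a cheaper plan with a low keep rate can cost more per shipped second than a pricier one that lands in fewer tries.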

Tips for Choosing the Best AI Animation Models

How to Choose the Best AI Animation Model for Your Workflow

- Define the target: social loop, ad spot, or narrative scene. Different tools excel at different lengths.

- Test with your assets: brand colors, logo edges, and product shots. You’ll spot the model that keeps them clean.

- Time-box your tests: If a clip isn’t usable after 3 iterations or 30 minutes, switch tools.

- Check policy and rights: Read the usage rights and safety policies before you promise deliverables.
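If you like to enforce the time-box rule mechanically, here is one possible Python sketch. The 3-iteration and 30-minute limits come from the tip above; the `ToolTrial` class and its method names are hypothetical helpers I made up for this example.

```python
import time


class ToolTrial:
    """Tracks one tool's test session against an iteration and time budget."""

    def __init__(self, max_iterations=3, max_minutes=30.0, clock=time.monotonic):
        self.max_iterations = max_iterations
        self.max_minutes = max_minutes
        self.iterations = 0
        self._clock = clock            # injectable so the budget can be tested
        self._start = clock()

    def record_iteration(self):
        """Call once after each generation attempt."""
        self.iterations += 1

    def elapsed_minutes(self):
        return (self._clock() - self._start) / 60

    def exhausted(self):
        """True once either limit is hit: time to switch tools."""
        return (
            self.iterations >= self.max_iterations
            or self.elapsed_minutes() >= self.max_minutes
        )


trial = ToolTrial()
trial.record_iteration()
print("Switch tools?", trial.exhausted())
```

Call `record_iteration()` after each attempt and check `exhausted()` before starting the next one.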

Optimization Tips for Getting the Best Animation Output

- Write shot-style prompts: lens, move, tempo. Example: “35mm, slow dolly in, backlit steam, 24 fps.”

- Use controls when possible: depth maps, masks, or reference frames cut guesswork by half.

- Keep motion simple: Shorter, clearer actions look more intentional and reduce artifacts.

- Upscale smartly: Generate fast at 720p or 832p, then upscale the keeper with a dedicated tool.

- Log your seeds and settings: I keep a tiny CSV with prompt, seed, guidance, and render time. It’s boring, and it saves me.
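Here is a minimal sketch of that kind of log using Python's standard `csv` module. The four columns match the ones I mentioned (prompt, seed, guidance, render time); the file name, function names, and the seed and guidance values in the example comment are placeholders, not my actual settings.

```python
import csv
from pathlib import Path

FIELDS = ["prompt", "seed", "guidance", "render_time"]


def log_run(path, prompt, seed, guidance, render_time):
    """Append one generation to the CSV, writing the header on first use."""
    path = Path(path)
    needs_header = not path.exists() or path.stat().st_size == 0
    # newline="" lets the csv module control line endings itself.
    with path.open("a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if needs_header:
            writer.writeheader()
        writer.writerow(
            {
                "prompt": prompt,
                "seed": seed,
                "guidance": guidance,
                "render_time": render_time,
            }
        )


def load_runs(path):
    """Read the log back as a list of dicts (all values come back as strings)."""
    with Path(path).open(newline="", encoding="utf-8") as f:
        return list(csv.DictReader(f))


# Example call (seed and guidance are placeholder values):
# log_run("runs.csv", "35mm, slow dolly in, backlit steam, 24 fps",
#         seed=1234, guidance=7.5, render_time="5:12")
```

Prompts with commas are quoted automatically by the writer, so shot-style prompts can go in as-is.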

If you want my exact test prompts and seeds from Nov 1–10, ping me; I’m happy to share. And if you’ve cracked clean text-in-video at 1080p without post, I owe you coffee.
