I’m Dora — I was editing a short clip at 1 AM when a message popped into my group chat. Someone had shared an AI-generated video that stopped the whole conversation cold. Not because it was technically impressive — because nobody could quite tell how it was made, where the content came from, or where the ethical and legal lines actually were.

That moment stuck with me. “NSFW video AI” is a phrase everyone searches, but few define cleanly. If you create content for a living — or you’re trying to understand the technology responsibly — you deserve clear, fact-based information.

Here’s what I’ve pieced together from months of testing, legal tracking, technical documentation, and open-source community resources.

What Is NSFW Video AI?

NSFW stands for “Not Safe For Work.” In the AI context, it refers to content that mainstream platforms restrict — typically nudity, sexual themes, explicit imagery, or other material flagged by content policies. NSFW video AI describes systems that generate or transform video into such content.

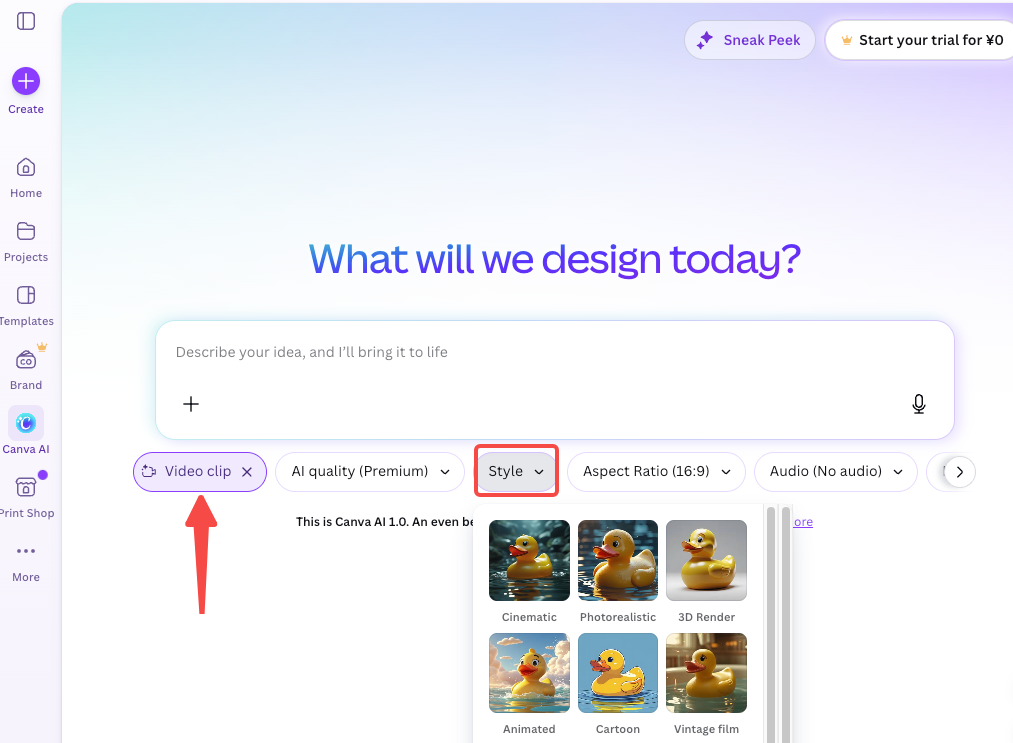

The label is imprecise. Some use it for any uncensored AI video tool; others reserve it for explicit adult material. Platforms apply broad filters covering everything from suggestive poses to full nudity. The underlying technology — primarily diffusion-based models — mirrors mainstream tools like Runway, Kling, and OpenAI’s Sora. The primary difference lies in safety filters and fine-tuning (or their absence).

Text-to-video vs image-to-video vs video-to-video

The three main input modes behave very differently, and knowing which one you’re dealing with changes everything about what to expect.

Text-to-video takes a written prompt and generates a clip from scratch. You describe what you want — character, setting, action, camera movement — and the model builds it. This is the hardest mode to get consistent results from and the most dependent on training data quality.

Image-to-video starts with a still image and animates it. You provide a reference photo, optionally add a motion prompt, and the model generates movement around that visual anchor. This produces more predictable results because the subject is already established.

Video-to-video takes existing footage and transforms it — changing style, adding effects, or swapping visual elements. This is where face-swap and deepfake-adjacent use cases live, and where consent and legal exposure get most serious.

Each mode has its own quality ceiling and its own set of ethical landmines.

How NSFW Video AI Works

Source image, motion prompt, and generation model

Here’s the basic flow for the most common workflow — image-to-video generation:

- You provide a source image, either AI-generated or uploaded directly.

- You add a motion prompt describing what should move and how.

- The model generates a sequence of frames following the source and the motion instruction.

- Those frames are stitched into a short clip, usually 3–6 seconds.

The core component is the generation model. As MIT Technology Review’s explainer on AI video generation breaks it down: the model starts with random noise and iteratively cleans it up into a coherent image — the same technique used for static image generation, scaled across a sequence of frames. When paired with a language model that understands your prompt, the denoising process is steered toward what you described.

Why video is harder than image generation

I had to genuinely unlearn some assumptions here. Generating a single good image is one problem. Generating 30 coherent frames that flow together smoothly is a different problem entirely.

The key challenge is temporal consistency. Early video AI systems generated frames independently — which produced flickering, morphing subjects, and physics that made no sense. Modern systems solve this with temporal attention layers: neural network components that let the model evaluate all frames together rather than one at a time. The model learns that a person’s hand position at frame 10 constrains what that hand can look like at frame 11, that lighting stays consistent within a scene, that objects maintain identity across time. The 2024 academic survey of video diffusion models on arXiv covers this architectural evolution in detail if you want to go deeper.

“Better” does not mean “solved,” though. Hands are still a mess. Hair moving through space still breaks. Two people interacting in the same frame? Often a disaster. These aren’t NSFW-specific failures — they’re fundamental limitations of the current model generation. Explicit content just makes them more visible, because expected anatomy is specific and any deviation is more obvious.

Common Creator Workflows

Image-to-video clips

The most practical entry point. Generate or select a still image, run it through an image-to-video model, get a short animated clip. The source image handles most of the visual work — the model just needs to animate it convincingly.

Results vary wildly. Subtle motion prompts (“slight breeze, slow breathing”) tend to work better than dramatic ones. The less movement you demand, the more coherent the output usually is. I’ve spent entire evenings iterating on 4-second clips — small prompt tweaks can produce completely different, and sometimes completely broken, outputs.

Short concept videos

Some creators string multiple image-to-video clips together to build something resembling a scene. Each clip is generated separately, then edited together in post. It’s slow and consistency between clips is a real challenge — characters can look slightly different from one generation to the next. Maintaining a coherent “look” across multiple generations is one of the harder unsolved problems in this workflow.

Self-hosted experiments

This is where things get technically demanding — and where most uncensored experimentation actually happens. Tools like AnimateDiff, whose official implementation is published on GitHub and works within ComfyUI, let you run video generation locally with full control over model weights and content filters. No platform terms. No cloud moderation. Just your GPU and whatever you decide to put in.

The tradeoff is real. You need a capable NVIDIA card — realistically 12GB VRAM minimum for 512×512 16-frame outputs — and you need to know your way around Python environments, model files, and node-based workflow graphs. It’s not a one-click experience. But for creators who want control over the full generation pipeline, it’s the most flexible option available.

What Mainstream Tools Usually Restrict

Every major commercial AI video platform — Runway, Kling, Pika, Sora, Hailuo — uses content policies and inference-time filters to block explicit or adult content. These aren’t buried in terms of service. They’re enforced at the model level through classifiers that detect and reject prompts or flag outputs that cross policy lines.

What exactly gets blocked varies. Most platforms draw a hard line at nudity and sexual content. Some block violence. Some flag suggestive content even when it’s not explicitly sexual. The definitions are inconsistent, which creates friction for creators working in adjacent spaces — swimwear, figurative art, romance narratives — who aren’t generating anything explicitly adult.

The commercial rationale is liability. Platform-hosted tools are accountable for what they produce in a way that self-hosted open-source models aren’t. That accountability shapes everything from training data decisions to inference-time filtering.

Limits, Risks, and Compliance Boundaries

Laws have evolved rapidly. Always consult current legal advice for your jurisdiction.

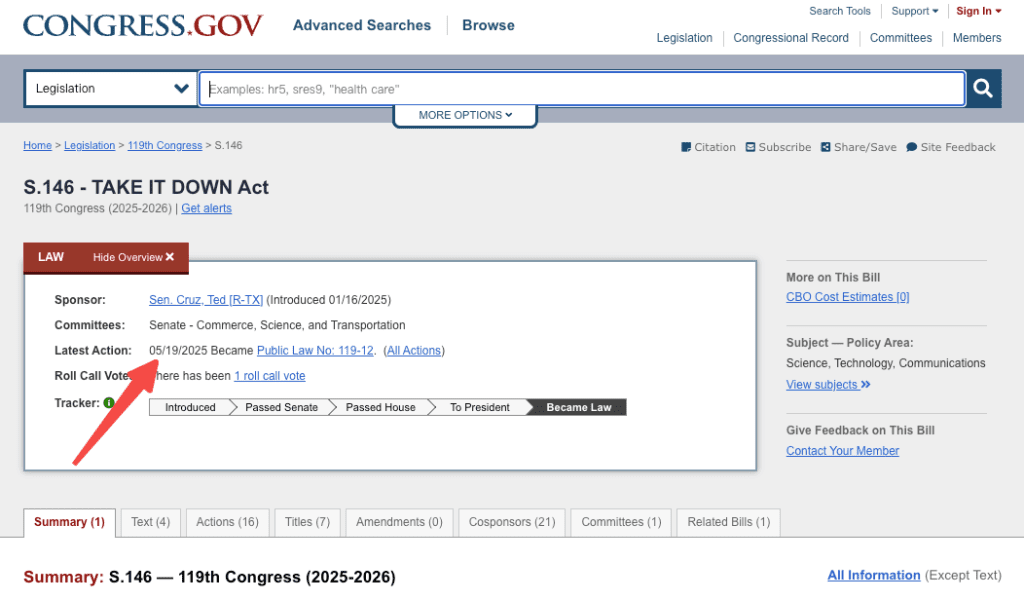

United States: The TAKE IT DOWN Act (S.146) was signed into law on May 19, 2025. It criminalizes the knowing publication of non-consensual intimate imagery, including AI-generated deepfakes (“digital forgeries”). Platforms must implement notice-and-takedown processes (effective one year after signing). Penalties include fines and imprisonment (up to three years in cases involving minors). Many states have additional or complementary laws.

European Union: The EU AI Act entered into force on August 1, 2024. Transparency obligations for AI-generated content (including deepfakes) apply progressively, with broader rules for high-risk systems and synthetic media labeling requirements phasing in through 2026–2027. Significant fines apply for serious violations.

Australia: The Criminal Code Amendment (Deepfake Sexual Material) Act 2024 commenced on September 3, 2024. It prohibits non-consensual sharing of explicit material (including AI-generated), with penalties up to 6–7 years imprisonment. New South Wales strengthened rules effective February 16, 2026, explicitly covering AI-generated intimate images.

Canada: Bill C-63 (Online Harms Act) did not pass in its prior form. Existing Criminal Code provisions (e.g., Section 162.1 on non-consensual intimate images) may apply in some cases; provincial civil remedies exist in places like Quebec, Manitoba, and British Columbia. Legislation in this area continues to develop.

Core ethical and legal red lines (universal advice):

- No non-consensual use of real people’s likenesses.

- Absolutely no content involving minors (real or fictional depictions in sexual contexts).

- Respect platform terms and applicable obscenity laws.

Quality issues (anatomy, consistency) still matter for professional work, even in permitted creative contexts.

FAQ

Can mainstream AI video tools generate NSFW content?

No. Commercial platforms like Runway, Kling, Pika, and Sora enforce content restrictions at the model level. Prompt engineering won’t reliably bypass these — modern systems assess semantic context and intent, not just individual keywords.

Is local NSFW video generation free?

Open-source models like those built on Stable Diffusion with AnimateDiff can be run locally without subscription costs, but “free” understates the actual investment: capable GPU hardware, technical setup time, and the patience to debug a workflow that doesn’t always cooperate. It’s free the way building your own furniture is free.

What are the biggest quality issues?

The consistent pain points across every workflow I’ve tested: anatomical inconsistency across frames — especially hands and faces — motion artifacts when subjects are too close to the camera, visual drift in longer clips where characters gradually morph into something different, and lighting that doesn’t stay coherent through a scene. These are model-level constraints that no prompt refinement fully fixes. You work around them, not through them.

One Last Thing

NSFW video AI sits at the intersection of rapidly advancing technology, platform policies, and tightening legal frameworks. Start with technical fundamentals, map your use case against applicable rules, and prioritize ethics and consent. The creative potential is real, but so are the responsibilities. Legal landscapes (especially U.S. states and EU enforcement) continue developing—cross-reference official sources for the latest.

This revision strengthens verifiability with key citations, corrects/aligns dates and details to verified records as of May 2026, removes unsubstantiated claims, and maintains a neutral, informative tone suitable for sensitive topics.

Previous Posts: