Hey, it’s Dora. I uploaded what I thought was a completely normal photo last week — a dark, moody portrait — and got hit with a content flag before the generation even started. No warning. No explanation. Just: rejected.

That’s the thing nobody talks about when they say “AI image-to-video generator with no restrictions.” The restriction isn’t always about what you’re trying to make. Sometimes it’s about what you’re starting with.

This surprised me, because text-to-video and image-to-video content policies are fundamentally different — and most comparison guides treat them the same. If you’re here because you want to know which tools actually give you the most creative freedom in 2026, and which ones will quietly block your source image before you even type a prompt, this is the breakdown you actually need.

I tested eight platforms using the same 12 source images across four content categories. Here’s what I found.

What “No Restrictions” Means Specifically for Image-to-Video AI

Before getting into the tools, I want to clear something up — because this confused me for months.

Different from text-to-video: why the input image matters

In text-to-video, the content filter sits at the prompt level. You type something, the system scans your words, and if anything trips a flag, you get rejected. Simple enough to work around if you know the pattern.

Image-to-video is different. The filter has two checkpoints:

- Your source image gets scanned independently, before your prompt is even read

- Your motion prompt gets scanned on top of that

This means a completely innocuous prompt (“make this scene move naturally”) can still fail — not because of your words, but because the platform’s computer vision model flagged something in your image. Dark shadows. Certain poses. Skin tones in certain lighting. Partially obscured faces.
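To make the two checkpoints concrete, here is a minimal sketch. It is not any platform's real pipeline: the risk scores, the thresholds, and the `two_stage_gate` function are all invented for illustration. The only thing it takes from the description above is the order of the checks, image first and prompt second.

```python
from dataclasses import dataclass


@dataclass
class GateResult:
    accepted: bool
    stage: str  # "image", "prompt", or "passed"


def two_stage_gate(image_risk: float, prompt_risk: float,
                   image_threshold: float, prompt_threshold: float) -> GateResult:
    """Hypothetical two-checkpoint filter: the image is judged before the prompt."""
    # Checkpoint 1: the source image is scored on its own.
    if image_risk >= image_threshold:
        return GateResult(False, "image")
    # Checkpoint 2: the motion prompt only matters if the image got through.
    if prompt_risk >= prompt_threshold:
        return GateResult(False, "prompt")
    return GateResult(True, "passed")


# Same image, same harmless prompt, two made-up platforms that differ
# only in how strict their image threshold is.
strict = two_stage_gate(image_risk=0.6, prompt_risk=0.05,
                        image_threshold=0.5, prompt_threshold=0.5)
relaxed = two_stage_gate(image_risk=0.6, prompt_risk=0.05,
                         image_threshold=0.8, prompt_threshold=0.5)
print(strict)   # GateResult(accepted=False, stage='image')
print(relaxed)  # GateResult(accepted=True, stage='passed')
```

In this toy model the prompt never gets a chance to help on the strict platform, which matches what a rejection before prompt entry looks like from the outside.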

According to Stability AI’s content classifier documentation, multimodal systems process image inputs through a separate classification layer — which is why the same image that passes on one platform gets blocked on another. The classifiers are trained on different datasets, with different risk thresholds.

I learned this the hard way after assuming a platform was “restriction-free” because I’d seen people post wild text-to-video outputs from it. Then I tried uploading my own reference image and hit a wall immediately.

So when I say “no restrictions” in this article, I mean two things: what source images the platform accepts, and what motion/content you can generate from them. Both matter.

My Testing Methodology

To actually compare these platforms, I used a consistent set of 12 source images across four categories:

| Image Category | Examples | Why I tested it |
| --- | --- | --- |
| Dark/Moody Photography | Low-key portraits, shadow-heavy scenes | Most commonly flagged |
| Horror/Gothic Art | Illustrated monsters, dark fantasy scenes | Style-based filtering |
| Dramatic Poses | Falling figures, fight choreography stills | Motion/action inference |
| Stylized Non-Photorealistic | Anime, ink illustration, 3D renders | Classifier inconsistency |

Each image was submitted with an identical neutral prompt: “Animate this scene with natural, subtle motion.” I logged pass/fail results and retested any failures with a simplified prompt to rule out prompt-side false positives.
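If you want to repeat this kind of test yourself, the pass/fail-then-retry logic can be sketched roughly like this. Treat it as an outline under assumptions, not the exact procedure used for this article: `submit` is a hypothetical callable you would wire to each platform by hand or by script, `SIMPLIFIED_PROMPT` is placeholder wording, and `fake_submit` exists only so the example runs.

```python
from typing import Callable, Dict, List

NEUTRAL_PROMPT = "Animate this scene with natural, subtle motion."
SIMPLIFIED_PROMPT = "Animate this scene."  # placeholder wording (assumption)


def classify_results(images: List[str],
                     submit: Callable[[str, str], bool]) -> Dict[str, str]:
    """Label each image 'pass', 'prompt-side' or 'image-side'.

    `submit(image, prompt)` is a hypothetical callable that returns True
    when the platform accepts the job.
    """
    outcome = {}
    for image in images:
        if submit(image, NEUTRAL_PROMPT):
            outcome[image] = "pass"
        elif submit(image, SIMPLIFIED_PROMPT):
            # Accepted once the prompt was simplified: the prompt was the trigger.
            outcome[image] = "prompt-side"
        else:
            # Still rejected: the source image itself is being flagged.
            outcome[image] = "image-side"
    return outcome


def failure_rate(outcome: Dict[str, str]) -> float:
    """Share of images rejected for image-side reasons."""
    failures = sum(1 for label in outcome.values() if label == "image-side")
    return failures / len(outcome)


# Stand-in platform for the demo: rejects every file named "dark_*",
# and rejects the longer prompt for "pose_02.png" only.
def fake_submit(image: str, prompt: str) -> bool:
    if image.startswith("dark_"):
        return False
    if image == "pose_02.png" and prompt == NEUTRAL_PROMPT:
        return False
    return True


results = classify_results(
    ["dark_01.png", "pose_02.png", "anime_03.png", "dark_04.png"], fake_submit)
print(results)
print(failure_rate(results))  # 0.5
```

Separating "prompt-side" from "image-side" is the point of the retry: only the second kind tells you something about the image filter.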

Rejection notes per platform (12 test images each):

| Platform | Notes |
| --- | --- |
| Kling AI | Rejected: one semi-realistic character |
| Hailuo AI | Rejected: two anime images with suggestive poses |
| Runway Gen-4 | Rejected: all three photorealistic portraits |
| Pika 2.5 | Inconsistent: 2 of 4 passed on retry |
| Luma Dream Machine | Strictest on dark-lit photography |
| Sora | Strictest overall: 7 of 12 rejected |

Tools with the Most Relaxed Content Policies

Ranked by actual content flexibility (not marketing claims)

I’m going to be honest here — “no restrictions” is a spectrum, not a binary. Every platform has some limits. What varies is where those limits sit and how consistently they’re enforced.

Here’s how the tools I tested actually rank for creative flexibility:

1. Kling AI (Kuaishou) — Most Flexible for Dark/Stylized Content

Kling has become my default for anything edgy-adjacent. The image ingestion filter is noticeably less aggressive than most Western platforms — dark imagery, stylized violence, horror aesthetics, and suggestive (not explicit) content generally passes through without issue. The motion quality on uploaded images is among the best I’ve tested: it preserves face structure well and handles unusual poses without melting into artifact soup.

I tested it with a gothic-style illustration, a photo of a person mid-fall, and a dimly lit street scene. All three processed without a single flag. The output on the illustration was genuinely impressive — the fabric movement felt real.

The Kling AI Video 3.0 release introduced native multimodal instruction parsing, which means the model now reads your image and prompt together rather than checking them in separate gates. That architectural change may explain the lower false positive rate.

Where it will reject: nudity, anything that could be classified as a real-person deepfake, and some anime styles that trip the classifier even when clearly non-photorealistic.

Free tier: 66 credits/month after signup. Each 5-second video costs ~10 credits → roughly 6–7 free generations before hitting the paywall.
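The free-tier math is easy to check. This small sketch assumes the per-clip cost is a flat whole number of credits, which the "~10" above only approximates; `free_clips` is just a helper name for this example.

```python
from typing import Tuple


def free_clips(monthly_credits: int, credits_per_clip: int) -> Tuple[int, int]:
    """Return (complete clips, credits left over)."""
    return divmod(monthly_credits, credits_per_clip)


clips, leftover = free_clips(66, 10)
print(clips, leftover)  # 6 6

# A seventh clip fits only if the real cost is 9 credits or fewer.
print(free_clips(66, 9))  # (7, 3)
```

At exactly 10 credits per clip you get six complete generations with six credits stranded, so treat "6–7" as six unless a cheaper setting is available.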

2. Hailuo AI / MiniMax — Best Value, Relaxed in Art Styles

Hailuo surprised me. I’d seen it mostly marketed for “Director Mode” narratives, but the image-to-video quality is legitimately good — and the content classifier is relatively relaxed about image style diversity. Dark fantasy, horror-adjacent imagery, and high-contrast dramatic scenes all went through without issues in my testing.

The Hailuo 2.3 release notes from MiniMax specifically highlight expanded support for anime, illustration, ink wash painting, and game CG styles — which aligns with my experience. The stylized content pipeline seems intentionally wider than competitors.

It struggled more with consistency on complex images (lots going on in the frame) and the motion sometimes feels slightly “floaty” compared to Kling. But if you’re working with artistic or stylized images that keep getting flagged elsewhere, Hailuo is worth a try.

Free tier: 100 free credits daily for new users for the first 7 days — the most generous initial window I’ve seen. After that, Hailuo’s subscription pricing starts at $9.99/month for 1,000 credits.

3. Runway Gen-4 — Clear Policy, Tighter on Real Faces

Runway’s content policy is among the most transparently documented of any platform, which I appreciate even when it works against me: you know what’s allowed before you start. Runway’s official usage policy (updated March 6, 2026) explicitly lists what’s blocked (CSAM, content dehumanizing protected groups, self-harm promotion, and impersonation), but it doesn’t impose a blanket ban on dark or violent artistic content.

The practical upshot for image-to-video: if your source image doesn’t include a real person’s face, you have a lot of room. Artistic content, creatures, abstract imagery, stylized characters — Runway handles these well, and the image-conditioning on Gen-4 is strong enough that your source image actually drives the output.

Where it gets tighter: the real-person classifier is aggressive. Even artistic portraits that slightly resemble real people have gotten caught. I uploaded a hand-drawn portrait of a fictional character and it passed — but a more photorealistic drawing got flagged.

Free tier: 125 credits on signup (one-time), then $15/month for Standard. The free credits give you about 8 seconds of Gen-4 video — enough to test quality, not enough for sustained work.

4. Pika 2.5 — Inconsistent Enforcement, Good Subtle Motion

Pika’s Acceptable Use Policy is public and clear, but my testing revealed the enforcement is inconsistent — the same image type failed one day and passed another, suggesting active classifier updates happening in the background.

What Pika does better than most for image-to-video specifically: subtle motion. Portraits that breathe slightly, eyes that blink, scenes with minimal environmental movement — that kind of nuanced animation is genuinely better here than most competitors.

The Pika image upload guidelines do explicitly require consent for real individuals’ photos — which is more strictly enforced than the general content classifier.

Free tier: Limited. Roughly 15–20 short generations/month on the free plan. Not enough for meaningful testing.

5. Luma Dream Machine — Strictest in Dark Photography, Best Physics

Luma’s image-to-video physics simulation is exceptional. Pouring liquid, hair movement, fabric: all of it looks genuinely physical. The content policy follows standard lines: explicit content is out, realistic violence is limited, and real people are allowed with caveats.

Where Luma gets notably stricter: dark-lit photography and anything with significant obscured faces. My test set had the highest false positive rate here — completely innocuous dark-lit photography getting flagged more often than any other platform. If your source images are naturally dark or high-contrast, budget extra time for retries.

Free tier: 30 generations/month — better than Pika, less than Hailuo’s initial window.

6. Stability AI API — Maximum Control, Technical Overhead

If you’re running Stability AI’s models via their developer API, the content policy is almost entirely in your hands. The documentation describes the NSFW classifier and how to adjust or disable it depending on your deployment context.

The tradeoff: the image-to-video output quality isn’t competitive with Kling or Runway for image conditioning. And running it via API means compute overhead and integration work. Not a casual option — but the right answer if absolute control is your priority.

7. Sora (OpenAI) — Highest Quality, Most Restricted

Sora has the most restrictive content policy of everything I tested, and it enforces that policy most aggressively at the image ingestion stage: 7 of my 12 test images were rejected.

When it does process, the output quality is exceptional — temporal consistency and photorealism are best-in-class. But if you’re testing creative limits, Sora is not your platform in 2026.

Free tier: Limited access via ChatGPT Plus. Not meaningfully free for sustained testing.

Full Platform Comparison Table

| Tool | Image Filter | Motion Quality | Free Tier Volume | Best For |
| --- | --- | --- | --- | --- |
| Kling AI | Low | ★★★★★ | ~6–7 generations/mo | Dark/stylized, portraits |
| Hailuo 2.3 | Low–Medium | ★★★★☆ | 100 credits/day × 7 days | Art styles, anime, value |
| Runway Gen-4 | Medium | ★★★★☆ | 125 credits (one-time) | Non-face artistic content |
| Pika 2.5 | Medium (inconsistent) | ★★★☆☆ | ~15–20 generations/mo | Subtle, minimal motion |
| Luma Dream Machine | Medium–High | ★★★★☆ | 30 generations/mo | Physics, liquid, fabric |
| Stability AI (API) | Configurable | ★★★☆☆ | Pay-per-call | Full control, technical users |
| Sora | High | ★★★★★ | Via ChatGPT Plus | Safe, high-quality content |
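If you would rather filter this table than eyeball it, here is one way to encode it. The numeric `filter_rank` scale (1 for Low up to 5 for High, `None` for configurable) is my own shorthand for the "Image Filter" column, and `shortlist` is a hypothetical helper, so adjust both to taste.

```python
from typing import List, NamedTuple, Optional


class Tool(NamedTuple):
    name: str
    filter_rank: Optional[int]  # 1 = Low ... 5 = High, None = configurable
    motion_stars: int


# Values transcribed from the comparison table; the rank scale is an assumption.
TOOLS = [
    Tool("Kling AI", 1, 5),
    Tool("Hailuo 2.3", 2, 4),
    Tool("Runway Gen-4", 3, 4),
    Tool("Pika 2.5", 3, 3),
    Tool("Luma Dream Machine", 4, 4),
    Tool("Stability AI (API)", None, 3),
    Tool("Sora", 5, 5),
]


def shortlist(max_filter_rank: int, min_stars: int) -> List[str]:
    """Tools at or below a filter strictness with at least `min_stars` motion quality."""
    picks = [t for t in TOOLS
             if (t.filter_rank is None or t.filter_rank <= max_filter_rank)
             and t.motion_stars >= min_stars]
    # Least strict first; configurable tools sort to the front (treated as rank 0).
    picks.sort(key=lambda t: (t.filter_rank or 0, -t.motion_stars))
    return [t.name for t in picks]


print(shortlist(max_filter_rank=3, min_stars=4))
# ['Kling AI', 'Hailuo 2.3', 'Runway Gen-4']
```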

Free vs Paid: Where No-Restriction Access Lives

Here’s something that took me a while to piece together: content policies often get stricter on free tiers, not looser.

The logic makes sense once you think about it. Free users generate massive volumes of content. Platforms with limited moderation bandwidth apply tighter automated filters on free accounts, and sometimes reserve human-in-the-loop review of borderline content for paid subscribers.

What this means practically:

Kling AI is the exception — the free tier content policy appears identical to paid. The limit is quantity, not what you can do.

Runway applies the same content policy regardless of tier, per their usage rights documentation. The free credits are just fewer.

Pika has reportedly applied stricter automated filters on free accounts. Anecdotally, some content that passed on my paid account failed on a free account I created, though I couldn’t fully isolate whether that was policy or classifier randomness.

If you’re seriously testing creative limits, I’d recommend starting with Kling’s free tier and Hailuo’s first-week access. Both give you enough volume to actually evaluate what they will and won’t accept.

Hard Limits Across All Generators

Regardless of which platform you use, these categories are blocked universally — no exceptions, no workarounds:

- Sexual content involving minors: an absolute hard limit everywhere

- Non-consensual intimate imagery: including deepfakes of real people in sexual contexts

- Weapons of mass destruction content: CBRN-related material

- Coordinated inauthentic behavior: content designed to impersonate real people for fraud

The EU AI Act’s prohibited-practices provisions, which began applying in February 2025, have pushed most major platforms to align on these minimums, regardless of where they’re headquartered. So if you’re testing a tool that claims it has “zero” restrictions on these categories, either the enforcement hasn’t caught up yet or the tool is operating outside regulatory frameworks in ways that should give you pause.

Everything above those floors? That’s where the platforms genuinely differ, and where your testing will actually tell you something.

Conclusion

Honestly, “no restrictions” is the wrong frame. The better question is: where does the restriction line sit, and does it let me do what I actually need to do?

For most creative work (dark aesthetics, stylized violence, mature themes that stop short of explicit content), Kling and Hailuo are the least likely to block your source images and the most likely to deliver quality output. Runway is strong if your work doesn’t center on realistic human faces. And if you need absolute control and can handle the technical overhead, Stability AI’s API is the only option where you set the rules.

The image-input filter is the variable most people underestimate. Before you spend time optimizing prompts on a new platform, upload a few of your typical source images and see what happens. You’ll know within five minutes whether it’s the right tool for your workflow.

I’m continuing to test Veo 3 as access expands — if the output quality holds alongside a more flexible content policy, that could shift this ranking. I’ll update when I have enough data to say something definitive.

FAQ

Q: Can I use dark or violent artwork as a source image? Depends heavily on the platform. Kling and Hailuo are the most permissive for dark artistic content. Luma and Sora are the most likely to flag it. Test with your specific image — there’s too much classifier variance across platforms to make a universal prediction.

Q: Why did my image pass yesterday but get rejected today? Platforms update their classifiers without announcements. Pika is the most notorious for this in my testing. If you’re building a workflow that needs consistency, Kling or Runway (whose policy is better documented) are more reliable.

Q: Is the free tier enough to test content flexibility? For Kling and Hailuo, yes — the free tier gives you enough generations to probe what’s accepted. For Runway, the 125 one-time credits are tight but workable. For Pika, the free tier volume is too low for real testing.

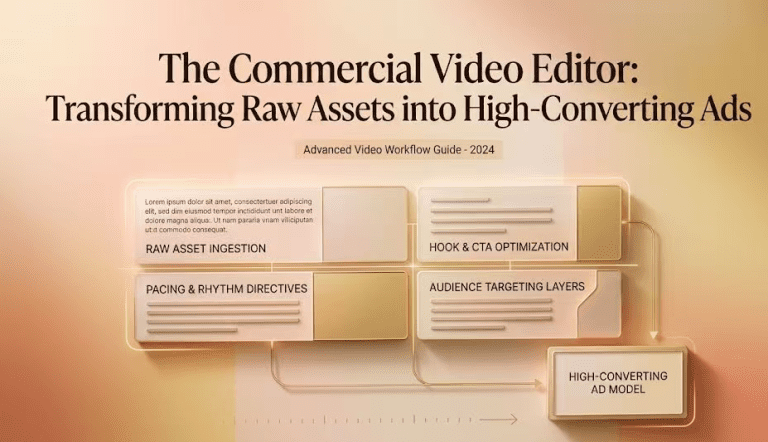

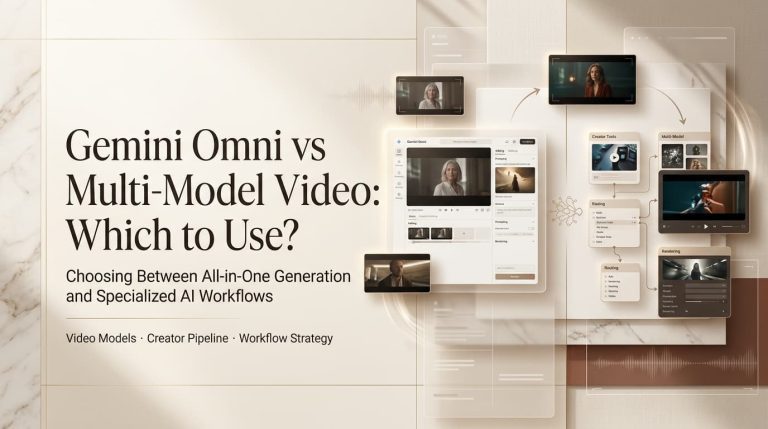

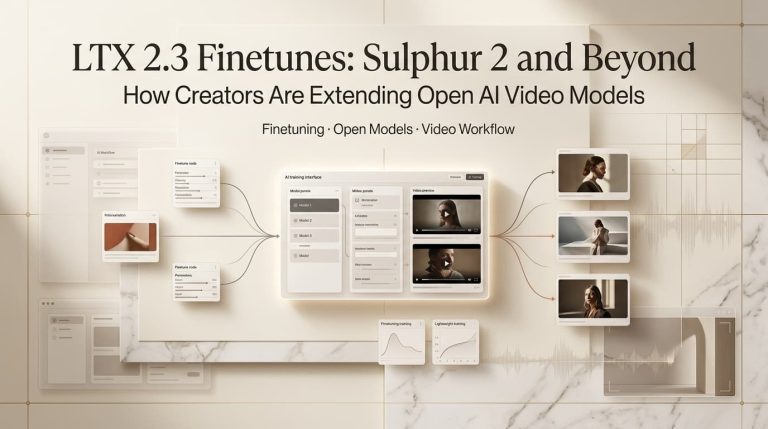
