I was staring at a half-finished product video on November 12, 2025, and my editor crashed again. Out of frustration, I opened a fresh tab and thought: fine, let’s see if these “AI video” models can actually carry an ad from start to finish without eating my week.

Here’s what I learned testing the best AI video models for ads across two real-world scenarios: a short product spot and a human-presenter style clip. If you make ads for a living (or you’re an indie creator who has to), this should save you time, money, and a few headaches.

Why Ad Videos Need the Best AI Video Models for Ads

Ad videos are fast, tight, and unforgiving. A stray frame, a melted hand, or weird text on packaging can break trust instantly. The best AI video models for ads need three things:

- Control: camera moves, timing, and brand-safe visuals.

- Consistency: the product should look the same across shots.

- Speed: quick iterations mean more testing and better ROAS.

I’m not chasing cinema here: I’m chasing conversions. If a model can give me clean 5–15 second spots at 1080p with reliable motion and zero uncanny valley, I’m interested. If not, it’s another tab I won’t open again.

Top AI Video Models for Ads Overview

• Runway for Ad Generation

I used Runway (Gen-3) on November 14, 2025, for a 10-second tabletop ad. Prompt-to-video with a product focus was surprisingly clean. Motion control with keyframes felt stable, and I liked the “director mode” vibe: basic but usable. In-scene text is still hit-or-miss, so I avoided it and added copy in post. The 1080p export looked crisp; the 4K upscale is fine for social but isn’t true 4K.

Good for: quick product shots, moody lighting tests, and fast iterations for A/B ad creatives.

Docs: https://research.runwayml.com/ and the Runway help center both cover the parameters well.

• Sora for Advertising Use

Sora’s results look like a dream, but as of November 2025, access is limited and not production-friendly for most teams. I got to try a short clip through a friend’s access on Nov 18. Quality? Wild. Long shots, consistent physics, and fewer artifacts in complex scenes. But… it’s not broadly available, and there’s no public pricing. If you can get in, it’s a powerhouse for concept ads and pitch decks. For weekly ad output at scale, the bottleneck is access, not capability.

• Kling for Commercial Videos

Kling (from Kuaishou) surprised me on Nov 20 with sharp, high-motion scenes; car-to-product transitions looked dynamic. It’s excellent at cinematic movement and dramatic lighting. But availability is still patchy outside China, and the interface feels geared more to flashy demos than to controlled ad beats. Great for hero shots or spectacle; trickier for tight brand control.

• Luma for Ad-Style Content

Luma’s Dream Machine (tested Nov 16) is my “steady workhorse.” It handles product close-ups with fewer glitches and offers decent camera control via prompts. Skin tones are better than in Gen-1-era tools. Some hand-geometry issues show up in close-up human shots, so I frame around them. Turnaround is fast, which matters when you’re drafting 6 variants for paid social.

Product Video Test

Test date: Nov 16–20, 2025

Prompt: “5-second ad, matte-black ceramic mug on a wooden table, soft morning light, slow 180° dolly, steam rising, reflective logo, minimal style.”

- Runway (Gen-3): Generated in ~2 minutes. The steam looked natural, the reflections were believable, and the dolly was smooth. Minor issue: logo warped on 2 of 6 runs. I fixed it by keeping the logo off the mug and comping it in post. Best run scored an 8/10 from me.

- Luma Dream Machine: ~1–3 minutes per run. Steam and light falloff looked great. The mug shape stayed consistent across cuts. Slight flicker on the tabletop grain if you look frame-by-frame. Best run: 8.5/10.

- Kling: ~2–5 minutes. Most cinematic motion by default, bolder camera path, dramatic highlights. But the mug sometimes drifted in size between frames, which breaks continuity in ads. Best run: 7.5/10.

- Sora (limited): Ultra-clean motion, consistent texture, and the steam behaved like real physics. It nailed the reflective logo once, which shocked me. If I had routine access, this would be my first pick for hero product shots. Best run: 9.5/10.

Takeaway: For daily ad production right now, Luma and Runway feel most reliable. Sora is the dream pick, when you can get access.

Human Presenter Test

Test date: Nov 18–21, 2025

Scenario: 12-second UGC-style ad. A woman at a desk holds the product, smiles, says one line, and points to on-screen text.

- Runway: Good framing and mood. Hands sometimes look “almost right” but not fully. Lip-sync is not something I trust here: I added VO in post and cut to B-roll at the syllables that didn’t match. Usable with editing.

- Luma: Best skin tones of the bunch in my tests. Mouth shapes improved vs summer 2024 models but still wobble on certain phonemes. For silent/talking-to-camera without close lip detail, it’s fine. With clear VO lip-sync? Not yet.

- Kling: Strong dramatic look, but the face softened when the head turned, like a beauty filter you didn’t ask for. Eye line drifted twice. I’d keep Kling for stylized cuts, not direct-to-camera.

- Sora (limited): Most consistent facial geometry and micro-expressions. If they crack open access and let us control timing beats, human-led ads will level up fast.

Reality check: For presenter-led ads where the mouth must match VO, I still reach for avatar tools (e.g., HeyGen, Synthesia) or real footage. These text-to-video models are close, but not quite there for precise lip-sync.

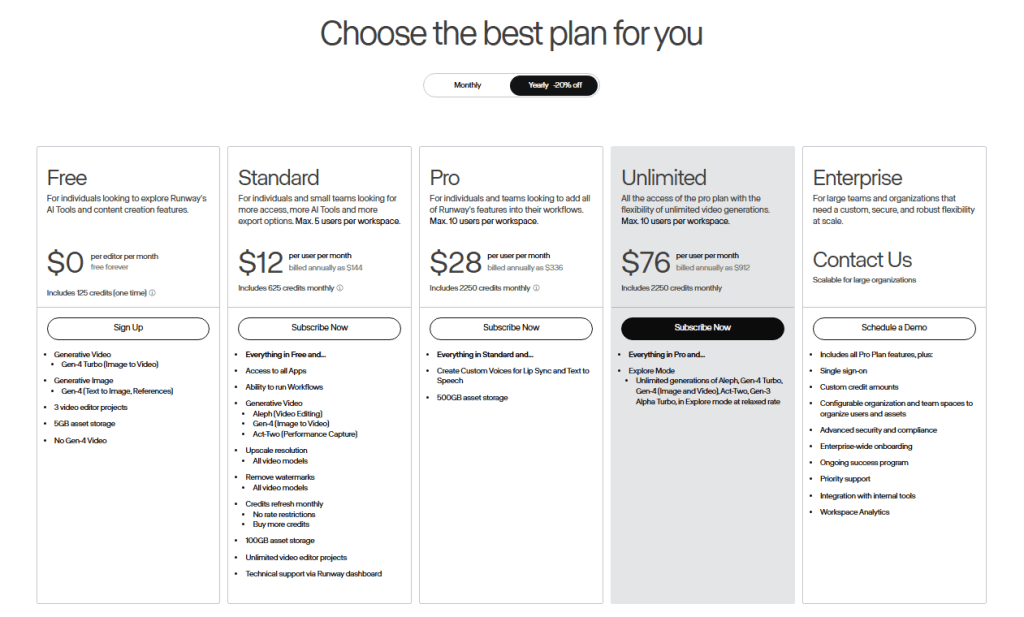

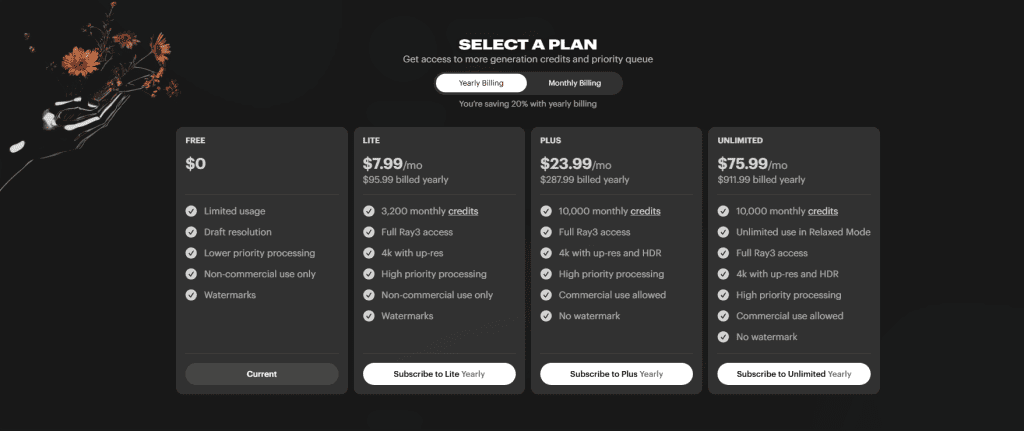

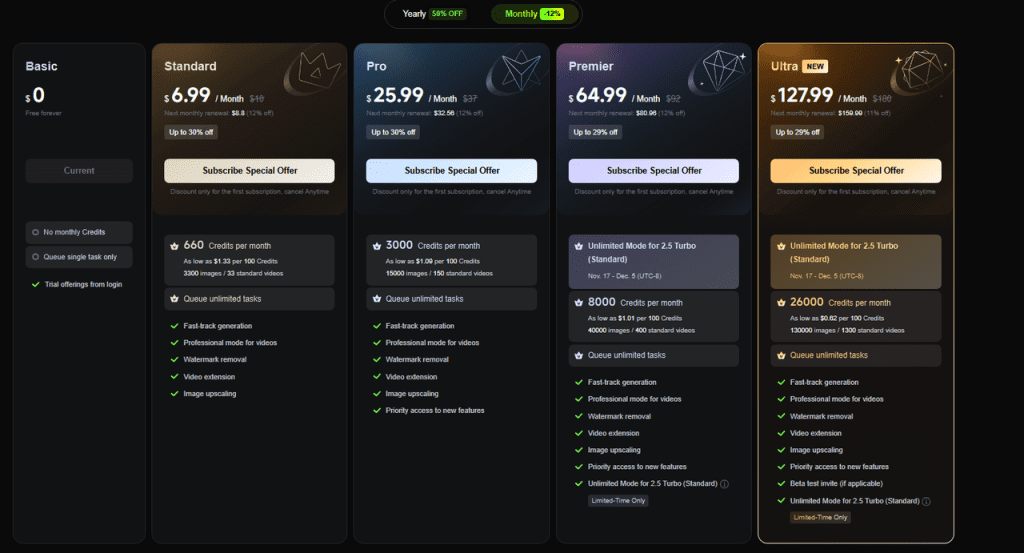

Price vs Output in AI Video Models for Advertising

As of November 2025:

- Runway: Paid tiers with credit-based renders. In my use, budget around a few cents to a couple bucks per 10–15s clip depending on resolution/length. It’s predictable for teams.

- Luma: The free tier is tight; paid tiers unlock faster queues and longer clips. Cost per usable ad draft was similar to Runway’s in my runs.

- Kling: Access and pricing vary by region/invite. Treat it as experimental unless your team already has a pipeline.

- Sora: No public pricing. Great if you have enterprise access; otherwise, plan alternatives.

If you’re optimizing for CPM/CPA, the trade-off is time-to-iteration. A model that gives you three usable variants in 15 minutes is “cheaper,” even if per-render costs are higher. On that metric, Luma and Runway are winning today.
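Here’s a rough way to put numbers on that “cheaper” claim: fold your own time into the cost, then divide by how many renders you’d actually ship. This is a minimal Python sketch; the model names, hourly rate, render prices, and usable rates are hypothetical placeholders, not figures from my tests, so swap in your own.

```python
from dataclasses import dataclass


@dataclass
class ModelRun:
    """Hypothetical per-model inputs; replace with numbers from your own runs."""
    name: str
    cost_per_render: float     # dollars per render (placeholder)
    minutes_per_render: float  # your time per render: queue wait plus review
    usable_rate: float         # fraction of renders you'd actually ship, 0 to 1


def cost_per_usable_variant(run: ModelRun, hourly_rate: float) -> float:
    """Render spend plus the value of your time, spread over the usable renders."""
    if not 0 < run.usable_rate <= 1:
        raise ValueError("usable_rate must be in (0, 1]")
    time_cost = run.minutes_per_render * hourly_rate / 60
    return (run.cost_per_render + time_cost) / run.usable_rate


if __name__ == "__main__":
    # Placeholder numbers only: Model A costs more per render but finishes faster and fails less.
    model_a = ModelRun("Model A", cost_per_render=2.00, minutes_per_render=3, usable_rate=0.5)
    model_b = ModelRun("Model B", cost_per_render=0.50, minutes_per_render=10, usable_rate=0.25)
    for run in (model_a, model_b):
        print(f"{run.name}: ${cost_per_usable_variant(run, hourly_rate=60):.2f} per usable variant")
```

With those placeholder numbers, Model A works out to $10.00 per usable variant and Model B to $42.00, even though B’s renders are four times cheaper. Waiting time and throwaway renders dominate the math.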

Recommendations

- If you need reliable product ads this week, go with Luma Dream Machine or Runway Gen-3. Keep text overlays in post, and avoid tight shots of hands.

- If you’re pitching big ideas, try to get Sora access for concept reels. It sells vision to clients fast.

- If your brand vibe is high-energy cinematic, experiment with Kling for hero shots, then comp the product/logo in afterward.

- For presenter-led ads with synced VO: Use real footage or dedicated avatar tools, then blend AI B-roll.

Workflow tips I actually use:

- Lock your look: Save a short “style seed” video you like and iterate from it; consistency matters more than novelty.

- Keep edits human: Add typography, logos, and callouts in post where you control every pixel.

- Test like a marketer: Cut 3 variants that differ in only one thing (hook, angle, or CTA), then ship the winner.
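If you want to keep yourself honest on the lock-your-look and change-one-thing rules, here’s a minimal Python sketch: copy a base cut once per option, vary a single field, and pick the winner by lowest CPA (spend divided by conversions). The `AdVariant` fields, the example values, and the spend/conversion numbers are all hypothetical; this isn’t tied to any ad platform’s API or export format.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)  # frozen makes variants hashable, so they can be dict keys
class AdVariant:
    """One ad cut. Field names are illustrative, not any tool's schema."""
    hook: str
    angle: str
    cta: str
    style_seed: str  # the locked look; never varied inside a test


def one_variable_variants(base: AdVariant, field: str, options: list[str]) -> list[AdVariant]:
    """Copy the base cut once per option, changing only `field`."""
    if field == "style_seed":
        raise ValueError("Keep the look locked; vary hook, angle, or cta instead.")
    return [replace(base, **{field: value}) for value in options]


def pick_winner(results: dict[AdVariant, tuple[float, int]]) -> AdVariant:
    """Return the variant with the lowest CPA (spend / conversions).

    Variants with zero conversions count as infinitely expensive.
    """
    def cpa(variant: AdVariant) -> float:
        spend, conversions = results[variant]
        return spend / conversions if conversions else float("inf")

    return min(results, key=cpa)


if __name__ == "__main__":
    base = AdVariant(hook="Steam close-up", angle="Morning ritual",
                     cta="Shop now", style_seed="matte-black-mug-v1")
    cuts = one_variable_variants(base, "cta", ["Shop now", "Get yours", "Try it today"])
    # Hypothetical results: (spend in dollars, conversions) per cut.
    results = {cuts[0]: (100.0, 4), cuts[1]: (100.0, 5), cuts[2]: (100.0, 0)}
    print("Winner CTA:", pick_winner(results).cta)  # prints "Winner CTA: Get yours"
```

Here the CTA test keeps the hook, angle, and style seed identical, so whichever cut wins, you know the CTA made the difference.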
