Hey there, it’s Dora. I was refreshing the Artificial Analysis Video Arena on a random Tuesday in early April when a name I’d never seen sat at the top of both the text-to-video and image-to-video rankings.

HappyHorse-1.0. No team page. No press release. GitHub links that said “coming soon.”

The Elo scores were real — built from blind pairwise votes where users pick between two clips without knowing which model made which. Nearly 15,000 votes and it was still holding first place. That’s not a fluke.

One honest framing note: HappyHorse has no public API and no released weights, so I can’t run my own generations. What I can do is scrutinize the Arena vote data directly, flag which numbers are team-reported versus independently verified, and cross-reference multiple sources. That’s the frame for everything below.

The Short Answer First

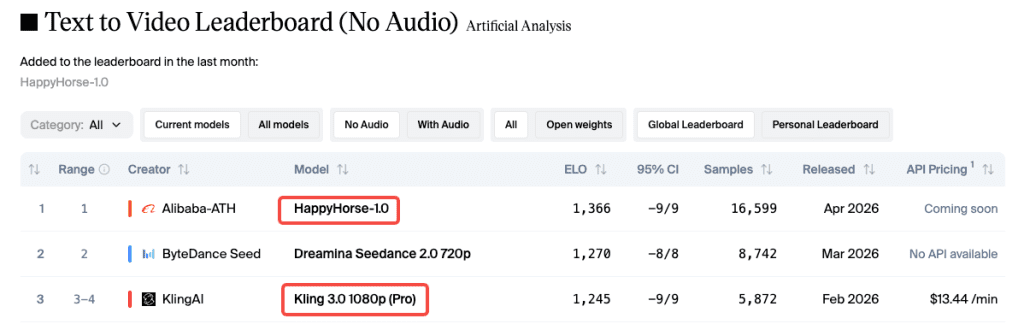

Blind-vote quality: HappyHorse leads clearly in the no-audio tracks, by about 120 Elo points over Kling 3.0 Pro in text-to-video. With audio the margins are much tighter; against Seedance 2.0 it's effectively a tie.

Right now, in a real pipeline: Kling 3.0. Public API, transparent pricing, 600 million videos of production history. HappyHorse has none of that yet.

Meet Both Models

HappyHorse 1.0 (Alibaba)

HappyHorse appeared pseudonymously on April 7, 2026. Alibaba confirmed ownership on April 10: it’s built by the Future Life Lab inside Alibaba’s Taotian Group, led by Zhang Di — former VP of Kuaishou and technical head of Kling AI, who joined Alibaba at the end of 2025.

The architecture is the unusual part. Rather than a standard diffusion pipeline handling video and audio separately, HappyHorse uses a unified single-stream Transformer — 40 layers, no cross-attention — processing text, video, and audio tokens together in one forward pass. Native audiovisual sync, no post-processing layering.
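To make "single-stream, no cross-attention" concrete, here is a toy sketch of the general idea: text, video, and audio tokens are concatenated into one sequence, and ordinary self-attention lets every token attend to every other token directly. This is not HappyHorse's code. The token values, the 2-dimensional embeddings, the single head, and the omission of learned query/key/value projections are all simplifying assumptions for illustration.

```python
import math

def softmax(xs):
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def joint_self_attention(tokens):
    """Scaled dot-product self-attention over one concatenated sequence.

    Simplification: queries, keys, and values are the raw token vectors
    (no learned projections, single head), just to show the data flow.
    """
    d = len(tokens[0][1])
    vectors = [vec for _, vec in tokens]
    outputs, weights = [], []
    for q in vectors:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted mix of ALL tokens, whatever their modality.
        outputs.append([sum(wi * v[j] for wi, v in zip(w, vectors)) for j in range(d)])
    return outputs, weights

# Hypothetical toy tokens: (modality, embedding). Made-up 2-d values.
text = [("text", [1.0, 0.0])]
video = [("video", [0.5, 0.5]), ("video", [0.0, 1.0])]
audio = [("audio", [0.2, 0.8])]

sequence = text + video + audio  # one stream: no separate video/audio branches
outputs, weights = joint_self_attention(sequence)

for (modality, _), row in zip(sequence, weights):
    print(modality, [round(w, 2) for w in row])
```

The point of the design is visible in the weight rows: a video token puts nonzero weight on the audio token in the same pass, so no separate cross-attention module is needed to couple the two.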

What’s team-reported and not yet independently verified: 15 billion parameters; clip length of 5–8 seconds; lip-sync in 7 languages. Alibaba committed to open-sourcing the model — as of late April, GitHub and HuggingFace both show “coming soon.” API rollout was expected around April 30 via Alibaba Cloud. Treat that as an estimate, not a confirmed date.

Kling 3.0 (Kuaishou)

Kling has been in production since June 2024. Per the official Kuaishou IR announcement, Kling 3.0 launched on February 5, 2026, and the Kling platform serves over 60 million creators and has produced more than 600 million videos. Those are numbers you can actually verify.

Built on the Multi-modal Visual Language (MVL) framework: native 1080p (4K in the Omni tier), native audio in five languages, and a multi-shot storyboard tool where you specify duration, shot size, perspective, and camera movement per shot. API is live today via fal.ai.
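To show what per-shot storyboard control means as data, here is a minimal sketch of a storyboard plan with the four per-shot fields listed above. The schema, field names, and example values are hypothetical, not Kling's actual API parameters, and applying the 15-second clip ceiling to the whole storyboard is an assumption.

```python
from dataclasses import dataclass

# Hypothetical schema: field names and values are illustrative,
# not Kling's real API parameters.
MAX_TOTAL_SECONDS = 15  # Kling 3.0's stated maximum clip length (assumed to cap the whole plan)

@dataclass
class Shot:
    duration_s: float
    shot_size: str        # e.g. "close-up", "medium", "wide"
    perspective: str      # e.g. "eye-level", "low-angle"
    camera_movement: str  # e.g. "static", "dolly-in", "pan-left"

def total_duration(shots):
    return sum(s.duration_s for s in shots)

def validate_storyboard(shots, max_total=MAX_TOTAL_SECONDS):
    """Return the total duration, or raise ValueError if the plan can't fit one generation."""
    if not shots:
        raise ValueError("storyboard needs at least one shot")
    for i, s in enumerate(shots):
        if s.duration_s <= 0:
            raise ValueError(f"shot {i} has non-positive duration")
    total = total_duration(shots)
    if total > max_total:
        raise ValueError(f"total {total}s exceeds {max_total}s limit")
    return total

storyboard = [
    Shot(4, "wide", "eye-level", "static"),
    Shot(3, "medium", "low-angle", "dolly-in"),
    Shot(5, "close-up", "eye-level", "pan-left"),
]
print(validate_storyboard(storyboard))  # 12
```

Checking the total up front is cheaper than discovering a rejected or truncated generation after the credits are spent.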

My own testing: ~60 generations across portrait, product, and action scenes over six weeks. Solid consistency for 3–8 second clips; reference-image input beats text-only for consistent faces.

Leaderboard Snapshot — Current Elo

The Arena runs blind pairwise voting: users compare two clips generated from the same prompt, with no model labels. Elo scores update continuously, using the same rating system as chess. A 20–30 point gap translates to roughly a 53–54% head-to-head win rate.
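The 20–30 point figure can be checked with the standard Elo expected-score formula. This sketch assumes the Arena uses the usual chess convention, where a 400-point gap corresponds to 10:1 odds; if the Arena scales its ratings differently, the percentages shift.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Standard Elo expected score for the higher-rated side.

    Assumes the chess convention: a 400-point gap means 10:1 odds.
    """
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400))

for gap in (20, 30):
    print(f"{gap}-point gap -> {expected_win_rate(gap):.1%}")
```

This prints 52.9% and 54.3%, so even a gap that looks large on a leaderboard means the leader wins only slightly more than half of head-to-head votes.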

One caveat: Seedance 2.0 has 7,500+ vote samples in T2V, while HappyHorse’s per-category sample count isn’t publicly broken out. Newer entries are more volatile early on, but holding first place through nearly 15,000 votes is meaningful.
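Why do vote counts matter? A rough way to see it is the margin of error on an observed win rate. This sketch is a simplification: it treats each vote as an independent coin flip and uses the normal approximation to the binomial, which is not how the Arena actually computes its ratings or intervals. The vote counts are illustrative, not real per-matchup totals.

```python
import math

def win_rate_margin(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error on an observed win rate p over n votes.

    Normal approximation to a binomial; assumes independent votes.
    """
    return z * math.sqrt(p * (1 - p) / n)

# Illustrative vote counts only.
for n in (500, 2_000, 7_500, 15_000):
    print(f"n={n:>6}: 50% +/- {win_rate_margin(0.5, n):.1%}")
```

The margin shrinks with the square root of the vote count, which is why early ratings for new entries swing more than established ones.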

T2V / I2V no-audio rankings

| Model | T2V (no audio) | I2V (no audio) |
|---|---|---|
| HappyHorse-1.0 | 1,366 Elo | 1,399 Elo |
| Dreamina Seedance 2.0 | 1,270 Elo | 1,346 Elo |
| Kling 3.0 Pro 1080p | 1,245 Elo | Outside top 5 |

The roughly 120-point T2V gap between HappyHorse and Kling 3.0 Pro is a genuine preference signal across diverse prompts, not the product of cherry-picked examples.

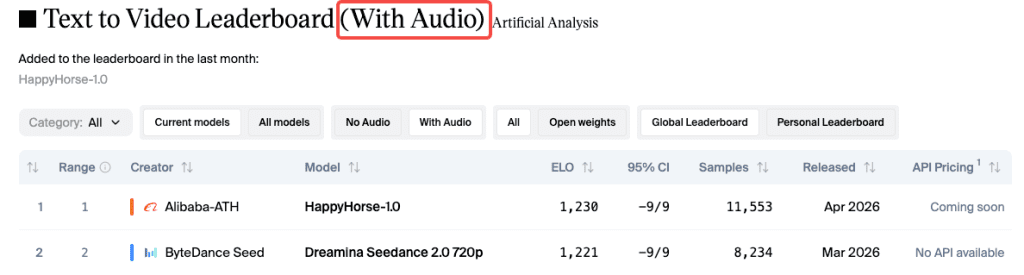

With-audio categories

T2V with audio: HappyHorse leads at 1,230 vs. Seedance at 1,221 — a 9-point gap within statistical noise. Kling 3.0 Omni sits at 1,101. I2V with audio: Seedance 2.0 leads at 1,182, HappyHorse second at 1,167.
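To put the leaderboard gaps on a more intuitive scale, this sketch converts each one into an implied head-to-head win rate. It assumes the standard 400-point Elo logistic scale; the ratings are the figures reported in this post.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Standard Elo expected score for the higher-rated side (400-point scale)."""
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400))

# (category, leader, leader Elo, runner-up, runner-up Elo), as reported in this post
matchups = [
    ("T2V no audio", "HappyHorse-1.0", 1366, "Seedance 2.0", 1270),
    ("T2V no audio", "HappyHorse-1.0", 1366, "Kling 3.0 Pro", 1245),
    ("I2V no audio", "HappyHorse-1.0", 1399, "Seedance 2.0", 1346),
    ("T2V audio", "HappyHorse-1.0", 1230, "Seedance 2.0", 1221),
    ("T2V audio", "HappyHorse-1.0", 1230, "Kling 3.0 Omni", 1101),
    ("I2V audio", "Seedance 2.0", 1182, "HappyHorse-1.0", 1167),
]

for category, a, elo_a, b, elo_b in matchups:
    gap = elo_a - elo_b
    print(f"{category}: {a} vs {b}: {gap:+d} Elo -> {expected_win_rate(gap):.1%}")
```

A 9-point gap works out to about 51% for the leader, which is why it reads as a tie, while the 121-point silent T2V gap over Kling 3.0 Pro corresponds to roughly two wins in three.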

For audio-critical work — talking head, multilingual explainer — neither model has a decisive edge.

What HappyHorse Does Better

Motion & prompt adherence on layered scenes

This is what the vote data is pointing at. Community analysis of Arena outputs consistently flags HappyHorse’s strength with facial expression, skin texture, and natural body motion. The single-stream architecture — processing all modalities together — appears to produce better spatial coherence when a human is the central subject. Layered prompts with simultaneous foreground and background action hold together more cohesively than in most separate-pipeline approaches.

Speed claim (~38s on H100)

The 38-second 1080p figure on a single H100 is team-reported and unverified; multiple third-party sites flag this explicitly. If accurate, it would be 30–40% faster than Seedance 2.0 on equivalent hardware. The stated mechanism is DMD-2 distillation: 8 denoising steps instead of 50+, with no classifier-free guidance (CFG). That's architecturally plausible, but it needs a third-party benchmark before I'd treat it as a workflow input.
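A back-of-envelope count shows why fewer steps plus no CFG matters. CFG evaluates the denoiser twice per step (conditional and unconditional), so dropping it halves the per-step cost. This sketch only counts forward passes under assumed step counts; it is not a wall-clock prediction, and Seedance 2.0 is not necessarily a 50-step CFG sampler.

```python
def forward_passes(steps: int, uses_cfg: bool) -> int:
    """Count denoiser forward passes for one sample.

    Classifier-free guidance needs a conditional and an unconditional
    evaluation per step; batching them together still doubles compute.
    """
    return steps * (2 if uses_cfg else 1)

baseline = forward_passes(50, uses_cfg=True)   # assumed undistilled baseline
distilled = forward_passes(8, uses_cfg=False)  # the claimed HappyHorse setup
print(baseline, distilled, baseline / distilled)  # 100 8 12.5
```

A 12.5x cut in sampler passes does not translate one-to-one into end-to-end speed, since text encoding, the VAE decode, and audio generation also take time. That gap is part of why the 38-second figure needs independent measurement.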

Where Kling 3.0 Still Leads

Native 1080p production track record

Kling has actually shipped: 600 million videos across the platform means documented failure modes, community prompting strategies, and answers when something breaks. The physics improvements in 3.0 are real in my testing; cloth dynamics, hair, and water surfaces all track more believably than in Kling 2.x.

Accessibility — public API available today

You can integrate Kling 3.0 via API on fal.ai right now: 1080p output, native audio, multi-shot storyboard, documented pricing. The April 30 HappyHorse API date was an estimate, not a confirmed launch. Production pipelines need certainty.

Longer clip support

Kling 3.0 supports clips up to 15 seconds. HappyHorse’s documented clip length is 5–8 seconds. Hard constraint, not a quality judgment — if your workflow needs continuous shots longer than 8 seconds, Kling 3.0 is your only option between these two right now.

Ironic Context — Kling Architect Now Leads HappyHorse

Worth stating plainly: Zhang Di built Kling at Kuaishou, moved to Alibaba at the end of 2025, and within a few months built the model that now outranks it on every silent-video leaderboard. The technical lineage is visible — both models prioritize human motion fidelity and push toward native multimodal generation over pipeline approaches. Zhang Di had a thesis; he’s now stress-testing it against his own previous work.

In a Real Production Workflow — When to Pick Which

Time-sensitive commercial deliverable → Kling

Deadline, client, invoice. Six weeks of personal API testing confirms Kling 3.0 is stable and the documentation holds up when something breaks. It’s the only choice between these two that you can actually build against today.

Pre-viz / concept exploration → HappyHorse

For pitching creative directions or testing how a human performance reads before production commits, HappyHorse’s blind-vote quality lead in portrait and motion scenarios suggests stronger first-pass material. Worth setting up access the moment weights or the API drops.

Multi-model orchestration → use both

HappyHorse for human-centric close work and expression-heavy scenes; Kling 3.0 for longer narrative sequences and multi-shot storyboarding. Assemble in post. These aren’t really competing tools — they’re complementary ones with meaningfully different strengths.
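One way to operationalize that split is a small routing helper per shot. This is a hypothetical sketch: the thresholds come from this post (8 seconds, team-reported, for HappyHorse; 15 seconds for Kling 3.0), the human close-up flag is a judgment you set yourself, and the returned labels are plain strings, not real API model identifiers.

```python
from dataclasses import dataclass

HAPPYHORSE_MAX_S = 8  # team-reported clip length ceiling
KLING_MAX_S = 15      # Kling 3.0's stated maximum

@dataclass
class ShotRequest:
    name: str
    duration_s: float
    human_closeup: bool      # expression-heavy, human-centric framing
    multi_shot: bool = False # needs storyboard control in one generation

def route(shot: ShotRequest, happyhorse_available: bool) -> str:
    """Pick a model label for one shot (hypothetical labels)."""
    if shot.duration_s > KLING_MAX_S:
        return "split shot"  # too long for either model in one generation
    if shot.multi_shot or shot.duration_s > HAPPYHORSE_MAX_S:
        return "kling-3.0"
    if shot.human_closeup and happyhorse_available:
        return "happyhorse-1.0"
    return "kling-3.0"  # default to the model you can actually call today

plan = [
    ShotRequest("reaction close-up", 5, human_closeup=True),
    ShotRequest("street tracking shot", 12, human_closeup=False),
    ShotRequest("three-beat product reveal", 10, False, multi_shot=True),
]
for shot in plan:
    print(shot.name, "->", route(shot, happyhorse_available=False))
```

With `happyhorse_available=False`, everything falls back to Kling 3.0, which matches today's access situation; flipping the flag is the only change needed once an API exists.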

Access Today — Where You Can Run Each

HappyHorse 1.0

- Artificial Analysis Video Arena: ✅ (vote only — no direct generation)

- Public API: ❌ (expected ~April 30, unconfirmed)

- Open-source weights: ❌ (committed but not yet released)

- fal.ai: “coming soon”

Kling 3.0

- klingai.com: ✅ — free tier (66 daily credits, 720p, watermarked)

- fal.ai API: ✅

- Vercel AI Gateway: ✅

Decision Guide

Pick HappyHorse if…

You’re doing pre-viz or human-centric exploration, output quality per clip is the priority, or you want early positioning when weights go public. The planned open-source release would also enable self-hosted deployment.

Pick Kling 3.0 if…

You need to ship now. You need clips over 8 seconds. You need multi-shot storyboard control within a single generation, or API reliability with documented SLAs. For enterprise workflows with data handling requirements, Kling’s production track record is the only verifiable reference point between these two.

Conclusion

The leaderboard gap is real — 15,000 blind votes, 120 Elo points over Kling 3.0 Pro in T2V. Users consistently prefer HappyHorse outputs when they don’t know which model made them.

But “best in a blind test” and “best for your workflow today” are still different questions. Kling 3.0 is the one you can build on. HappyHorse is the one to position for early.

Zhang Di built Kling, left, and built something that outranks it. That’s a consistent thesis, not a fluke. When the API goes live, it’s worth a serious evaluation — start thinking about where it fits before that moment arrives.

FAQ

Q: Is HappyHorse-1.0 actually better than Kling 3.0? In blind-vote Arena tests, HappyHorse 1.0 currently ranks higher than Kling 3.0 in several categories, especially text-to-video without audio. However, Kling 3.0 is far more proven in real production workflows, so “better” depends on whether you prioritize benchmark quality or deployment readiness.

Q: Can I use HappyHorse-1.0 right now? Not yet. As of late April, HappyHorse 1.0 has no public API, no released open-source weights, and no official release platform. It can only be evaluated through leaderboard-style systems like the Artificial Analysis Video Arena.

Q: Which model is better for commercial use today? Kling 3.0 is the safer choice for commercial use because it has a stable public API, published pricing, and a production track record. HappyHorse is an emerging model that may become relevant once public access and tooling are released.

Q: Are the HappyHorse benchmark results reliable? They are directionally useful but should be interpreted carefully. The Arena uses blind pairwise voting, which reduces bias, but HappyHorse has a smaller public evaluation footprint and some technical details are still team-reported rather than independently verified.
