Hey you guys! I’m Dora — and last week I did something a little obsessive even by my standards. I had a short product clip to make for a client. Nothing fancy: 5 seconds, clean motion, portrait format for Reels. I had both LTX 2.3 and WAN 2.2 open on my machine at the same time. And instead of just picking one and getting the job done, I ended up running the same prompt through both models for three hours straight, taking notes like a complete nerd.

This article is the result of that session. If you’re trying to decide between these two open-source models right now, here’s what I actually found.

Quick Verdict (one-line answer for each use case)

Pick LTX 2.3 if: You shoot portrait content (TikTok, Reels), want to run fully local, need fast iteration, or care about audio-video sync in one pipeline.

Pick WAN 2.2 if: Motion realism is your top priority, you want proven open-weight stability, and you don’t mind a heavier ComfyUI setup.

Core Specs Comparison Table (resolution, fps, audio, VRAM, speed)

| Spec | LTX 2.3 | WAN 2.2 |
|---|---|---|
| Parameters | 22B | 14B (standard) / 1.3B (lite) |
| Max resolution | 4K (native) / 1080p portrait | 720p local; 1080p via API |
| FPS options | 24 / 25 / 48 / 50 | 16 / 24 |
| Max clip length | ~12s (quality); 20s at 540p | ~10s |
| Native audio | ✅ Yes (unified pipeline) | ❌ No |
| VRAM minimum | 8GB (community fork); 32GB official desktop | 24GB (14B); 8GB (1.3B) |
| Open weights | ✅ Apache 2.0 | ✅ Apache 2.0 |
| Speed vs WAN 2.2 | 18–19x faster (official claim, unverified by independent testing) | Baseline |

Important context on WAN versions: WAN 2.2 is the latest version with publicly downloadable model weights as of March 2026. Alibaba has since released WAN 2.5-Preview and WAN 2.6, but neither has published model weights through the official open-source channels. For true self-hosted, local deployment, WAN 2.2 is still the reference. If you want 2.6’s features (multi-shot, lip-sync), you’re going through a cloud API, which is a different workflow entirely.

Quality Comparison

Motion Realism

This is where WAN 2.2 genuinely earns its reputation. I prompted both models with “a person walking through a crowded Tokyo street at night, camera following from behind.” WAN 2.2’s result had that slight, almost physical weight to it: the coat movement, the subtle crowd drift. It felt observed, not generated, which lines up with what VideoWeb KI shows.

LTX 2.3 wasn’t bad, but its motion had a smoother, slightly more “animated” quality. Not uncanny, just different. WAN’s motion handling has been refined across versions to feel more physically plausible, and it shows.

If your content lives or dies on organic human motion — testimonial videos, lifestyle clips, anything where “it has to look real” — WAN 2.2 is still ahead.

Face and Object Consistency

Closer call here. LTX 2.3 introduces a new VAE architecture aimed at sharper fine details, textures, and facial features, and the improvement in texture and facial sharpness was visible in my clips. The update also brings better prompt understanding for more accurate scene composition.

WAN 2.2’s consistency is solid for short clips, but I noticed some drift on face identity past the 6-second mark. LTX held steadier on longer outputs. Round goes to LTX 2.3.

Prompt Adherence

LTX 2.3 won this one. The model’s 4x larger text connector means complex prompts — multiple subjects, spatial relationships, stylistic instructions — now resolve more accurately. I tested a multi-subject prompt (“a dog and a child running toward the camera, golden hour, shallow depth of field”) and LTX 2.3 nailed it on the second try. WAN 2.2 dropped one subject entirely on three consecutive attempts.

Speed and VRAM

Here’s where things get interesting, and where I need to be honest about the numbers. The 18–19x figure is Lightricks’ official claim, and it hasn’t been verified by independent testing. What I can say from my own session is that LTX 2.3 felt noticeably faster for iteration. A 5-second clip at 720p took me roughly 4–5 minutes on WAN 2.2 and under a minute on LTX 2.3. I couldn’t tell you whether that’s 18x or 8x. The speed difference is real, though, and it matters when you’re running 20 test generations to find the one keeper.
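If you want your own number instead of trusting either figure, time the same prompt on both setups. Here’s a minimal Python sketch of that. It assumes `generate` is a stand-in you write yourself for whatever renders one clip on your machine. Nothing here is part of the LTX or WAN tooling.

```python
import statistics
import time
from typing import Callable


def median_seconds(generate: Callable[[], object], runs: int = 5) -> float:
    """Run `generate` several times and return the median wall-clock time in seconds.

    `generate` is a hypothetical placeholder for whatever renders one clip
    (e.g. a function you write that submits a workflow and waits for it).
    """
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        generate()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)


def speedup(baseline_s: float, candidate_s: float) -> float:
    """How many times faster the candidate is than the baseline."""
    if candidate_s <= 0:
        raise ValueError("candidate time must be positive")
    return baseline_s / candidate_s


# My rough session numbers: WAN 2.2 took 4-5 minutes, LTX 2.3 under 1 minute.
# The fastest WAN run (240 s) against the 60 s LTX ceiling gives a floor, not an exact ratio.
print(f"LTX 2.3 was at least {speedup(4 * 60, 60):.1f}x faster")
```

My rough timings only prove a floor of about 4x. That fits my “8x or 18x, I can’t tell” shrug. Running the same prompt a few times per model and comparing medians is how you’d narrow it down.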

On VRAM: LTX 2.3 (22B parameters) needs at least 32GB of VRAM for the official desktop app. With FP8 or GGUF quantization it can run on smaller cards, and the community has already pushed it down to 8GB. On the WAN side, the 14B model produces the better motion quality and needs around 24GB, which means a consumer RTX 4090 (24GB) or RTX 5090 (32GB). The 1.3B lite model gets by on about 8GB.
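A quick back-of-the-envelope sketch shows why quantization matters so much here. Weight memory is roughly parameter count × bits per weight ÷ 8. This counts the weights only. Activations, the text encoder and the VAE all add more on top, and 4-bit GGUF files usually spend slightly more than 4 bits per weight. Treat these numbers as floors, not requirements.

```python
def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return round(params * bits_per_weight / 8 / 1e9, 1)


# Parameter counts from the spec table; precisions are common choices, not an exhaustive list.
MODELS = {"LTX 2.3": 22e9, "WAN 2.2 14B": 14e9, "WAN 2.2 1.3B": 1.3e9}
PRECISIONS = {"BF16": 16, "FP8": 8, "4-bit": 4}

for name, params in MODELS.items():
    sizes = ", ".join(
        f"{label} ~{weight_gb(params, bits)} GB" for label, bits in PRECISIONS.items()
    )
    print(f"{name}: {sizes}")
```

For LTX 2.3 that works out to about 44GB at BF16, 22GB at FP8, and 11GB at 4 bits. Even 11GB is above 8GB. My guess is that the 8GB community builds also offload part of the model to system RAM, but I haven’t confirmed how that fork does it.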

Audio Capability

LTX 2.3 wins, no contest. It has native audio generation built into the same pass as the video — speech, ambient sound, SFX. The synchronized audio is good for ambient sounds, environmental effects, and general soundscapes, though it’s not yet competitive with dedicated music generation or voice synthesis tools.

WAN 2.2 has no native audio, so you add sound in post, every time. For a lot of workflows, that’s totally fine. But if you’re building short-form social clips or product videos where sound-to-motion sync matters, LTX 2.3 saves a step.
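If you do add sound afterward, the mechanical part is one ffmpeg call. Here’s a minimal Python sketch that builds that command. It assumes ffmpeg is installed and on your PATH, and the file names are placeholders. The flags are standard ffmpeg options: stream mapping, copying the video stream, AAC audio, and stopping at the shorter input.

```python
import shlex
from pathlib import Path


def mux_command(video: Path, audio: Path, output: Path) -> list[str]:
    """Build an ffmpeg command that attaches a separate audio track to a silent clip."""
    return [
        "ffmpeg",
        "-y",                # overwrite the output file if it exists
        "-i", str(video),    # input 0: the silent WAN 2.2 render
        "-i", str(audio),    # input 1: the sound you recorded, generated, or licensed
        "-map", "0:v:0",     # take the video stream from input 0
        "-map", "1:a:0",     # take the audio stream from input 1
        "-c:v", "copy",      # don't re-encode the video
        "-c:a", "aac",       # encode the audio as AAC for an MP4 container
        "-shortest",         # stop at the end of the shorter stream
        str(output),
    ]


# Placeholder file names for illustration.
cmd = mux_command(Path("wan_clip.mp4"), Path("sfx.wav"), Path("wan_clip_with_audio.mp4"))
print(shlex.join(cmd))
# To actually run it: subprocess.run(cmd, check=True)
```

Copying the video stream keeps the render untouched and makes the mux near-instant. Getting the sound to actually match the motion is still the part LTX 2.3 does for you.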

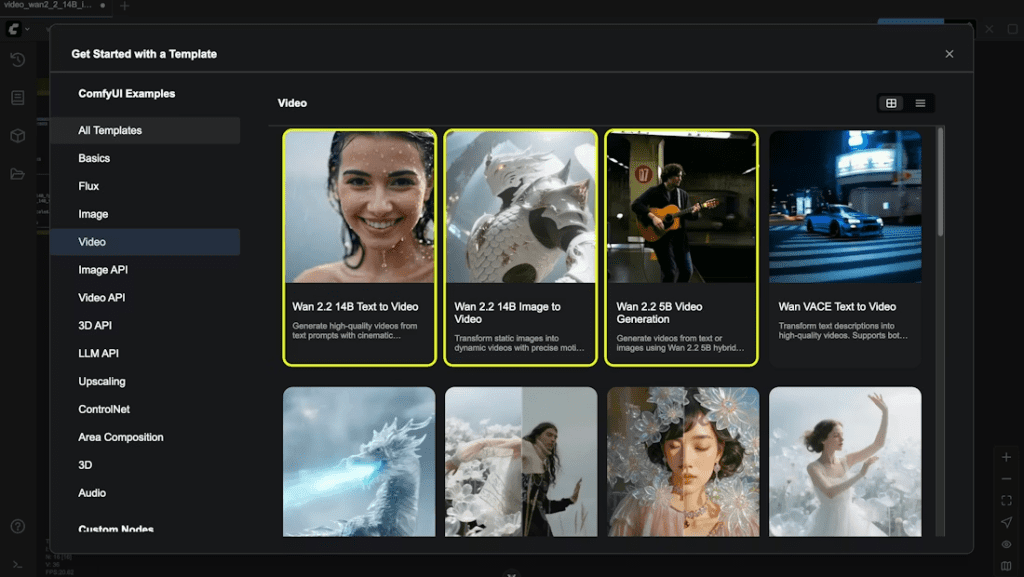

ComfyUI Setup Effort

Both models work in ComfyUI, but WAN 2.2 has had longer to mature there. The node ecosystem is more documented, the community workflows are more tested, and there are fewer surprises.

LTX 2.3 is newer, so the custom nodes are still catching up. NVIDIA published an official ComfyUI guide specifically for LTX 2.3, and it’s worth bookmarking. It covers quantization, frame count rules (the Nx8+1 thing will bite you if you skip it), and CFG settings. If you’re starting fresh with ComfyUI, both have learning curves. If you already have a WAN 2.1 setup, moving to 2.2 is a weight swap with no infrastructure changes required.
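The Nx8+1 rule means the frame count has to be a multiple of 8 plus one (97, 121, and so on). So 5 seconds at 24 fps (120 frames) isn’t valid as-is. Here’s a minimal Python helper that snaps a target duration to the nearest valid count. It assumes the rule is exactly 8n + 1, as the guide’s shorthand suggests. The function name is mine, not a ComfyUI node, so check against the node’s own validation before relying on it.

```python
def ltx_frame_count(seconds: float, fps: int) -> int:
    """Snap a target duration to the nearest frame count of the form 8 * n + 1.

    Hypothetical helper: it encodes only the Nx8+1 rule, nothing else about LTX 2.3.
    """
    target = seconds * fps
    # Solve 8 * n + 1 ~= target for n, rounding to the nearest whole n (never negative).
    n = max(0, round((target - 1) / 8))
    return 8 * n + 1


for fps in (24, 25, 48, 50):
    frames = ltx_frame_count(5, fps)
    print(f"5 s at {fps} fps -> {frames} frames ({frames / fps:.2f} s)")
```

At 24 fps this lands on 121 frames, a hair over 5 seconds. That’s usually the right trade, compared with fighting the sampler over an invalid count.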

Best Use Cases: When to Pick LTX 2.3

- Short-form social content — Native 9:16 portrait output, trained on portrait data (not cropped from landscape). This is a bigger deal than it sounds for Reels/TikTok quality.

- Fast iteration cycles — Speed wins when you need to test 15 versions of a product clip before a deadline.

- Audio-driven content — The unified audio-video pipeline is genuinely useful for creators who want sync without a DAW session afterward.

- Privacy-sensitive work — Runs fully local. Nothing leaves your machine, which matters for client work and proprietary footage.

- Creators on a budget — Open weights, Apache 2.0, free for companies under $10M revenue.

Best Use Cases: When to Pick WAN 2.2

- Motion realism is non-negotiable — Lifestyle content, human-centric clips, anything that needs to feel physically grounded.

- Established ComfyUI pipelines — If you’ve already built workflows around WAN, staying on 2.2 is the path of least resistance.

- Lower-parameter efficiency — The 1.3B model runs on modest hardware. LTX 2.3 at 22B is heavier even with quantization.

- Stable, proven output — WAN 2.2 is battle-tested. LTX 2.3 is excellent, but community reports still turn up edge cases.

Decision Checklist

Ask yourself these before choosing:

- Do you need portrait/vertical output? → LTX 2.3

- Is motion realism your #1 priority? → WAN 2.2

- Do you need audio in the same generation pass? → LTX 2.3

- Are you on a GPU with under 16GB VRAM? → WAN 2.2 (1.3B variant)

- Do you need to iterate fast on many drafts? → LTX 2.3

- Do you already have a WAN ComfyUI workflow? → Stay on WAN 2.2 unless portrait/audio matters

- Is your content human-focused (faces, movement)? → WAN 2.2 for short clips; LTX 2.3 for anything over 6 seconds

- Is privacy/local-only a hard requirement? → LTX 2.3

FAQ

Q: What is the main difference between LTX 2.3 and WAN 2.2?

The biggest difference lies in their priorities. LTX 2.3 focuses on speed, prompt accuracy, portrait output, and built-in audio generation, making it ideal for fast-paced content creation. WAN 2.2, on the other hand, excels in motion realism, producing more physically believable and natural movement, especially in human-centric scenes.

Q: Which model is better for short-form social media content like TikTok or Reels?

LTX 2.3 is the better choice for short-form social content. It supports native 9:16 portrait output (instead of cropping), runs faster for rapid iteration, and includes synchronized audio generation—saving time in editing workflows.

Q: Does either model support audio generation?

Yes, but only LTX 2.3 has native audio generation built directly into the video creation process. It can generate ambient sound, speech, and effects in sync with the visuals. WAN 2.2 does not support audio, so sound must be added separately in post-production.
