Hey, this is Dora. Picture this: it was 1 a.m., and I was glued to my screen, eyes locked on the Meta Ads dashboard. A video ad I had spent three straight days perfecting was stuck at just 0.8% CTR. I kept replaying the first three seconds, knowing deep down the hook wasn’t working, but I couldn’t figure out what was missing.

Frustrated, I finally caved and did a real test. I took that same ad creative and ran it through several AI creative analysis tools, one after another.

Some tools instantly spotted the exact problem I suspected. Others gave vague, almost useless feedback. And a few genuinely surprised me with insights I hadn’t considered.

Here’s the honest breakdown of what I found.

What “AI Creative Analysis” Actually Means

This term gets thrown around a lot, and tools use it to mean very different things. Before I get into rankings, let me be clear about what we’re actually talking about.

Performance Prediction vs Post-Launch Analysis vs Creative Scoring

These are three different things, and most tools only do one of them well:

- Performance prediction = the tool scores your ad before you launch it, using models trained on millions of historical ads

- Post-launch analysis = the tool pulls your live campaign data and tells you why your CTR or completion rate dropped

- Creative scoring = a structured, rubric-based rating of your ad’s visual and copy elements (hook strength, CTA clarity, text density, etc.)

Some tools advertise all three but really only nail one. I noticed this fast.

What Signals These Tools Use (Hooks, Pacing, Emotion, Text Density)

The better tools analyze signals like hook retention (do people stick past second 3?), emotional arc via facial-expression modeling, visual attention heatmaps based on eye-tracking research, on-screen text density, pacing (cuts per second), and audio clarity scores.

The weaker ones basically just run your ad image through a sentiment model and call it “AI analysis.” You’ll recognize them fast: their feedback sounds like a horoscope.
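Of these signals, pacing is the easiest to reason about by hand. Here’s a minimal sketch, assuming you already have the timestamps where each new shot starts (from your editor or a shot-detection step, not shown). The `cuts_per_second` helper and the sample timestamps are hypothetical, not any tool’s API or real data.

```python
from typing import Sequence


def cuts_per_second(cut_times: Sequence[float], start: float, end: float) -> float:
    """Scene cuts per second within the window (start, end], in seconds.

    cut_times: timestamps where a new shot begins (hypothetical input,
    e.g. exported from an editor or a shot-detection step).
    """
    if end <= start:
        raise ValueError("end must be greater than start")
    # Count only the cuts that fall inside the window.
    cuts = sum(1 for t in cut_times if start < t <= end)
    return cuts / (end - start)


# Hypothetical cut timestamps for a 15-second ad (illustrative only).
cut_times = [0.8, 1.6, 2.3, 3.0, 3.6, 7.5, 11.0]

print(f"whole ad:  {cuts_per_second(cut_times, 0, 15):.2f} cuts/s")
print(f"first 3 s: {cuts_per_second(cut_times, 0, 3):.2f} cuts/s")
```

Comparing the first three seconds against the whole ad shows how front-loaded your editing is.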

How We Evaluated These Tools

I tested eight tools using the same three ad creatives: a 15-second video ad, a static image ad, and a carousel. I ran each through free tiers where available, then paid tiers for the finalists.

Depth of Analysis, Actionability of Insights, Platform Integrations

My scoring criteria:

- Depth: Does it go beyond surface-level scores into why something works or doesn’t?

- Actionability: Can I take the feedback and immediately improve the creative?

- Platform integrations: Does it connect natively to Meta Ads, Google Ads, or TikTok?

Best AI Ad Tools with Creative Analysis (Ranked)

Tool 1 — Best for Pre-Launch Creative Scoring

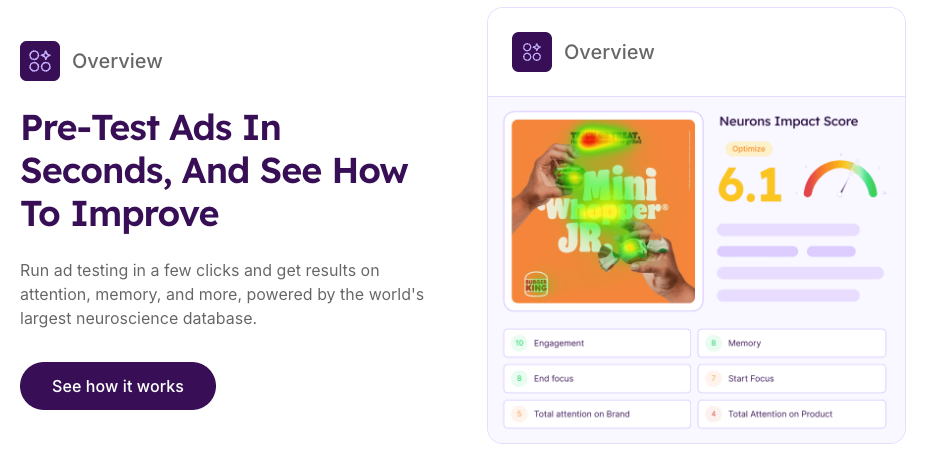

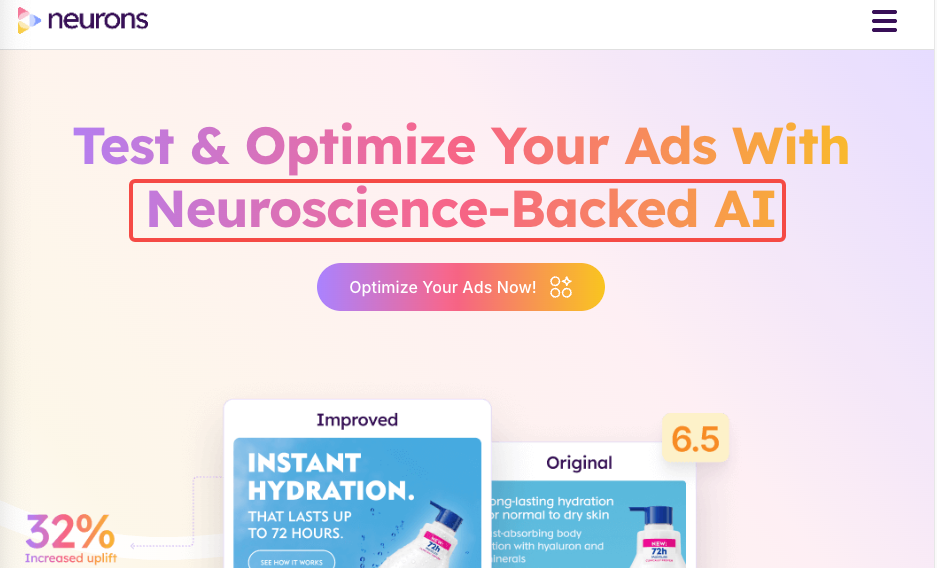

Neurons was the one that genuinely shocked me. You upload your static or video creative, and it runs it through a predictive model grounded in neuroscience and cognitive-load research: actual academic foundations, not just marketing fluff. The attention heatmap it generated for my static ad pinpointed exactly where a viewer’s eye would land first, and it wasn’t my CTA. That explained a lot.

What I loved: the “Predict” score breaks down into Focus, Clarity, and Cognitive Demand — three numbers I could actually act on. What I didn’t love: no native Meta Ads integration, so post-launch analysis means manual export. Pre-launch though? It’s the strongest I tested.

Best for: Brands that run heavy static or display creative and want to validate before spending budget.

Pricing: Starts at ~$499/month for teams. Free demo available.

Tool 2 — Best for Post-Launch Performance Breakdown

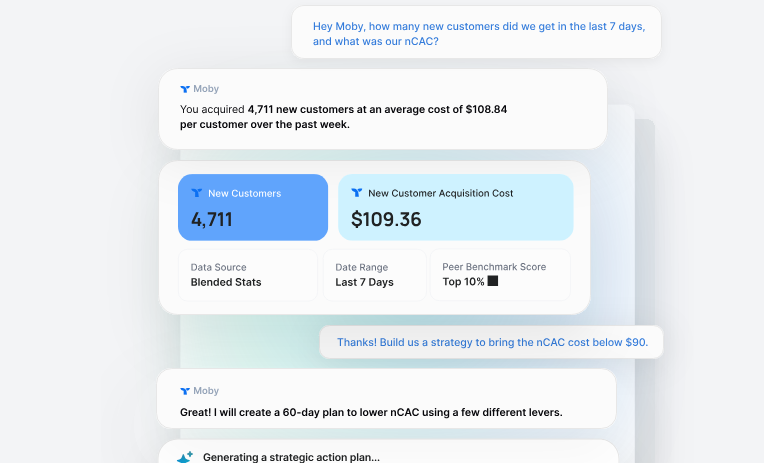

If Neurons is about prediction, Triple Whale is about diagnosis. Once you connect your Meta Ads account, the Creative Cockpit pulls performance data, clusters your ads by creative type, and surfaces which hooks, formats, and CTAs are statistically outperforming others in your own account. This is the part other tools miss: they benchmark you against generic industry data, not your own audience’s behavior.

I ran my 15-second video through it after three days live. It flagged that my “question hook” format (opening with a rhetorical question) was consistently underperforming my “demo hook” format by 34% on thumb-stop rate. That’s an insight I could act on right away.
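The arithmetic behind a claim like “34% lower” is easy to reproduce on your own exports. This is a minimal sketch, not how Triple Whale computes it: it assumes you have a thumb-stop rate per ad (often defined as 3-second views divided by impressions) and a hook-format label you assigned yourself. The numbers are made up to illustrate the math, and `mean_rate_by_format` and `relative_gap` are hypothetical helpers.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-ad results: (hook_format, thumb_stop_rate).
# Illustrative numbers only, chosen to show the arithmetic.
ads = [
    ("question", 0.21), ("question", 0.19), ("question", 0.23),
    ("demo", 0.31), ("demo", 0.33), ("demo", 0.32),
]


def mean_rate_by_format(rows):
    """Average thumb-stop rate for each hook format."""
    groups = defaultdict(list)
    for fmt, rate in rows:
        groups[fmt].append(rate)
    return {fmt: mean(rates) for fmt, rates in groups.items()}


def relative_gap(worse: float, better: float) -> float:
    """How far `worse` trails `better`, as a fraction of `better`."""
    return (better - worse) / better


rates = mean_rate_by_format(ads)
gap = relative_gap(rates["question"], rates["demo"])
print(f"question hook trails demo hook by {gap:.0%}")
```

A plain average ignores how many ads and impressions sit behind each group, so with only a handful of ads a gap like this can be noise. That’s part of why the tool needs weeks of data before it’s trustworthy.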

The downside: it requires at least a few weeks of campaign data to be meaningful. Don’t expect much from it on day one.

Best for: DTC brands or agencies managing multiple active Meta campaigns.

Pricing: Included in Triple Whale Growth plan (~$129/month+, scales with ad spend).

Tool 3 — Best for Video Ad Hook Analysis

Vidmob sits in a different category. It’s technically an enterprise creative intelligence platform, but they’ve made the analysis layer more accessible in 2026. What sets it apart for video is the frame-by-frame breakdown: it doesn’t just score your video holistically, it tells you which specific second caused drop-off based on your platform data.

I connected a Google Ads video campaign, and it showed me a visual timeline: my video’s retention curve overlaid with scene-change markers. The drop happened exactly at second 4, right when my on-screen text appeared. Vidmob flagged it as “cognitive interruption”: too much text, introduced too fast, right after a fast-cut sequence. I hadn’t even noticed the pacing issue until I saw it visualized.
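Vidmob doesn’t publish how its timeline analysis works, but the core idea (find where the retention curve falls hardest, then check what’s on screen at that moment) fits in a few lines. A minimal sketch, assuming you can export a per-second retention curve; the curve, the marker seconds, and `steepest_drop` are all hypothetical.

```python
from typing import Sequence


def steepest_drop(retention: Sequence[float]) -> tuple[int, float]:
    """Return (second, share_lost) for the largest one-second fall.

    retention[i] is the share of starting viewers still watching at second i.
    """
    if len(retention) < 2:
        raise ValueError("need at least two points")
    # Loss between each second and the one before it.
    drops = [(i, retention[i - 1] - retention[i]) for i in range(1, len(retention))]
    return max(drops, key=lambda d: d[1])


# Hypothetical per-second retention for a 15-second ad (illustrative only).
curve = [1.00, 0.92, 0.86, 0.82, 0.64, 0.60, 0.57, 0.55,
         0.53, 0.51, 0.50, 0.48, 0.47, 0.45, 0.44, 0.43]
# Hypothetical seconds where a scene change or on-screen text appears.
markers = {2, 4, 9}

second, lost = steepest_drop(curve)
print(f"largest drop at second {second}: {lost:.0%} of viewers")
if second in markers:
    print("a scene-change or text marker lines up with the drop")
```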

Best for: Video-heavy brands, agencies, or in-house teams running YouTube or TikTok campaigns at scale.

Pricing: Custom enterprise pricing; request a demo.

Tool 4 — Best Free or Low-Cost Option

For solo creators and small teams, AdCreative.ai is where I’d start. It won’t give you the depth of Neurons or the diagnostic power of Triple Whale, but the creative scoring feature on its Starter plan is legitimately useful, especially for static ads and social media creatives.

It scores ads on a 0–100 scale across conversion likelihood, brand consistency, and visual appeal. In my testing, the scores correlated reasonably with actual CTR: not perfectly, but better than nothing. The platform also integrates with Google Ads workflows for basic performance data pulls.
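If you want to check the “correlates with CTR” part on your own account, a Pearson correlation between the tool’s scores and observed CTR is a reasonable first pass. A minimal sketch; the `pearson` helper is written out by hand rather than taken from any tool, and the scores and CTRs are hypothetical.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a series with no variation has no correlation")
    return cov / (sx * sy)


# Hypothetical tool scores (0-100) and observed CTR (%) for six ads.
scores = [52, 61, 68, 74, 80, 88]
ctr = [0.7, 0.9, 0.8, 1.1, 1.3, 1.2]

r = pearson(scores, ctr)
print(f"score vs CTR: r = {r:.2f}")
```

With only a handful of ads, r swings a lot, so treat it as a sanity check rather than proof.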

One thing I appreciated: it tells you what to change, not just that something’s wrong. Suggestions like “Increase contrast on CTA button” or “Reduce text by 30%” are specific, actionable, and beginner-friendly.

Best for: Freelancers, small businesses, or creators testing ad creative for the first time.

Pricing: Free trial available; paid plans from ~$29/month.

Feature Comparison Table (Analysis Type / Platform Integration / Free Tier / Actionability / Best For)

| Tool | Analysis Type | Platform Integration | Free Tier | Actionability | Best For |
|---|---|---|---|---|---|
| Neurons AI | Pre-launch scoring | None (manual export) | Demo only | ⭐⭐⭐⭐⭐ | Static/display creative validation |
| Triple Whale | Post-launch diagnosis | Meta Ads (native) | No | ⭐⭐⭐⭐⭐ | DTC brands with active campaigns |
| Vidmob | Video frame analysis | Meta, Google, TikTok | No | ⭐⭐⭐⭐ | Enterprise video campaigns |
| AdCreative.ai | Creative scoring | Google Ads (basic) | Yes (limited) | ⭐⭐⭐⭐ | Solo creators, small teams |

What Good Creative Analysis Actually Tells You

Hook Rate, Hold Rate, and What Benchmarks Matter

Here’s where I see creators get confused: they optimize for CTR when they should be looking at hook rate and hold rate first. Hook rate = % of people who watch past the first 3 seconds. Hold rate = % who make it to the halfway point.

Industry benchmarks as of Q1 2026 (based on aggregated data from Meta’s performance insights):

- Average hook rate for video ads: 55–65%

- Strong hook rate: 70%+

- Average hold rate (50% completion): 35–45%

- Strong hold rate: 50%+

If your hook rate is decent but your hold rate is low, the problem is in the middle of the video, not the opening. Good creative analysis tools will tell you this. Bad ones will just give you a single “engagement score.”
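Here’s a minimal sketch of computing both rates and placing them in the benchmark bands above. It assumes the denominator is viewers who started the video (some dashboards use impressions instead, which gives lower numbers), and it treats everything from the average floor up to the strong threshold as “average,” which is my simplification. `VideoCounts` and `hook_and_hold` are hypothetical names, and the counts are made up.

```python
from dataclasses import dataclass

# Floors taken from the benchmark list above, as fractions.
HOOK_AVERAGE, HOOK_STRONG = 0.55, 0.70
HOLD_AVERAGE, HOLD_STRONG = 0.35, 0.50


@dataclass
class VideoCounts:
    starts: int      # viewers who began playback (assumed denominator)
    past_3s: int     # still watching after 3 seconds
    past_half: int   # reached the 50% mark


def band(rate: float, average_floor: float, strong_floor: float) -> str:
    """Place a rate into a benchmark band."""
    if rate >= strong_floor:
        return "strong"
    if rate >= average_floor:
        return "average"
    return "below average"


def hook_and_hold(c: VideoCounts) -> dict:
    """Compute hook and hold rates and grade each against the benchmarks."""
    if c.starts <= 0:
        raise ValueError("starts must be positive")
    hook = c.past_3s / c.starts
    hold = c.past_half / c.starts
    return {
        "hook_rate": hook,
        "hold_rate": hold,
        "hook_band": band(hook, HOOK_AVERAGE, HOOK_STRONG),
        "hold_band": band(hold, HOLD_AVERAGE, HOLD_STRONG),
    }


# Hypothetical counts (illustrative only).
report = hook_and_hold(VideoCounts(starts=10_000, past_3s=6_200, past_half=2_900))
print(report)
```

That sample (a decent hook, a weak hold) is the pattern where the problem sits in the middle of the video.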

When AI Analysis Gets It Wrong — Known Failure Patterns

I’d be doing you a disservice if I didn’t mention this. AI creative analysis has real blind spots:

- Cultural nuance: Most models are trained heavily on US/Western ad data. If your target audience is Southeast Asian or LATAM, the emotional scoring is often off.

- Niche products: A tool trained on DTC beauty ads won’t score a B2B SaaS ad well.

- Audio dependency: Some video analysis tools completely ignore audio, which matters enormously for retention, especially in mobile-first formats where 69% of users watch with sound on.

Use the scores as directional signals, not verdicts.

How to Use Creative Analysis in Your Ad Workflow

Before Launch: Scoring and Iteration

My current workflow: I build 2–3 creative variations, run them through Neurons for attention heatmaps, then check AdCreative.ai for a quick score sanity check. If a variation scores more than 15 points lower, I revise before spending a dollar. This has genuinely cut my wasted early-budget spend.
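The “more than 15 points lower” check is easy to make explicit. The comparison point isn’t pinned down here, so this sketch assumes it means 15 points below the top-scoring variation; `needs_revision` and the scores are hypothetical.

```python
def needs_revision(scores: dict[str, float], max_gap: float = 15.0) -> list[str]:
    """Variations scoring more than `max_gap` points below the best one.

    Assumption: "lower" means lower than the top-scoring variation.
    """
    if not scores:
        return []
    best = max(scores.values())
    return sorted(name for name, s in scores.items() if best - s > max_gap)


# Hypothetical scores for three variations (illustrative only).
scores = {"A": 78, "B": 71, "C": 59}
print(needs_revision(scores))  # ['C']
```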

After Launch: Diagnosis and Creative Refresh

After 3–5 days live, I pull performance data into Triple Whale and look at hook rate first. If it’s below 55%, the opening needs a redesign. If hook rate is fine but hold rate drops at the midpoint, I use Vidmob’s timeline breakdown to pinpoint exactly where. Then I make one change, not five, so I know what moved the needle.
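The post-launch triage and the one-change rule can be written down as a tiny checklist. A minimal sketch: the 55% hook floor and the 3-day minimum come from the workflow above, while using 35% (the bottom of the average hold band) as “hold rate drops” is my assumption. `next_step`, `changed_fields`, and the attribute names are hypothetical.

```python
HOOK_FLOOR = 0.55  # from the workflow: below this, redesign the opening
HOLD_FLOOR = 0.35  # assumption: bottom of the "average" hold band


def next_step(days_live: int, hook_rate: float, hold_rate: float) -> str:
    """Triage rule for a live video ad, following the workflow above."""
    if days_live < 3:
        return "wait for more data"
    if hook_rate < HOOK_FLOOR:
        return "redesign the opening"
    if hold_rate < HOLD_FLOOR:
        return "locate the mid-video drop on a retention timeline"
    return "keep running"


def changed_fields(before: dict, after: dict) -> set:
    """Names of creative attributes that differ between two versions."""
    return {k for k in before.keys() | after.keys() if before.get(k) != after.get(k)}


# Hypothetical creative versions (illustrative attribute names).
v1 = {"hook": "question", "text_at_s": 4, "cta": "Shop now"}
v2 = {"hook": "question", "text_at_s": 6, "cta": "Shop now"}

print(next_step(days_live=4, hook_rate=0.62, hold_rate=0.29))
print(changed_fields(v1, v2))
```

If `changed_fields` returns more than one name, you won’t be able to tell which change moved the needle.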

FAQ

Q: What does AI creative analysis actually measure in an ad?

A: Depending on the tool, it measures visual attention distribution, emotional tone, text density, pacing, hook strength (first 3 seconds), and completion likelihood. Better tools combine multiple signals rather than relying on a single score.

Q: Can AI predict ad performance before launch?

A: Partially. Tools like Neurons can predict attention patterns and cognitive load with reasonable accuracy based on neuroscience models. But actual CTR depends on audience targeting, bidding, and market context that no pre-launch tool can fully account for. Treat it as a quality filter, not a guarantee.

Q: What’s the difference between AI creative scoring and A/B testing?

A: A/B testing tells you which ad won after you’ve spent real budget. AI creative scoring tells you why one might perform better before you spend, and it often surfaces specific elements to fix. They’re complementary, not competing, approaches.

Q: Which AI ad analysis tool integrates with Meta and Google Ads?

A: Triple Whale has the strongest native Meta integration for post-launch analysis. Vidmob supports Meta, Google, and TikTok. AdCreative.ai has basic Google Ads connectivity. Neurons currently requires manual export.

Verdict: Which Tool for Which Team Size

- Solo creator / freelancer: Start with AdCreative.ai’s free tier. It’s not perfect, but it’s genuinely useful and won’t drain your budget.

- Small team (2–10 people): Neurons for pre-launch validation + AdCreative.ai for quick iteration. Budget around $500–600/month total.

- Growth-stage DTC brand: Triple Whale is non-negotiable if you’re running Meta at any real scale. Pair it with Neurons for the creative pipeline.

- Agency or enterprise: Vidmob’s depth is worth the enterprise price if you’re managing video campaigns across multiple platforms. Frame-level analysis alone saves hours of guesswork.

I keep coming back to Neurons and Triple Whale as my personal daily pair: one tells me what to build, the other tells me whether it’s working. But honestly? Even using just one of these properly beats flying blind on gut instinct.
