I was deep in a rabbit hole of AI image tools one evening when a friend sent me a screenshot — a photorealistic image, obviously AI-generated, and obviously not safe for work. “Dora, did you know tools can do this now?” she asked. I did. But I realized I’d never actually sat down and explained what’s happening under the hood, what the real technical limits are, and — because this matters more than ever in 2026 — what the legal lines look like with real, verifiable specificity.

So here it is. Not a tool list. Not a how-to. A clear-eyed explainer on what an AI NSFW image is, how these systems generate them, where the boundaries sit technically and legally, and what any creator working in or adjacent to this space genuinely needs to understand.

Legal notice: This article is informational only and does not constitute legal advice. Laws vary by jurisdiction and are actively evolving. Consult a licensed attorney for your specific situation.

What Is an AI NSFW Image?

NSFW stands for “Not Safe For Work” — a catch-all label covering sexually explicit imagery, graphic nudity, and adult content broadly. An AI NSFW image is any image meeting that description generated in whole or significant part by a machine learning model rather than photographed or hand-drawn by a person.

Sounds simple. Gets complicated fast.

A base diffusion model doesn’t “know” what’s NSFW in a human sense. It learned from billions of image-text pairs, and those pairs included adult content. The model absorbed statistical patterns — what visual features correlate with which text descriptions. When you prompt it toward something explicit, it’s not “deciding” to create adult content. It’s pattern-matching to produce pixels that statistically fit your description.

That shapes everything downstream: how content filters work, why they can sometimes be circumvented, and why legal frameworks are scrambling to catch up.

For practical purposes, three categories exist:

Fully synthetic — no real person’s likeness is involved. The model generates a fictional body or character from scratch.

Likeness-based — a real person’s face or body is used as a reference, and the model generates content depicting them in explicit scenarios. This is drawing the most legal attention right now.

Style-transfer or “nudification” — an existing image of a clothed person is altered to remove or add clothing. The Grok incident of late December 2025 — when users generated non-consensual altered images of real women and girls directly through X — made this category impossible to ignore. EU regulators opened investigations under the Digital Services Act and GDPR within days.

The technical process behind all three categories is similar. The ethical and legal status is very different.

How AI Generates NSFW Images

Diffusion models in plain language

The engine behind most AI image generation is a diffusion model. During training, images are progressively corrupted with noise and the model learns to reverse that destruction. At generation time it starts from pure noise and gradually recovers a coherent image, guided by a text prompt. Because training spanned billions of image-text pairs, adult content included, the model learned those visual patterns alongside everything else. A closed commercial platform like Midjourney filters explicit prompts before generation. An open-source model running locally has no such filter by default. That gap is the core story of AI NSFW image generation.
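To make the "destruction process" concrete, here is a minimal sketch of the noising half of a diffusion model, using only the Python standard library. It assumes a DDPM-style linear noise schedule; the schedule endpoints (`1e-4` and `0.02`), the function names, and the four-number stand-in for an image are illustrative choices, not details from any particular product. The learned denoiser that reverses this process is not shown.

```python
import math
import random


def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear noise schedule (assumed)."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars


def add_noise(x0, t, alpha_bars, rng):
    """Forward process: blend the clean signal with Gaussian noise at step t."""
    signal_scale = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [signal_scale * x + noise_scale * e for x, e in zip(x0, noise)]
    return xt, noise


rng = random.Random(0)
alpha_bars = make_alpha_bars(num_steps=1000)
x0 = [0.5, -0.25, 1.0, 0.0]  # stand-in for a handful of pixel values

# Early step: mostly signal. Final step: almost entirely noise.
x_early, _ = add_noise(x0, t=0, alpha_bars=alpha_bars, rng=rng)
x_late, _ = add_noise(x0, t=999, alpha_bars=alpha_bars, rng=rng)
print(alpha_bars[0], alpha_bars[-1])
```

During training the model sees `xt` and the step index and is asked to predict the noise that was added. Generation runs the idea in reverse, starting from a fully noised sample and removing a little predicted noise at each step.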

Fine-tunes and LoRAs

A base model like Stable Diffusion is a generalist. Fine-tuning trains it further on a focused dataset so it specializes. LoRA (Low-Rank Adaptation) is the fine-tuning technique worth understanding because it’s everywhere in the AI image community.

The Hugging Face technical overview of LoRA explains the key mechanic: instead of retraining the entire multi-gigabyte model, LoRA freezes the original weights and injects pairs of small trainable low-rank matrices into specific attention blocks. The resulting file is a small fraction of the base model’s size, commonly anywhere from a few megabytes to a few hundred depending on the rank used. You stack it on top of a base model at inference time to shift its output toward whatever it was trained on.
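The size difference follows from simple arithmetic, sketched below in plain Python. This assumes the standard LoRA formulation, where a frozen weight matrix W gets a low-rank update scaled by alpha / rank. The 768-wide layer and rank of 4 are illustrative numbers, and the helper names are hypothetical rather than taken from any library.

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters: full fine-tune of one layer vs. a LoRA adapter for it."""
    full = d_out * d_in              # every entry of W is trainable
    adapter = rank * (d_in + d_out)  # B is d_out x rank, A is rank x d_in
    return full, adapter


def matmul(a, b):
    """Plain-Python matrix product for small nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(w, b, a, alpha, rank):
    """Effective weight at inference: W' = W + (alpha / rank) * (B @ A)."""
    scale = alpha / rank
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


full, adapter = lora_param_counts(d_out=768, d_in=768, rank=4)
print(full, adapter)  # 589824 6144

# Tiny worked example: a 2x2 identity weight nudged by a rank-1 update.
w = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]
a = [[3.0, 4.0]]
print(merge_lora(w, b, a, alpha=1.0, rank=1))  # [[4.0, 4.0], [6.0, 9.0]]
```

For this one layer the adapter holds roughly 1% of the parameters a full fine-tune would touch, which is why adapter files are so much smaller than the checkpoint they modify.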

In practice, LoRAs are how communities achieve highly specific styles, body types, or character aesthetics without the computational cost of full retraining. They’re also how certain communities add specific people’s likenesses — which is where serious ethical and legal problems begin.

Where AI NSFW Images Come From

Three main channels, in rough order of scale:

Open-source models running locally. Stable Diffusion variants remain the backbone. Someone with a capable GPU can run these offline, apply uncensored checkpoints or LoRAs, and generate explicit content with no intermediary. Platforms like CivitAI host thousands of models explicitly tagged for adult content.

Dedicated adult AI platforms. A wave of subscription services runs on uncensored model deployments, handling the infrastructure while users prompt through a browser. Content policies vary widely by platform and jurisdiction.

Misuse of consumer tools. This is the category making consistent headlines. Commercial tools have safety filters, but communities probe for workarounds. The Grok incident of December 2025 became the most visible example: users generated altered explicit images of real women and girls through X’s integrated chatbot with minimal friction. EU investigations under the DSA and GDPR followed almost immediately.

Real Capabilities and Real Limits

Photorealistic fully synthetic adult imagery is achievable with fine-tuned models (SDXL and successors). Anatomy, lighting, and style control have improved markedly. Persistent weaknesses include hands and fingers, teeth, keeping a character’s identity consistent across multiple images, and legible text within scenes. These remain common “AI tells.”

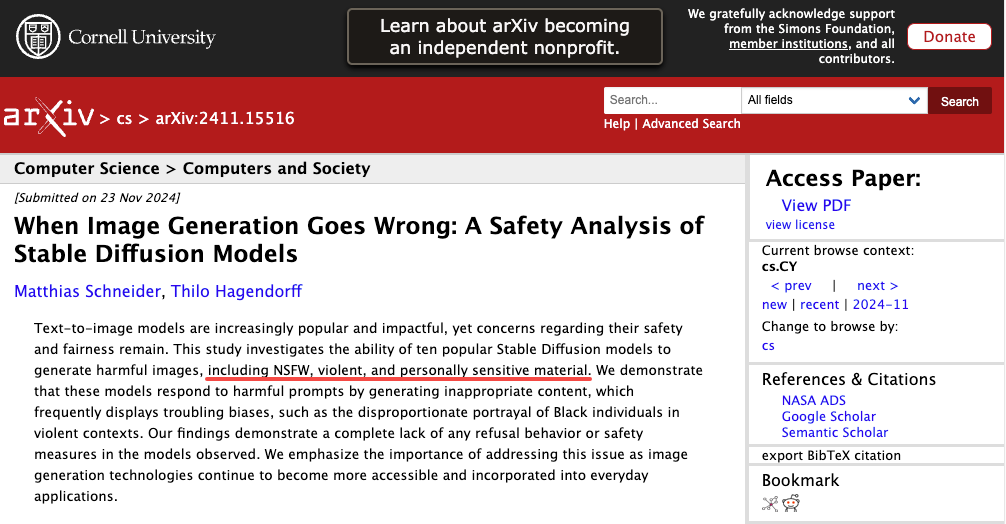

Detection has also advanced quickly. A 2025 study on arXiv showed that analyzing the noise patterns during the generation process can identify NSFW content with over 91% accuracy, even before the image is fully created. This suggests detection is rapidly catching up to generation.

Commercial platform filters are also real, not theatrical. API providers run classifiers at the prompt, generation, and output levels. None are perfect, but bypassing them consistently requires real effort and almost always violates terms of service.
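The three checkpoints described above can be pictured as a simple gate, sketched here in Python. This is a conceptual illustration only, not any provider's actual pipeline: `moderated_generate`, `Decision`, and every check function are hypothetical names, and the toy lambdas stand in for trained classifiers.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable, Optional


@dataclass
class Decision:
    allowed: bool
    blocked_at: Optional[str] = None  # "prompt", "generation", or "output"
    image: Any = None


def moderated_generate(
    prompt: str,
    prompt_ok: Callable[[str], bool],
    denoise_steps: Callable[[str], Iterable[Any]],
    step_ok: Callable[[Any], bool],
    output_ok: Callable[[Any], bool],
) -> Decision:
    # Stage 1: reject the request before any compute is spent.
    if not prompt_ok(prompt):
        return Decision(False, "prompt")

    # Stage 2: inspect intermediate states and stop early if one is flagged.
    state = None
    for state in denoise_steps(prompt):
        if not step_ok(state):
            return Decision(False, "generation")

    # Stage 3: check the finished image; a run that produced nothing is refused too.
    if state is None or not output_ok(state):
        return Decision(False, "output")
    return Decision(True, None, state)


# Toy stand-ins so the sketch runs end to end (all hypothetical).
def fake_steps(prompt):
    for i in range(3):
        yield {"step": i, "risk": 0.1 * i}


result = moderated_generate(
    "a landscape at dusk",
    prompt_ok=lambda p: "blocked-term" not in p,
    denoise_steps=fake_steps,
    step_ok=lambda s: s["risk"] < 0.5,
    output_ok=lambda s: s["risk"] < 0.3,
)
print(result.allowed, result.blocked_at)
```

Layering matters because each stage catches different failures: a prompt check is cheap but sees only words, while an output check sees the actual image but only after the compute has been spent.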

Legal and Ethical Boundaries

This is the section worth reading carefully, because the landscape shifted dramatically in 2025 and is still moving as of this writing.

Disclaimer: This is informational overview, not legal advice. Laws vary by jurisdiction and are actively evolving. Consult a licensed attorney for guidance on your specific situation.

United States — Federal law

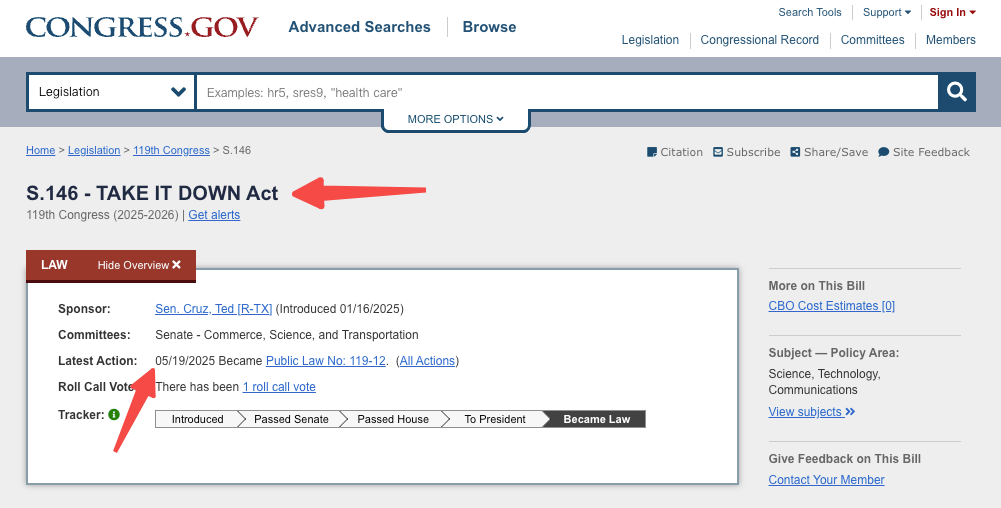

TAKE IT DOWN Act (Pub. L. No. 119-12, S. 146, 119th Cong., enacted May 19, 2025)

This is the first US federal law directly criminalizing the non-consensual publication of intimate imagery, including AI-generated deepfakes. Per the full statutory text at Congress.gov, the penalty structure is as follows:

Publishing non-consensual intimate imagery of an adult: fine and/or up to 2 years imprisonment

Publishing non-consensual intimate imagery of a minor: fine and/or up to 3 years imprisonment

Threatening to publish (involving digital forgeries of adults): up to 18 months

Threatening to publish (involving digital forgeries of minors): up to 30 months

The law requires platforms that host user content to set up a notice-and-removal process by May 19, 2026, and to remove reported content within 48 hours of a valid request. The Congressional Research Service’s legal analysis (CRS LSB11314, May 2025), prepared by the Library of Congress for Congress, confirms this penalty structure and notes that the FTC holds enforcement authority: platform non-compliance is treated as an unfair or deceptive trade practice under Section 18(a)(1)(B) of the FTC Act (15 U.S.C. § 57a).

The first federal conviction under this Act came in April 2026: an Ohio man who created and distributed explicit AI deepfakes of adults and children in his neighborhood.

Under the PROTECT Act of 2003 and 18 U.S.C. § 1466A, it is illegal to create or distribute obscene sexual images of minors, including fully AI-generated ones. Penalties are severe, with distribution carrying up to 20 years in prison. While a 2025 court case questioned whether private possession of purely synthetic material is illegal, that issue is still being appealed. Production and distribution remain clearly prosecutable.

The DEFIANCE Act, passed by the Senate in January 2026, would allow victims of non-consensual explicit deepfakes to sue for damages of up to $150,000, or $250,000 in more serious cases. It is still awaiting approval in the House.

European Union

On May 7, 2026, the Council of the EU and European Parliament reached a provisional agreement under Omnibus VII. Per the official EU Council press release No. 299/26:

“The co-legislators added a new provision in the AI act, prohibiting AI practices regarding the generation of non-consensual sexual and intimate content or child sexual abuse material (CSAM).”

This adds an explicit ban on nudifier AI systems to Article 5 of Regulation (EU) 2024/1689, applying from December 2, 2026 once formally adopted and published in the Official Journal.

State level (US)

By mid-2025, about 47 states had passed some form of deepfake law, though the details vary widely. States like California, Texas, and New York have stronger frameworks that combine criminal penalties, civil lawsuits, and platform reporting requirements. It’s important to check the rules in your specific state.

The commercial use question

Fully synthetic content without a real person’s likeness is treated differently from deepfakes, but it is not unregulated. Model licenses, likeness rights, local laws, and the policies of payment processors and hosting platforms all still apply. These are complex legal issues that usually require professional advice.

Common Misconceptions

“AI can’t generate realistic people.” It can. Modern fine-tuned models can create highly realistic human images. The real issue is consent and legality, not capability.

“Fully synthetic content is legally safe everywhere.” Not necessarily. Laws like 18 U.S.C. § 1466A can apply even to AI-generated images of minors, and some legal questions are still being decided in court. Rules also vary by country.

“Detection tools can’t identify AI images.” Detection has improved considerably. Systems analyzing generation patterns, metadata, and visual artifacts now report high accuracy in research settings.

“Private generation is always legal.” Not always. For explicit content involving minors, production and distribution are clearly illegal, and even private possession is still being debated in courts.

“All open-source models will stay uncensored.” Not guaranteed. While they often launch without filters, growing regulatory pressure is likely to change how they are distributed and used.

FAQ

Are AI NSFW images legal?

It depends on content, jurisdiction, and use. Non-consensual likeness-based content is heavily restricted. Purely synthetic adult content of fictional adults is generally in a safer zone but not unregulated. Sexual depictions of minors, including fully AI-generated ones, fall under federal criminal law such as 18 U.S.C. § 1466A.

Can I use them commercially?

Possibly, with serious caveats. Model license, likeness rights, adult content laws in your jurisdiction, and payment processor and hosting provider policies all apply independently. Treat this as a question for an attorney with relevant IP and content law experience, not a blog post.

Are AI-generated NSFW images detectable?

Increasingly, yes. Detection systems now exceed 90% accuracy in research settings, including the 2025 arXiv study that flagged NSFW content from noise patterns during generation. Metadata, artifact patterns, and noise-signal classifiers all contribute.

Final Thoughts

What hit me most while putting this together wasn’t the technical progress. It was how fast the legal ground is moving. Federal criminal legislation with specific, tiered prison terms. A civil damages bill through the Senate. The first federal conviction on record. And the EU moving to amend Regulation (EU) 2024/1689, per Council press release No. 299/26, to explicitly ban nudifier AI systems from December 2026.

If you’re a creator working in AI imagery, understanding these boundaries isn’t optional anymore. The technical capability has outpaced most people’s awareness of the legal risk. That gap is worth closing.
