Hi, I’m Dora. I keep a messy spreadsheet on my desk. One column is “AI image tools I’ve tested this year.” Another is “still allows what it allowed last quarter.” That second column has gotten shorter, and fast. Last month I went to re-run a workflow I’d documented in July, and the platform’s policy page now read like a different product. Same brand, same login, completely different rules. So when creators ask me which AI image generators that allow NSFW content are still safe bets in 2026, I tell them the honest answer first: the list is shorter than the internet thinks, and “allows” almost always comes with footnotes.

Here’s what I want to do in this post. Not rank tools. Not push you toward any specific platform. Just lay out what “NSFW-allowed” actually means across the major players right now, the three policy patterns I keep seeing repeat, and the checks I do before I trust any platform with a project. The policy landscape shifted hard between mid-2025 and early 2026. Midjourney’s updated Terms of Service (effective February 12, 2026) is one of several rewrites you can read for yourself, and if you’re operating on assumptions from a year-old YouTube tutorial, you’re flying blind.

A note before I start. I’m not a lawyer. Not sponsored by anyone in this post. Just someone who tests this stuff because it directly affects how I work.

What “Allows NSFW” Means Across Image Platforms

This is the part most “best NSFW AI” listicles skip, and it’s where most creators get burned.

“NSFW” isn’t one thing. The acronym is doing way too much work. On most platform policy pages it covers four very different categories: artistic nudity (think classical figure studies), suggestive or boudoir-style imagery, explicit sexual content, and violent or disturbing content. A platform can permit one of those and ban the other three. In practice, almost all of them do exactly that.

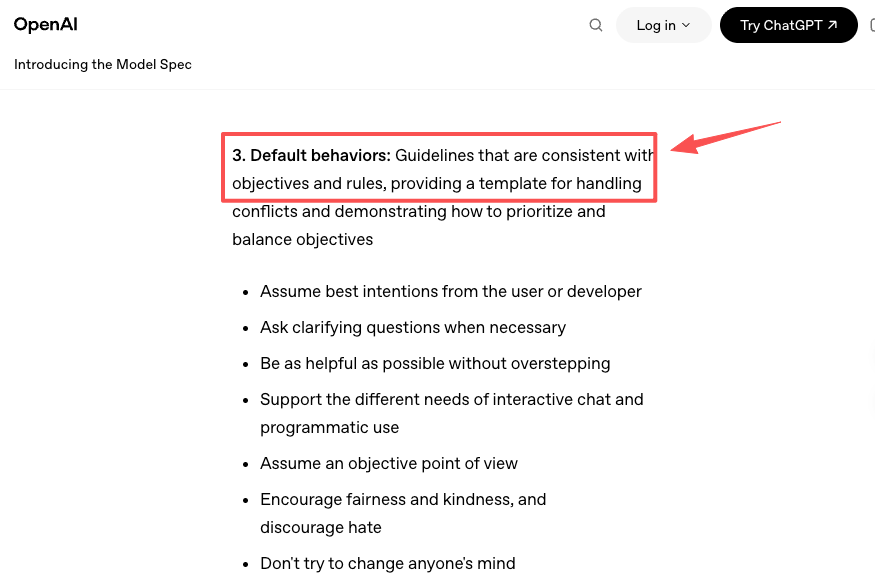

Then there’s the published policy vs. shipped model gap. A platform’s terms might technically permit mature artistic themes, but the model itself refuses prompts that go anywhere near them. OpenAI users have been complaining about this for over a year — that gap between “the rules say yes, the model says no” is exactly why OpenAI publicly signaled it would build an age-gated adult mode for ChatGPT, with deployment pushed into early 2026. The takeaway: reading the ToS isn’t enough. You also have to test what the model will actually generate.

And finally, scope. “Allowed on the platform’s hosted app” is very different from “allowed in the open-source weights you can run locally.” A model can be permissive in one place and locked down in another, even when the brand name is the same.

So when I say a platform “allows” something, I’m trying to be precise: I mean the published terms permit it, the deployed model will produce it, and the platform isn’t currently in the middle of a regulatory storm about it. All three. Otherwise it’s a maybe at best.
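If it helps to see that three-part test written down, here is a minimal sketch. The class name, field names, and the "not allowed" result are my own illustration, not any platform's terminology; the only rule taken from the paragraph above is that all three conditions must hold before I call something allowed.

```python
from dataclasses import dataclass


@dataclass
class PlatformCheck:
    """Hypothetical record of what I found for one platform and one content category."""
    terms_permit: bool        # the published terms permit it
    model_produces: bool      # the deployed model actually generated it in my tests
    regulatory_storm: bool    # the platform is in an active regulatory fight over it


def verdict(check: PlatformCheck) -> str:
    """Return 'allowed' only when all three conditions hold."""
    if check.terms_permit and check.model_produces and not check.regulatory_storm:
        return "allowed"
    # Assumption: if the terms themselves say no, I treat that as a plain no.
    if not check.terms_permit:
        return "not allowed"
    # Terms say yes, but the model refuses or regulators are circling.
    return "maybe"
```

For example, `verdict(PlatformCheck(terms_permit=True, model_produces=False, regulatory_storm=False))` comes back as a maybe, which is the "rules say yes, model says no" gap described earlier.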

Platform Policy Types

After testing most of the AI image platforms that allow NSFW content to varying degrees, I find they sort cleanly into three buckets. Knowing which bucket a tool is in tells you 80% of what you need to know before you even open it.

Strictly filtered platforms

This is the biggest group, and it includes most of the names you’d recognize.

Midjourney is the cleanest example. Its Community Guidelines state that all content must be Safe For Work, and the updated Terms of Service reaffirm that users may not generate NSFW imagery. Multi-layered moderation — keyword filters plus AI classifiers — backs it up. Even fairly innocent prompts that touch on certain words get blocked. I’ve had “boudoir lighting” rejected on a fully clothed portrait test. Not exaggerating.

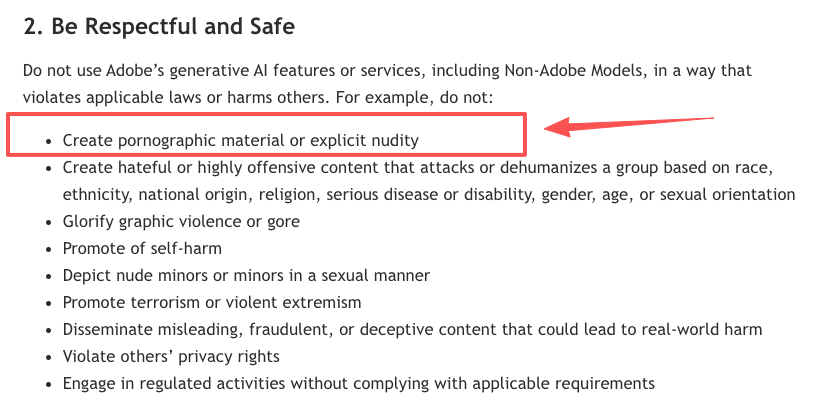

Adobe Firefly sits in the same bucket but for a different reason. Adobe’s pitch is “commercially safe,” and its Generative AI User Guidelines prohibit nudity, sexual content, and a long list of adjacent themes. Honestly, Firefly is so cautious that professional photographers have complained on Adobe’s own forums about it blocking edits to fully-clothed images. If your work touches anything ambiguous, Firefly will probably refuse before it even tries.

DALL·E (OpenAI) is similar — strict refusals on adult themes, with OpenAI signaling future age-gated changes but not yet delivering a shipped feature you can use as of early 2026.

If you need consistent commercial output and you’re not doing anything edgy, this group is where most creators should live. They’re predictable. They’re enterprise-friendly. They just won’t bend.

Adult-friendly hosted platforms

These are NSFW-friendly image platforms that permit mature content as a designed feature, not a loophole. The group is small.

Grok Imagine (xAI) is the highest-profile one. Its Spicy Mode, launched August 2025, explicitly permits partial nudity and mature themes for paid subscribers who pass age verification. It’s the only adult-content image generator embedded inside a mainstream social platform. It’s also why xAI has been under formal investigation by the California Attorney General since January 14, 2026 — the rollout produced a series of high-profile incidents, and policies have tightened multiple times since launch. As of early 2026, Spicy Mode still works, but it now blocks explicit pornography, real-person deepfakes, and any content involving minors. The feature is also disabled entirely in some regions, including Indonesia, Malaysia, and parts of the EU.

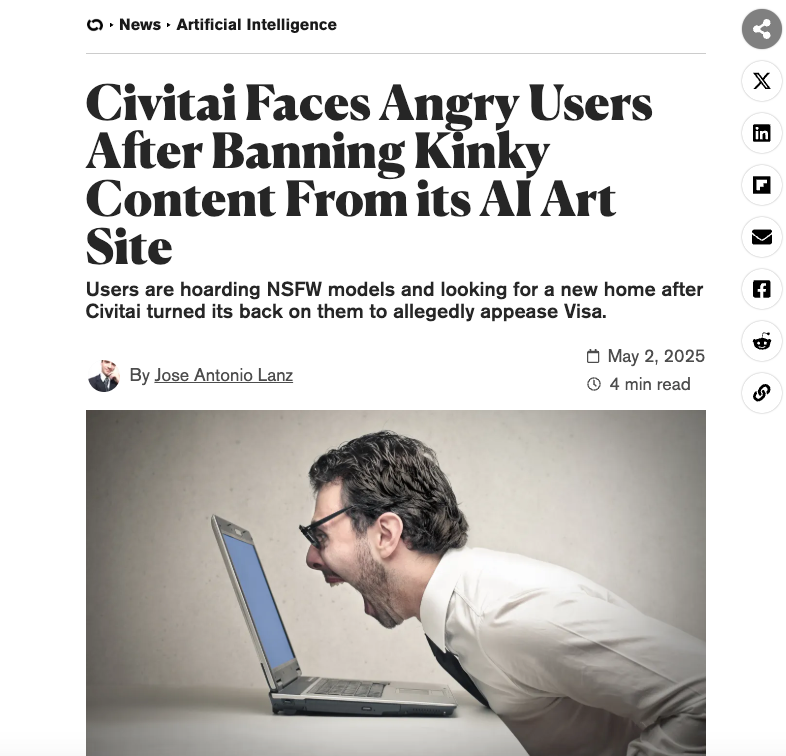

Civitai is the other big one, and it works differently. Civitai isn’t a single model — it’s a platform that hosts thousands of community-trained checkpoints and LoRAs, many of which are explicitly NSFW. Its Terms of Service permit mature content with age-gating, mandatory tagging, and a five-level content classification system. But — and this is important — Civitai tightened its rules sharply in April 2025, banning specific categories like incest, self-harm, and certain fetish content, and requiring metadata on all NSFW uploads. Decrypt’s coverage of the community backlash gives you a sense of how fast the rules moved.

That’s basically it for hosted platforms with real permission. Everything else either pretends to allow NSFW (then blocks most of it in practice) or is operating in a legal grey zone I won’t recommend.

Local and open-source options

The honest answer for creators who genuinely need unrestricted output is to run something locally. Stable Diffusion is the dominant choice here — the older SD 1.5 and SDXL weights are widely distributed, and the community has built an enormous ecosystem of NSFW-capable checkpoints around them on Civitai.

The catch: Stability AI itself has tightened its hosted offerings. As of July 31, 2025, Stability AI’s Acceptable Use Policy prohibits generating sexually explicit content through its hosted APIs and platforms. That doesn’t affect older weights you’ve already downloaded — but it does mean newer Stability models can’t be used for NSFW even locally if you’re operating under their license. Their annual transparency report lays out exactly how the company approaches moderation, and the direction of travel is toward more restriction, not less.

Local means you handle everything yourself: hardware, model selection, licensing, legal exposure. It also means you control the rules.

How to Check a Platform’s NSFW Policy

This is the actually-useful part. Forget what I or anyone else tells you about a platform — here’s the workflow I run before I trust a tool with a real project. It takes about ten minutes and it’s saved me from at least three “wait, what happened to my account” disasters.

Step 1. Find the actual policy document. Not a blog post about it. The real one. Look for “Terms of Service,” “Acceptable Use Policy,” “Community Guidelines,” and “Content Policy” — sometimes the rules are split across all four. Note the “last updated” date. If it’s more than six months old on a fast-moving platform, that’s already a yellow flag.

Step 2. Search the document for the exact terms that matter to your work. “Nudity,” “sexual,” “explicit,” “mature,” “adult,” “minors,” “deepfake,” “real person.” Read what the platform actually says — not what summaries say it says.

Step 3. Check for region-specific clauses. This catches almost everyone. A platform can permit something globally and block it entirely in the UK, EU, Australia, or parts of Asia depending on local age-verification laws. The terms usually call this out in a separate section.

Step 4. Test the model with a benign edge-case prompt. Pick something fully clothed but stylistically suggestive: a noir-style portrait, a swimwear shot, a renaissance figure study. See what gets blocked. This is your content-policy reality check. The gap between policy and shipped behavior is where most surprises live.

Step 5. Read the enforcement section. What happens when you violate? Warning? Temporary ban? Permanent ban? Loss of generated assets? Some platforms remove your past work; some keep it. This matters more than people think.

Step 6. Search for recent policy changes. Type the platform name plus “policy change 2026” into a news search. If there’s a major update or active regulatory investigation, you want to know before you commit a workflow to that tool.

I do this every time I evaluate a new tool. It feels paranoid until the day a platform you trusted suddenly rewrites its rules and your library is gone.
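Steps 1 and 2 are the easiest to script once you have pasted the policy text into a file or string. The sketch below is a rough helper under my own assumptions: the function names are made up, "six months" is approximated as 183 days, and the term list is simply the one from Step 2. It flags a stale "last updated" date and pulls a snippet of context around each term so you read the platform's wording, not a summary.

```python
import re
from datetime import date

# Search terms from Step 2. Matching is case-insensitive and prefix-based,
# so "sexual" also catches "sexually" and "adult" also catches "adults".
POLICY_TERMS = [
    "nudity", "sexual", "explicit", "mature",
    "adult", "minors", "deepfake", "real person",
]


def is_stale(last_updated: date, today: date, max_age_days: int = 183) -> bool:
    """Step 1: True when the policy is older than roughly six months (assumed 183 days)."""
    return (today - last_updated).days > max_age_days


def find_policy_terms(policy_text: str, terms=POLICY_TERMS, context: int = 60) -> dict:
    """Step 2: map each term that appears to the text snippets surrounding it."""
    hits = {}
    for term in terms:
        pattern = re.compile(r"\b" + re.escape(term), re.IGNORECASE)
        snippets = []
        for match in pattern.finditer(policy_text):
            start = max(0, match.start() - context)
            end = min(len(policy_text), match.end() + context)
            snippets.append(policy_text[start:end].strip())
        if snippets:
            hits[term] = snippets
    return hits
```

A term that never shows up is worth noting too. Silence in a policy is not permission, so I treat missing terms as a prompt to read the whole document by hand.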

Policy Comparison Table

Quick reference. Verify each policy directly before you rely on it — these things move.

| Platform | NSFW Status (Early 2026) | Key Conditions | Last Major Policy Update |
| --- | --- | --- | --- |
| Midjourney | Prohibited (SFW only) | Multi-layer filters; bans on artistic nudity | ToS effective Feb 12, 2026 |
| DALL·E (OpenAI) | Prohibited; adult mode in development | Age-gated mode signaled, not yet broadly shipped | Adult-content policy update late 2025 |
| Adobe Firefly | Prohibited | Strict commercial-safety filtering | Continually updated |
| Grok Imagine (xAI) | Permitted via Spicy Mode | Premium+ or SuperGrok subscription + 18+ verification; mobile primary; blocked in some regions | January 2026 tightening |
| Civitai | Permitted with restrictions | Age-gating, content tagging, banned subcategories | April 2025 + September 2025 |
| Stability AI hosted | Prohibited (since July 2025) | Older weights still usable locally | July 31, 2025 |
| Stable Diffusion (local, SD1.5/SDXL) | Technically permitted | License terms apply; user responsible for legal compliance | License terms vary by checkpoint |