Hi, I’m Dora. I was testing a prompt in CrePal last week when someone in Discord I follow dropped a link to a new model release — LTX 2.3. The thread blew up. “Free. Local. 22B. Audio + video in one pass.” I stopped what I was doing and went down the rabbit hole for the next two hours. Here’s everything I found throughout the process.

What LTX 2.3 is (22B DiT, dev vs distilled variants)

LTX 2.3 is a 22-billion-parameter DiT-based (Diffusion Transformer) audio-video foundation model from Lightricks. It’s fully open-source under Apache 2.0, which means free for most creators — including commercial use for organizations under $10M annual revenue.

The big deal: it generates both video and audio together in a single pass. The sound, the motion, and the visuals all come from the same model at the same time. Most tools you’ve used separate those two steps. LTX 2.3 doesn’t.

The base model uses Google’s Gemma 3 12B as its text encoder — the part that reads your prompt and figures out what to generate.

Key Specs at a Glance

| Spec | Detail |

| Parameters | 22 billion |

| Architecture | Diffusion Transformer (DiT) |

| Release date | March 5, 2026 |

| License | Apache 2.0 (free commercial use under $10M revenue) |

| Native output | 480p–1080p, 5–20 seconds |

| With upscaler | Up to 4K |

| Frame rate | Up to 50 FPS |

| Audio | Synchronized, generated in same diffusion pass |

| Portrait mode | Native 9:16 support |

| Local VRAM (recommended) | 32GB+ for full BF16; ~18–20GB for FP8 quantized |

What’s New vs LTX 2 (upscaler, audio, monorepo, Desktop App)

LTX 2 established architecture. LTX 2.3 is a point release, but the improvements are structural, not cosmetic.

Rebuilt VAE. The most consequential change is a rebuilt VAE with a redesigned latent space. In practice this means sharper fabric, cleaner hair, and stable chrome reflections during camera moves — the kinds of fine-detail tests that previous open-source models failed consistently.

4x larger text connector. Multi-subject prompts with specific spatial relationships — “a red car parked behind a white truck at night” — now hold across the full clip rather than drifting after 3–4 seconds. This was one of the most frustrating failure modes in earlier versions.

Cleaner audio. Audio quality is cleaner thanks to a new HiFi-GAN vocoder. Because LTX 2.3 produces audio within the same diffusion pass as the video, a door slam lands on exactly the right frame. It’s synchronized at the model level.

Native portrait mode. Instead of generating landscape video and cropping it later, LTX 2.3 can generate vertical video directly — especially useful for YouTube Shorts, Instagram Reels, and TikTok.

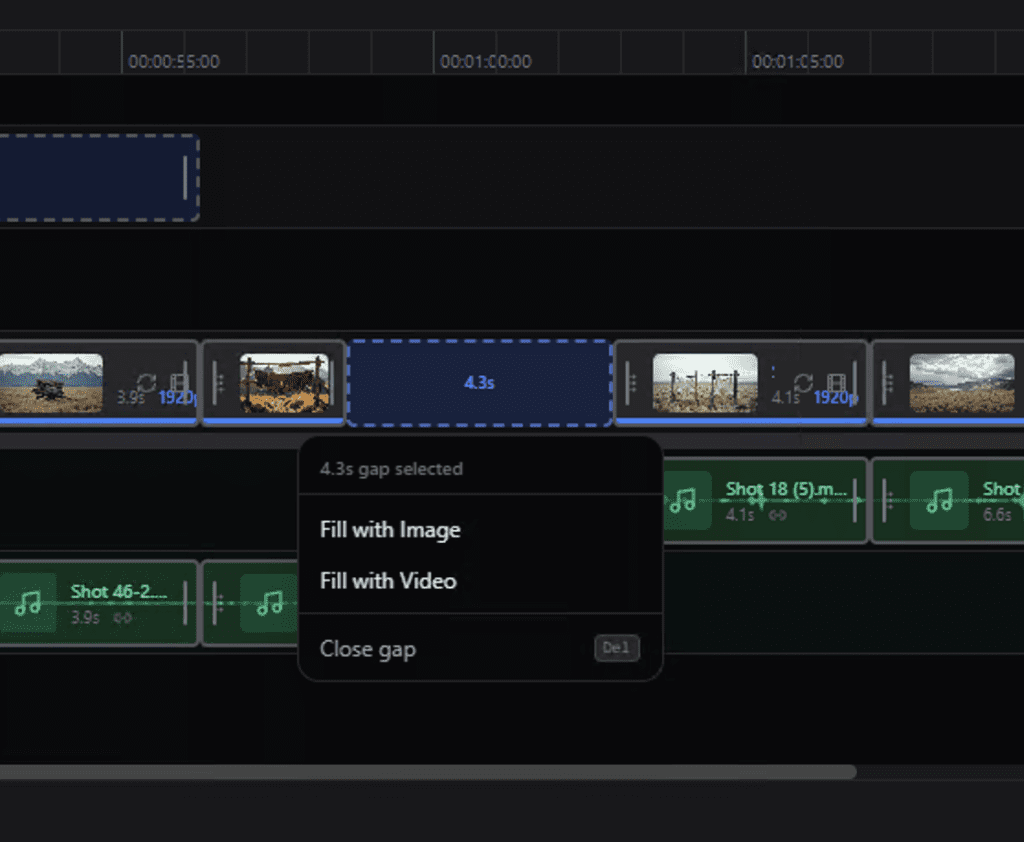

LTX Desktop App.The LTX Desktop app provides a free, local generation workspace with text-to-video, image-to-video, and timeline tools — no cloud account needed. This shipped alongside 2.3 and is probably the fastest way to get started without touching Python.

Monorepo + LoRA training. Lightricks provides reproducible LoRA and IC-LoRA training through the LTX-2 Trainer, with motion, style, and likeness training completing in under an hour in many configurations.

What You Can Generate

LTX 2.3 handles the full generation stack in one model:

- Text-to-video (T2V): Describe a scene, get a clip with matched audio.

- Image-to-video (I2V): Start from a still image and animate it. Note: I2V has known instability bugs in the current release — the model occasionally freezes or over-applies the Ken Burns effect.

- Multi-stage / chained generation: Generate a base clip, upscale it, chain sequences together for longer narratives.

- Portrait video: Native 9:16 output up to 1080×1920 — no cropping required.

What you can’t do well yet: complex crowd physics, water simulation, and emotional tonal subtlety in faces still lag behind top closed systems like Veo 3.1. This isn’t a knock — it’s just honest.

Free Access Paths (Online vs Local)

Local (truly free, unlimited):

The base optimized package in FP8 format weighs about 18–20GB. This includes the diffusion weights, the VAE, and the text encoders. The full uncompressed BF16 version for server hardware takes up around 40GB.

The official requirements ask for 32GB VRAM and Python 3.12+ with CUDA 12.7+. In practice, the community has pushed this lower. One user ran the Q4_K_S GGUF on an RTX 3080 (10GB VRAM) and got a 960×544, 5-second clip with audio in about 2–3 minutes — accepting roughly 5–8% softening in fine detail versus the BF16 baseline, but with identical motion coherence.

Mac users: Apple Silicon M2/M3/M4 can use the Metal framework (MPS) for hardware acceleration. Expected generation time for a 10-second 1080p clip on an M3 Max is ~4–6 minutes. It works, but it’s slower than a CUDA GPU.

LTX Desktop App (easiest entry point): The easiest way to get started is using the pre-built binaries with an integrated UI. You don’t need to install Python or set up virtual environments. The installer automatically downloads the necessary base model weights (~18GB). If your GPU isn’t powerful enough, the app can offload to their cloud API — but that part is paid.

ComfyUI: ComfyUI v0.16.1+ includes native LTX 2.3 support with day-0 templates covering text-to-video, image-to-video, and multi-stage generation. Key nodes include LTXVSeparateAVLatent, LTXVAudioVAEDecode, CreateVideo, and SaveVideo.

Model variants:ltx-2.3-22b-dev is the full BF16 model, trainable, intended for fine-tuning and research. ltx-2.3-22b-distilled is the 8-step distilled version for faster inference with lower memory overhead. For most creators, distilled is the right starting point.

Limitations and Trade-offs

I want to be straight with you on this:

- Hardware barrier is real. 32GB VRAM for the recommended local setup means an RTX 3090/4090 or workstation GPU. Consumer setups under 12GB can run quantized versions but require 32GB system RAM.

- I2V instability. Image-to-video generation has known bugs in the current release — freezing and over-animation are documented issues.

- Audio is foley, not music. The synchronized audio is good for ambient sounds, environmental effects, and general soundscapes. It’s not yet competitive with dedicated music generation models or voice synthesis tools. Think of it as automatic foley rather than full audio production.

- Generation speed. On a flagship GPU like an RTX 4090, generating a base clip of 10 seconds at 1080p takes about 4–6 minutes. Not instant.

- Complex physics. Water, crowds, and realistic facial emotion still have quality gaps versus closed commercial models.

Who Should Use LTX 2.3

Use it if:

- You have a capable GPU (24GB+ VRAM) and want unlimited local generation at zero cost per clip

- You’re a developer or researcher who wants to fine-tune or build on top of an open model

- You need native portrait video for social content and don’t want to crop

- You want synchronized ambient audio without a separate tool

Skip it (for now) if:

- You’re on a laptop or sub-16GB GPU and want consistent results fast

- You need to polish I2V — the instability issues are real

- Your workflow depends on voice or music generation (use a dedicated tool for that)

FAQ

Q: What’s the difference between LTX 2 and LTX 2.3?

LTX 2 established architecture. LTX 2.3 upgrades the VAE for sharper details, adds a 4x larger text connector for better prompt adherence, fixes Ken Burns over-application in I2V, introduces native portrait mode, and ships a new HiFi-GAN vocoder for cleaner audio.

Q: Is LTX 2.3 free for commercial use?

Yes, under Apache 2.0 — free for commercial use for organizations under $10M annual revenue. Above that threshold, contact Lightricks for commercial licensing terms.

Q: Can I run LTX 2.3 on a Mac?

Yes, with limitations. Apple Silicon M2/M3/M4 supports inference via MPS (Metal). Expect 4–6 minutes per 10-second clip at 1080p on an M3 Max. AMD and Mac community ports exist but are experimental and slower as of March 2026.

Q: What’s the difference between the dev and distilled variants?

ltx-2.3-22b-dev is the full BF16 model for fine-tuning and research. ltx-2.3-22b-distilled runs inference in 8 steps (vs the dev model’s full denoising schedule) — faster generation, lower memory, slightly lower quality ceiling. For most creators, distilled is the better starting point.

Q: Does LTX 2.3 work in ComfyUI?

Yes. ComfyUI v0.16.1+ includes native support with day-0 templates for T2V, I2V, and multi-stage generation.

Previous Posts: